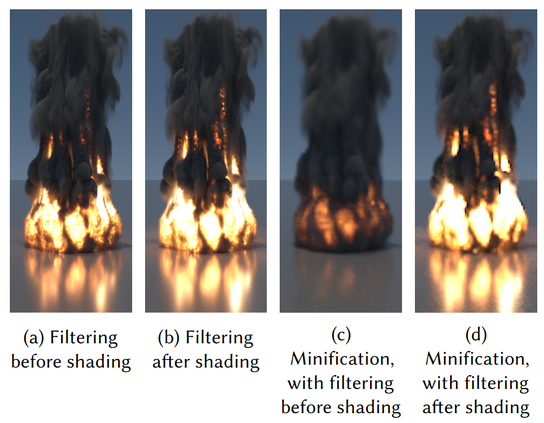

However, this is not how texture filtering is typically taught and applied: we filter textures first, then "shade" (apply non-linear functions). This introduces bias and error, and often destroys the intended appearance. 2/N
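Here is a toy illustration of that bias. It is a sketch, not code from the paper. The texel values and the `shade` function are hypothetical, with a steep power curve standing in for a non-linear BSDF term. At a point halfway across a sharp edge, bilinear filtering followed by shading gives a very different answer from shading each texel and then filtering.

```python
def bilinear_weights(fx, fy):
    """Weights of the 2x2 texel footprint, ordered (x0,y0), (x1,y0), (x0,y1), (x1,y1),
    for fractional offsets fx, fy in [0, 1]."""
    return [(1 - fx) * (1 - fy), fx * (1 - fy), (1 - fx) * fy, fx * fy]


def shade(v):
    """Hypothetical non-linear shading function (a steep power curve)."""
    return v ** 8


def filter_then_shade(texels, fx, fy):
    """Common practice: filter the texture values, then shade once."""
    w = bilinear_weights(fx, fy)
    filtered = sum(wi * ti for wi, ti in zip(w, texels))
    return shade(filtered)


def shade_then_filter(texels, fx, fy):
    """Filtering after shading: shade every texel, then filter the shaded results."""
    w = bilinear_weights(fx, fy)
    return sum(wi * shade(ti) for wi, ti in zip(w, texels))


# A vertical edge: left column dark (0.0), right column bright (1.0).
texels = [0.0, 1.0, 0.0, 1.0]
print(filter_then_shade(texels, 0.5, 0.5))  # 0.00390625 (= 0.5 ** 8)
print(shade_then_filter(texels, 0.5, 0.5))  # 0.5
```

The filter-first result is more than 100 times darker than the average of the two shaded sides. The filtered midpoint gets pushed through the curve, and the bright side's contribution is lost.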

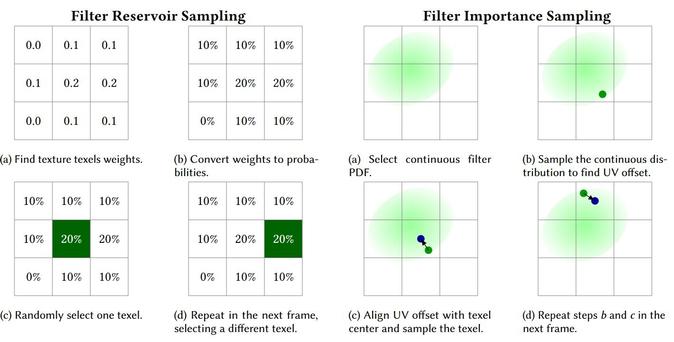

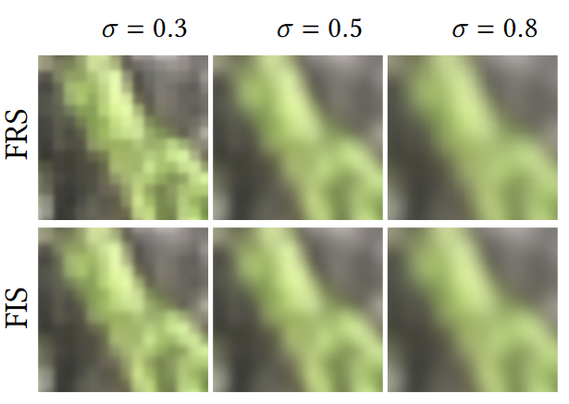

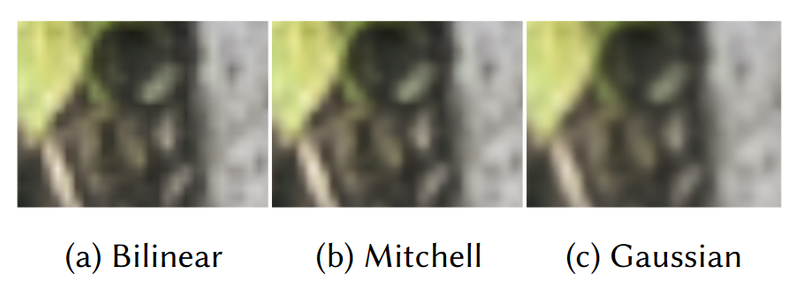

To make filtering after shading practical and fast, we implement it through stochastic filtering and propose unbiased Monte Carlo estimators, together with two families of low-variance methods. 3/N
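The basic idea behind a stochastic texture filter fits in a few lines. This is a generic one-sample estimator, not either of the paper's low-variance families. It picks a single texel of the 2x2 bilinear footprint with probability equal to that texel's bilinear weight, then shades only that texel. The expected value equals the filter-after-shading result, so averaging samples converges to it. The samples can be averaged across pixels, across frames, or by a denoiser. The `shade` function and texel values are hypothetical.

```python
import random


def bilinear_weights(fx, fy):
    """Bilinear weights of the 2x2 footprint, ordered (x0,y0), (x1,y0), (x0,y1), (x1,y1)."""
    return [(1 - fx) * (1 - fy), fx * (1 - fy), (1 - fx) * fy, fx * fy]


def shade(v):
    """Hypothetical non-linear shading function."""
    return v ** 8


def stochastic_bilinear_shade(texels, fx, fy, rng):
    """One-sample estimator: choose texel i with probability w_i and shade only it.
    E[result] = sum_i w_i * shade(texels[i]), i.e. filtering after shading,
    while only one texel is fetched and shaded per sample."""
    w = bilinear_weights(fx, fy)
    i = rng.choices(range(4), weights=w)[0]
    return shade(texels[i])


def exact_shade_then_filter(texels, fx, fy):
    """Reference: shade all four texels, then apply the bilinear weights."""
    return sum(wi * shade(ti) for wi, ti in zip(bilinear_weights(fx, fy), texels))


rng = random.Random(1234)  # fixed seed for reproducibility
texels = [0.0, 1.0, 0.0, 1.0]
n = 100_000
estimate = sum(stochastic_bilinear_shade(texels, 0.3, 0.7, rng) for _ in range(n)) / n
exact = exact_shade_then_filter(texels, 0.3, 0.7)
print(f"estimate={estimate:.4f} exact={exact:.4f}")
```

With a single sample per pixel, the result is noisy: each sample here is either 0 or 1. The abstract below notes that this kind of error is handled well by spatiotemporal denoising or moderate pixel sampling rates.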

We discuss the limitations of these techniques and the cases where we do not recommend filtering after shading (FAS). 4/N

Curious?

Check out our paper and presentation slides: https://research.nvidia.com/labs/rtr/publication/pharr2024stochtex/

We also made Shadertoy demos of the two families of stochastic techniques: https://www.shadertoy.com/view/clXXDs https://www.shadertoy.com/view/MfyXzV 6/6

Filtering After Shading with Stochastic Texture Filtering | NVIDIA Real-Time Graphics Research

2D texture maps and 3D voxel arrays are widely used to add rich detail to the surfaces and volumes of rendered scenes, and filtered texture lookups are integral to producing high-quality imagery. We show that applying the texture filter after evaluating shading generally gives more accurate imagery than filtering textures before BSDF evaluation, as is current practice. These benefits are not merely theoretical, but are apparent in common cases. We demonstrate that practical and efficient filtering after shading is possible through the use of stochastic sampling of texture filters.

Stochastic texture filtering offers additional benefits, including efficient implementation of high-quality texture filters and efficient filtering of textures stored in compressed and sparse data structures, including neural representations. We demonstrate applications in both real-time and offline rendering and show that the additional error from stochastic filtering is minimal. We find that this error is handled well by either spatiotemporal denoising or moderate pixel sampling rates.

PS. If you read the tech report or an earlier version of the paper, I recommend reading the new one. We improved it substantially, turning one small-conference "rejection" into an ACM conference "best paper", and discovered new theory, limitations, and practical advice. :)

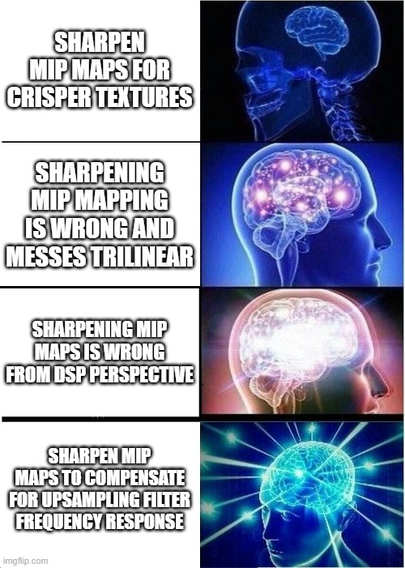

PS 2. While writing this paper, we found a DSP explanation for something that had puzzled me for a decade: why do the literature and practice recommend upsampling in sRGB/gamma space rather than in linear space? See the paper for details! I might blog about it as well. :)

Did understanding why we were misjudged motivate us to make the paper better and clearer? Also yes. :)

In hindsight, I am happy about it, as I believe the paper has a much higher chance of being impactful now.

@BartWronski I just finished reading this today, after your excellent presentation at I3D last week.

Great work! The paper is very clear and well written and the ideas are really exciting.

@mattpharr @jesta88 @aeva I put "everyone" in quotation marks as a tongue-in-cheek suggestion that it's not really everyone, and that it's not that obvious, because of the way we teach and practice it. :)

"Bilinear is free? Sure, I'll use it! Everyone uses it? It must be the right thing to do!"

Once it's not free (our motivation was filtering under a new compression format) and you see the different outcome, you start to question yourself and everything you know: "which way is correct?!" :)

@aras yes, we had a tech report with our initial findings, and a ton of folks pointed us to some great precedents in old games. We knew all the academic literature, but game developers often just use these techniques and don't even report them. :)

The coolest examples were an old Star Trek game and the first Unreal; we had no idea! They helped us a lot to contextualize our research. :)

Game developers, please report your findings and even "hacks"! :)

@aras yeah, and some of them had even worse compression ratios than yours. :( Blog posts by non-academics get close to zero visibility. One academic even explicitly refused to cite my blog post about a method similar to the one in their paper "because it's not peer reviewed". :(

FWIW, I think if you wrote a LaTeX version of your post with references and put it on arXiv, you'd get a ton of citations. At many conferences, it would even have a chance at a "best paper" award. :)

https://tellusim.com/improved-blue-noise/

https://acko.net/blog/stable-fiddusion/

Putting something together in LaTeX and submitting it there is not much work, especially since Overleaf has gotten really good recently (including some WYSIWYG editing!) and is free for a single user and small projects. If you need "vouching" (endorsement) before submitting to the arXiv cs.GR category, I'm happy to recommend you! :)

"What do you mean games already do robust temporal multi-frame super-resolution???"

@BartWronski @aras @demofox yep, I've done two work-from-home stints (one in 2010, another in 2020), and I burned out both times.

Aras, take care of yourself; it's easy to end up in a bad spot.

As for all the folks around here saying "come visit!": every rendering team on the planet would love to have you show up at their office for a week and have random discussions over lunch.

You could really start the nerdiest world tour ever. And we'd all love it 😄

@jon_valdes @BartWronski @aras @demofox indeed, that's a pretty awesome idea. And I'm sure it could even be crowd-funded!

For now, maybe saving the date for https://www.graphicsprogrammingconference.nl/ is a good step? 🙂