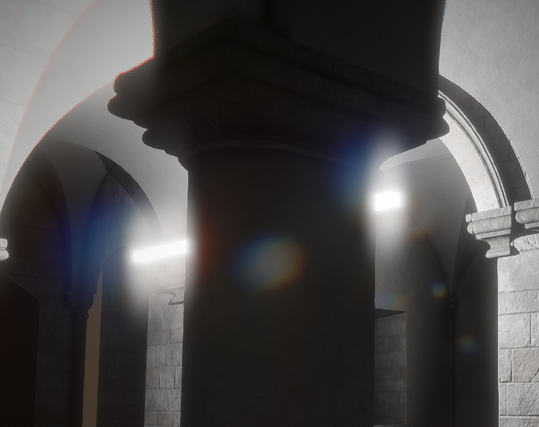

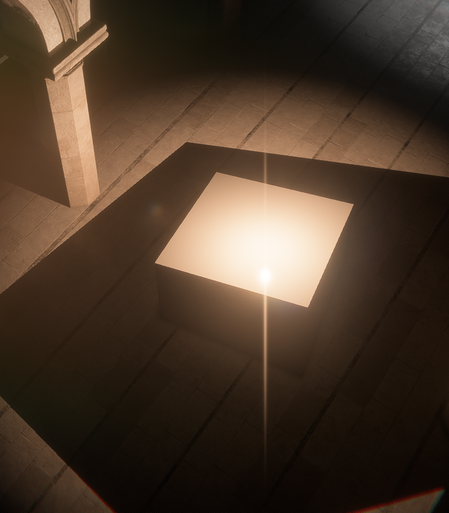

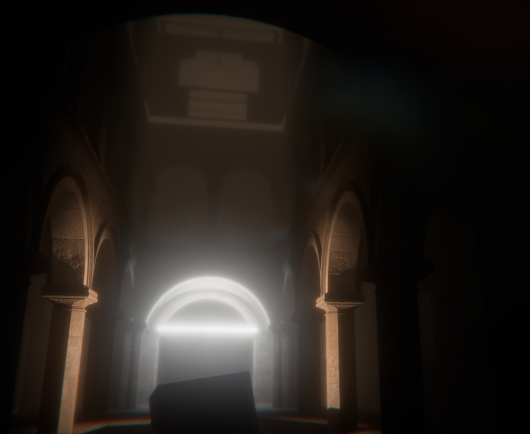

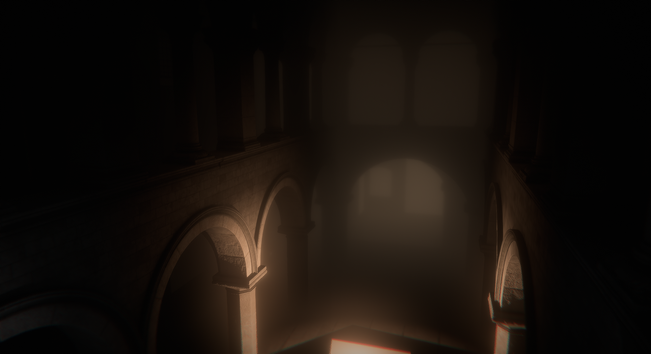

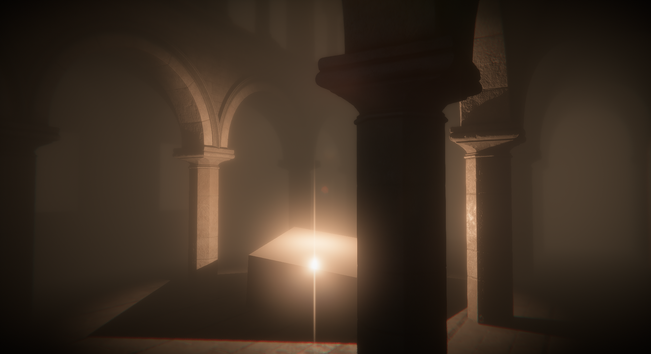

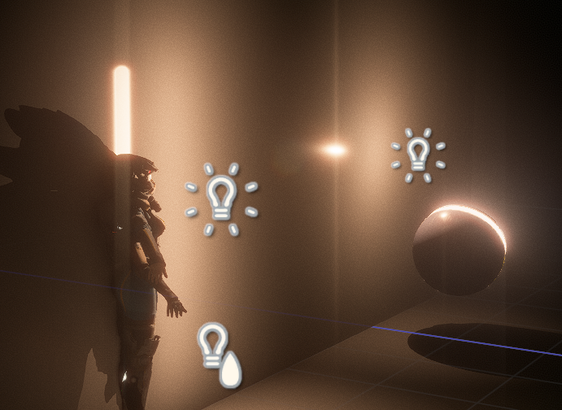

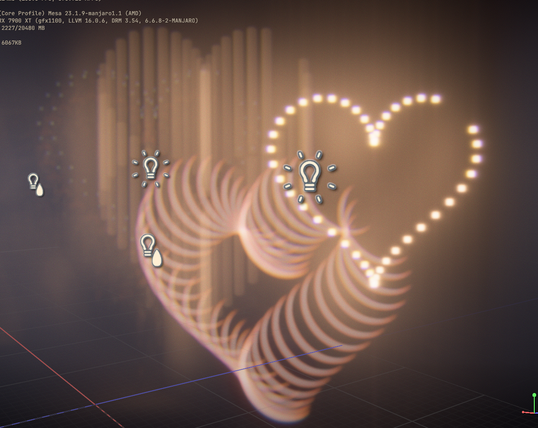

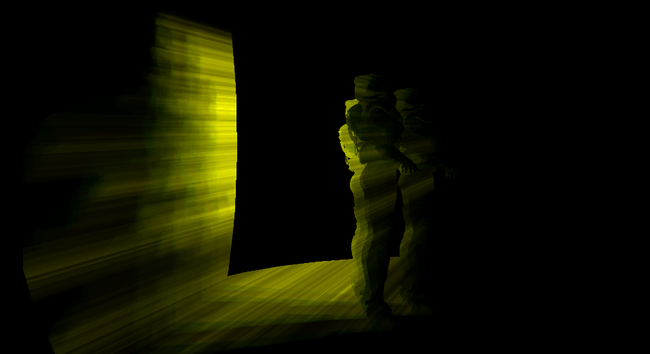

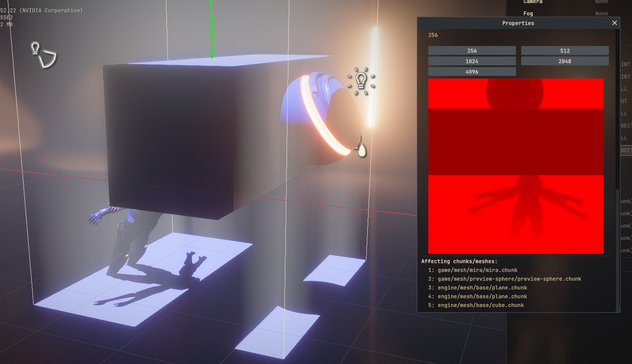

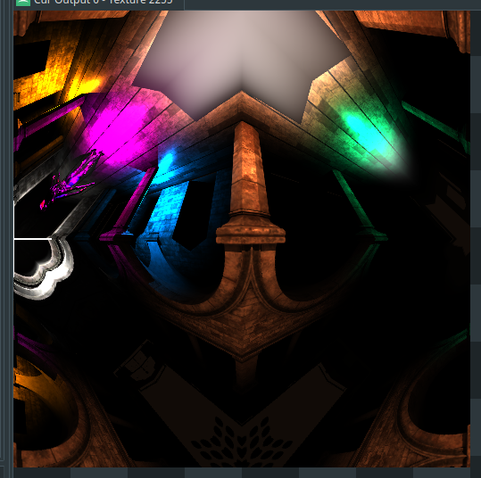

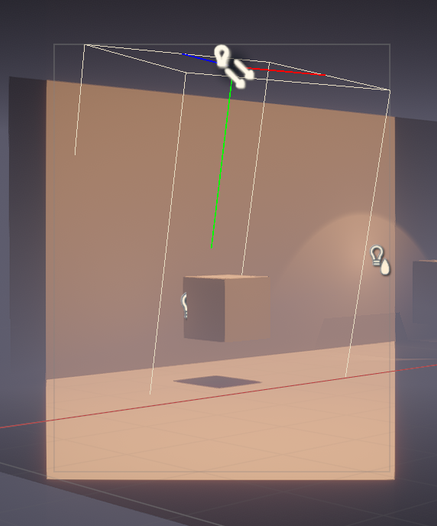

Back on Ombre... and I decided to play with lens flares again (I know 🤪).

This time I wanted to try out the little radial projection trick from John Chapman's article (https://john-chapman.github.io/2017/11/05/pseudo-lens-flare.html) to create fake streaks. It's a good start, but I will need to think about how to refine the effect. It already looks nice!
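
For readers who have not seen the trick, here is a rough CPU-side sketch of the general idea in Python. It is not the Ombre shader and not a copy of the article's code. The names `make_noise`, `sample_wrapped`, and `streak_mask` are hypothetical, the noise table is an assumed stand-in for a small 1D texture, and using `atan2` for the angle is my own choice. The point is only that the lookup depends on the angle around the screen centre, so every pixel along a ray from the centre gets the same value, which reads as a streak.

```python
import math
import random


def make_noise(n=64, seed=1):
    """Hypothetical 1D noise table (stand-in for a small noise texture)."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]


def sample_wrapped(noise, t):
    """Linearly interpolate the table at coordinate t, wrapping around."""
    n = len(noise)
    x = (t % 1.0) * n
    i = int(x) % n
    f = x - int(x)
    return noise[i] * (1.0 - f) + noise[(i + 1) % n] * f


def streak_mask(u, v, noise, offset=0.0):
    """Streak intensity at screen UV (u, v), both in [0, 1]."""
    dx, dy = u - 0.5, v - 0.5
    # Angle around the screen centre, remapped from [-pi, pi] to [0, 1].
    angle = math.atan2(dy, dx) / (2.0 * math.pi) + 0.5
    # Only the angle is used, so the value is constant along each ray.
    return sample_wrapped(noise, angle + offset)


noise = make_noise()
near = streak_mask(0.6, 0.6, noise)  # close to the centre
far = streak_mask(0.8, 0.8, noise)   # further out on the same diagonal
```

In a real shader the result would typically be multiplied into the flare colour, and `offset` could be driven by the camera so the streaks shift as the view moves. Both of those are assumptions about usage rather than something stated above.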