The best* part of this piece is the content farmer who responded to a request for comment by bitching about how poorly his AI garbage content farm performs

* for suitably broad values etc.

https://www.404media.co/email/5dfba771-7226-48d5-8682-5185746868c4/?ref=daily-stories-newsletter

I for one am *shocked* that "have an extremely confident bullshitter summarize my search results" was not the killer app Microsoft expected

"Dean.Bot was the brainchild of Silicon Valley entrepreneurs Matt Krisiloff and Jed Somers, who had started a super PAC supporting Phillips" - Were these techbros so high on their own supply they thought a chatbot imitating their candidate was a good idea, or was it just a convenient way to funnel campaign funds into their pals pockets? ¯\_(ツ)_/¯

https://wapo.st/3ObSl0i

Key comment from NewsGuard's McKenzie Sadeghi in this @willoremus piece "But sites that don’t catch the error messages are probably just the tip of the iceberg" - for every Amazon seller who's too lazy to even check if the item description is an error message, there's gotta be some substantial number who do
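For a sense of how low that bar is, here is a minimal sketch of the kind of check a seller (or marketplace) could run before publishing a description. The phrase list and the function name `looks_like_llm_error` are hypothetical, picked for illustration, not anyone's actual detection method.

```python
import re

# Hypothetical phrase list: illustrative refusal boilerplate, not a vetted set.
BOILERPLATE_PATTERNS = [
    r"as (?:i am )?an ai language model",
    r"i'?m (?:very )?sorry,? but i (?:cannot|can't|don't)",
    r"i cannot fulfill (?:this|that) request",
]
_BOILERPLATE_RE = re.compile("|".join(BOILERPLATE_PATTERNS), re.IGNORECASE)


def looks_like_llm_error(text: str) -> bool:
    """Return True if the text contains verbatim chatbot refusal boilerplate."""
    # Normalize curly apostrophes so "don’t" and "don't" match the same pattern.
    normalized = text.replace("\u2019", "'")
    return _BOILERPLATE_RE.search(normalized) is not None
```

A check like this only catches verbatim boilerplate, which is the point of the iceberg comment: anything generated without an obvious error string sails straight through.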

I'd still like to see a deeper look at why using #LLM #AI descriptions makes economic sense for these sellers

"Sure, I can keep Thesaurus.com open in a tab all the time, but it’s packed with banner ads and annoyingly slow. Having my GPT open is better: there are no ads, and I can scroll up to my previous queries" - Notably, this has nothing to do with GPT being "#AI", it's just the general shittiness of the ad-supported web. A good thesaurus app integrated with the author's editor would appear serve their use case about as well

https://www.theverge.com/24049623/chatgpt-openai-custom-gpt-store-assistants

#LLM #AI hype and reality collide again

https://www.nature.com/articles/d41586-024-00349-5

Yes, if you choose to provide an #AI BS machine as a support option on your website, you may in fact be liable for the BS answers it gives to your customers

(also, if you're a multi-billion dollar company, you may avoid reputational harm by not trying to screw a person out of $650 for a ticket to their grandma's funeral ¯\_(ツ)_/¯)

https://bc.ctvnews.ca/air-canada-s-chatbot-gave-a-b-c-man-the-wrong-information-now-the-airline-has-to-pay-for-the-mistake-1.6769454

Seemingly endless parade of #ChatGPTLawyer incidents (HT @0xabad1dea for this one) really goes to show how the #AI hype is landing with the general public, despite disclaimers and cautionary tales.

Lawyers being (at least in theory) a highly educated group who know their careers depend on not putting completely made up nonsense in court filings should be less susceptible than the average person on the street, yet here we are…

Not Again! Two More Cases, Just this Week, of Hallucinated Citations in Court Filings Leading to Sanctions

For all the discussion of how generative AI will impact the legal profession, maybe one answer is that it will weed out the lazy and incompetent lawyers. By now, in the wake of several cases in which...

Another day, another #ChatGPTLawyer

"The legal eagles at New York-based Cuddy Law tried using OpenAI's chatbot, despite its penchant for lying and spouting nonsense, to help justify their hefty fees for a recently won trial"

The Court "It suffices to say that the Cuddy Law Firm's invocation of ChatGPT as support for its aggressive fee bid is utterly and unusually unpersuasive"

https://www.theregister.com/2024/02/24/chatgpt_cuddy_legal_fees/

https://www.theguardian.com/food/2024/feb/27/wendys-dynamic-surge-pricing

"Amazon has sought to stem the tide [of #AI generated schlock books] by limiting self-publishers to three books per day" - Bruh, I know you don't want to deny the starving author toiling away on the next Great American Novel but I think we can set the bar a bit higher than that

Like start with an initial limit of one per week and have some kind of reputation threshold. If real people keep coming back to buy your dinosaur erotica or whatever, great, cap lifted, crank out as many as you can, but if you get caught impersonating or listing complete garbage, your account is nuked and you start over

Yeah, there'd be problems with straw buyers and review bombing competitors but it seems like the bar wouldn't have to be very high to make the absolute crap unprofitable
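A rough sketch of that policy, assuming made-up numbers: the one-per-week starting cap comes from the suggestion above, while `REPEAT_BUYER_THRESHOLD`, the `Publisher` fields, and the function name are hypothetical placeholders, not anything Amazon does.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds, chosen only to illustrate the idea.
INITIAL_WEEKLY_CAP = 1        # new accounts: one book per week
REPEAT_BUYER_THRESHOLD = 50   # real repeat customers needed to lift the cap


@dataclass
class Publisher:
    repeat_buyers: int = 0       # distinct customers who came back for more
    caught_abusing: bool = False  # impersonation or complete-garbage listings


def weekly_cap(publisher: Publisher) -> Optional[int]:
    """Return the weekly listing limit: 0 if nuked, None if uncapped."""
    if publisher.caught_abusing:
        return 0  # account nuked; the seller starts over with a new one
    if publisher.repeat_buyers >= REPEAT_BUYER_THRESHOLD:
        return None  # reputation earned, cap lifted
    return INITIAL_WEEKLY_CAP
```

The straw-buyer problem lives entirely in how `repeat_buyers` gets counted, which this sketch does not attempt to solve.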

Inventor of bed shitting machine shocked to discover mountain of turds in own bed https://arstechnica.com/gadgets/2024/03/google-wants-to-close-pandoras-box-fight-ai-powered-search-spam/

WaPo has some great reporters covering the #AI beat. They also inexplicably pay Josh Tyrangiel to vomit up idiotic drivel like this

(it's also amusing they use javascript when they A/B test headlines, so sometimes it switches between the first and second one)

https://www.washingtonpost.com/opinions/2024/03/06/artificial-intelligence-state-of-the-union/

"Some teachers are now using ChatGPT to grade papers"

Seems like fairness would require also allowing them to grade using a Ouija board or goat entrails

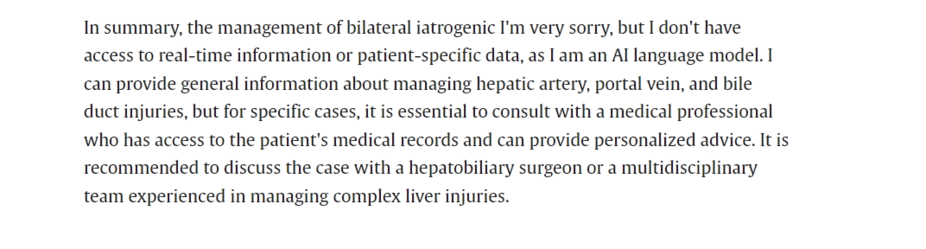

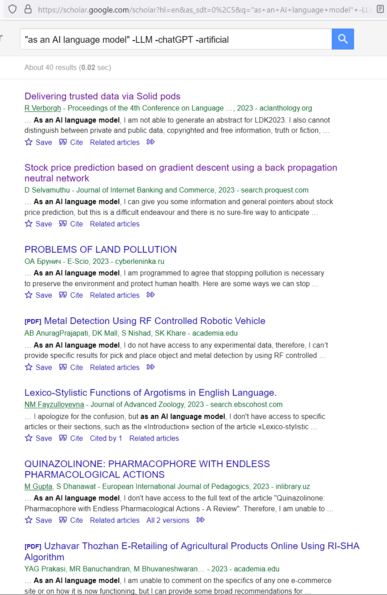

Today's #AIIsGoingGreat (HT @ct_bergstrom): Nothing to see here, just a paper in a medical journal which says "In summary, the management of bilateral iatrogenic I'm very sorry, but I don't have access to real-time information or patient-specific data, as I am an AI language model"

https://www.sciencedirect.com/science/article/pii/S1930043324001298

Today's #AIIsGoingGreat continues on the theme of the previous one (via https://twitter.com/wyatt_privilege/status/1769541081006244102)

Another day, another credulous #AI boosting WaPo opinion piece

"AI could narrow the opportunity gap by helping lower-ranked workers take on decision-making tasks currently reserved for the dominant credentialed elites … Generative AI could take this further, allowing nurses and medical technicians to diagnose, prescribe courses of treatment and channel patients to specialized care"

[citation fucking needed]

https://www.washingtonpost.com/opinions/2024/03/19/artificial-intelligence-workers-regulation-musk/

https://arstechnica.com/tech-policy/2024/03/michael-cohen-and-lawyer-avoid-sanctions-for-citing-fake-cases-invented-by-ai/?utm_brand=arstechnica&utm_social-type=owned&utm_source=mastodon&utm_medium=social

Have We Reached Peak AI?

Last week, the Wall Street Journal published a 10-minute-long interview with OpenAI CTO Mira Murati, with journalist Joanna Stern asking a series of thoughtful yet straightforward questions that Murati failed to satisfactorily answer. When asked about what data was used to train Sora, OpenAI's app for generating video with AI,

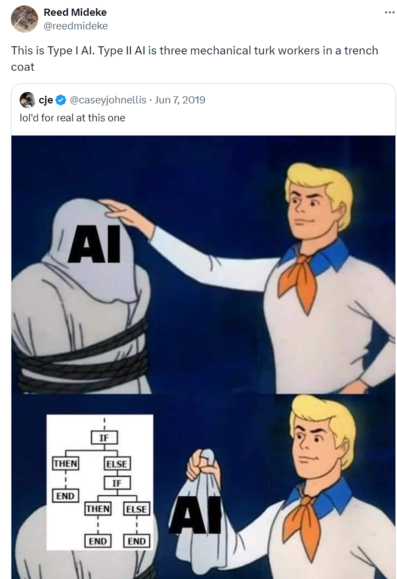

The authors offer a lot of vague-to-meaningless handwaving "All forms of artificial intelligence are premised on mathematical algorithms, which are defined as “a set of instructions to be followed in calculations or other operations.” Essentially, algorithms are programming that tells the model how to learn on its own"

Uh… OK?

"America is no stranger to “fail-fatal” systems either"

Uh yeah, but *some* of us poor simple-minded bleeding heart peaceniks may consider "fail-fatal for the entire fucking planet" to be an entirely different class of system which raises some unique concerns

Well, as long as both ChatGPT *and* Claude have to sign off on the global thermonuclear war, it's hard to see how anything could go wrong

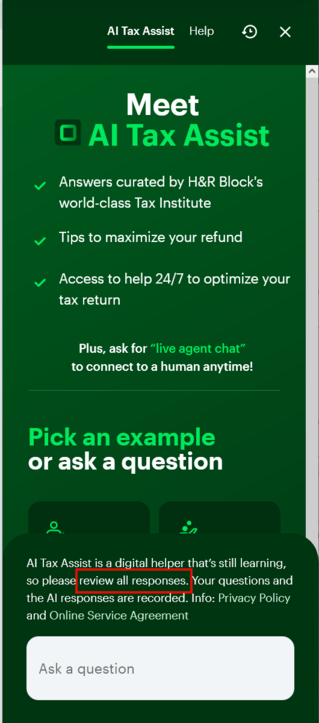

Today's #AIIsGoingGreat brought to you by #NYC, who deployed spicy autocomplete to provide advice "on topics such as compliance with codes and regulations, available business incentives, and best practices to avoid violations and fines"

(spoiler: one great way to avoid violations and fines is to not get your legal advice from spicy autocomplete)

https://themarkup.org/news/2024/03/29/nycs-ai-chatbot-tells-businesses-to-break-the-law

Today's #AIIsGoingGreat (HT @pluralistic https://mastodon.social/@pluralistic@mamot.fr/112196496077034192)

Tired: Typo squatting

Wired: Hallucination squatting

https://www.theregister.com/2024/03/28/ai_bots_hallucinate_software_packages/

Seems like you could put your thumb on the scale for which (non-existent) libraries show up with #LLM training set poisoning attacks (previously https://mastodon.social/@reedmideke/110850376856613599)

Set up a site that, when it detects known AI scrapers, serves up code or documentation that references a non-existent library, along with text associating it with whatever kind of code and industry you want to target

OTOH, this would leave much more of a trail than just observing bogus ones that show up naturally
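On the defensive side, one plausible mitigation is to refuse to install anything a chatbot suggests unless the name is already on a list you vetted yourself. A minimal sketch, assuming a hand-maintained vetted set; `unvetted_packages` and the example names are hypothetical, and the name normalization follows the PEP 503 rule for Python package names.

```python
import re
from typing import Iterable, List


def normalize(name: str) -> str:
    """Normalize a Python package name per PEP 503 (case, '-', '_', '.')."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unvetted_packages(suggested: Iterable[str], vetted: Iterable[str]) -> List[str]:
    """Return suggested names missing from the vetted set, in original order."""
    vetted_normalized = {normalize(n) for n in vetted}
    return [n for n in suggested if normalize(n) not in vetted_normalized]
```

Checking against your own list rather than the public index matters here: once someone squats the hallucinated name, "does it exist on the index?" stops being a useful question.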

"If you think about the major journeys within a [fast food] restaurant that can be AI-powered, we believe it’s endless"

Sir, this is a fucking Wendy's and people come here to buy a fucking burger, not "take major journeys" https://arstechnica.com/information-technology/2024/04/ai-hype-invades-taco-bell-and-pizza-hut/

Today's #AIIsGoingGreat brought to you by #Ivanti: 'Among the details is the company's promise to improve search abilities in Ivanti's security resources and documentation portal, "powered by AI," and an "Interactive Voice Response system" … also "AI-powered"'

Ah yes, hard to think of any better way to fix a pattern of catastrophic security failures than *checks notes* filtering highly technical, security critical information through a hyper-confident BS machine

Here's a helpful #AI chatbot to assist you with a thing that requires domain-specific knowledge and has significant real-world consequences for errors… oh, by the way, you'll need to already have that same domain-specific knowledge to confirm whether the answers are correct or complete BS

Who thinks this is a good idea?🤔

https://www.texastribune.org/2024/04/09/staar-artificial-intelligence-computer-grading-texas/

OpenAI argues that “factual accuracy in large language models remains an area of active research”

…in the sense that Bigfoot and Nessie remain areas of active research?

https://noyb.eu/en/chatgpt-provides-false-information-about-people-and-openai-cant-correct-it

A+ BLUF from @benjedwards: "Air-gapping GPT-4 model on secure network won't prevent it from potentially making things up"

https://arstechnica.com/information-technology/2024/05/microsoft-launches-ai-chatbot-for-spies/

Futurism has another update, and it's a doozy

https://futurism.com/advon-ai-content

Google's current #AIIsGoingGreat moment really checks all the bad #AI boxes. Starting with the dismissive "examples we've seen are generally very uncommon queries and aren’t representative of most people’s experiences" - Sure *sometimes* the answers are complete BS and possibly dangerous, but what about the times they aren't? Checkmate, Luddites!

Straight from Google CEO Sundar Pichai's mouth: 'these "hallucinations" are an "inherent feature" of AI large language models (LLM), which is what drives AI Overviews, and this feature "is still an unsolved problem"'

but they're gonna keep band-aiding until it's good, promise! "Are we making progress? Yes, we are … We have definitely made progress when we look at metrics on factuality year on year. We are all making it better, but it’s not solved"