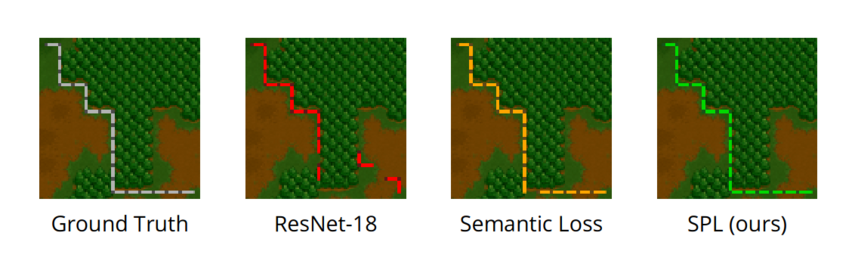

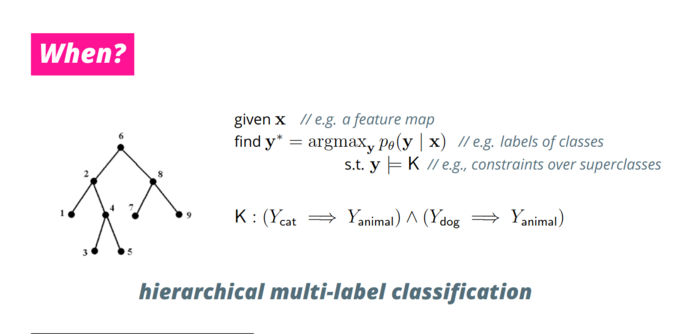

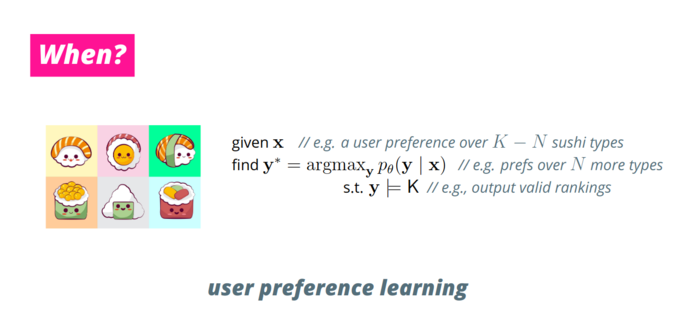

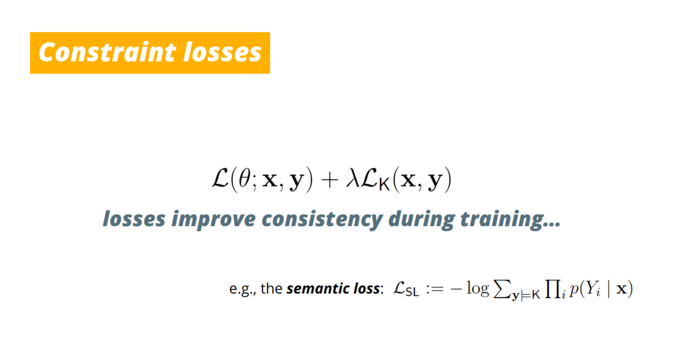

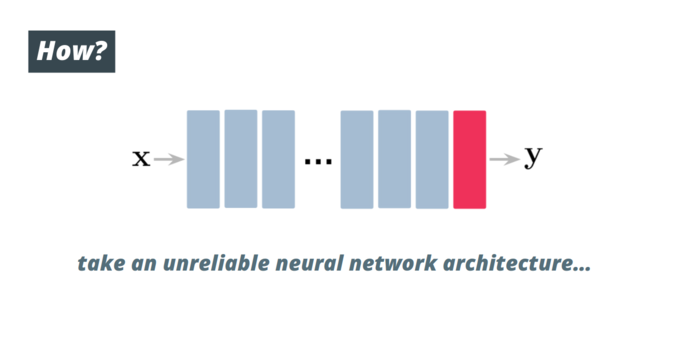

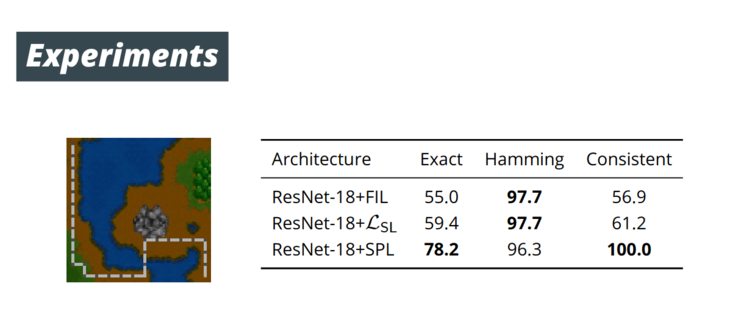

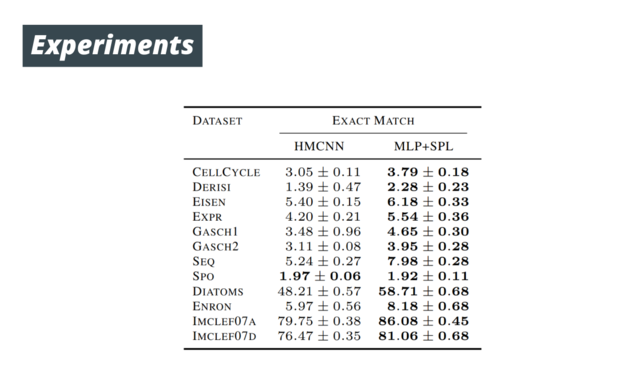

#Neural nets struggle to #guarantee that their predictions always conform to our prior knowledge!

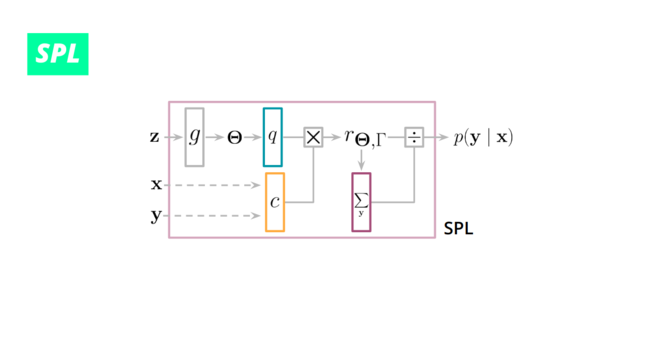

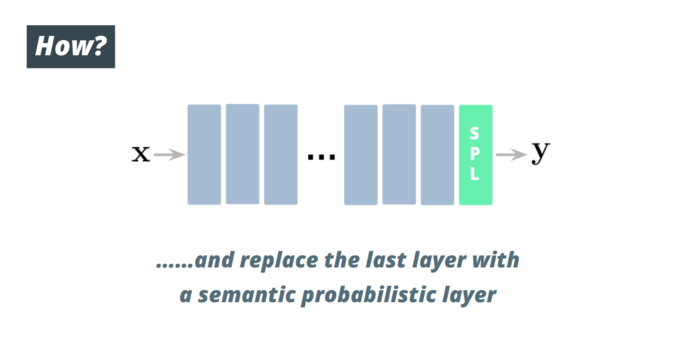

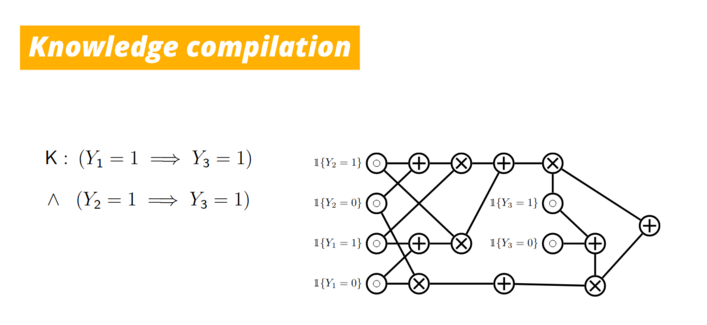

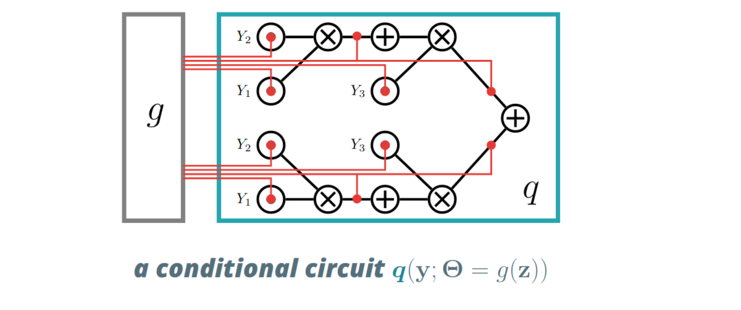

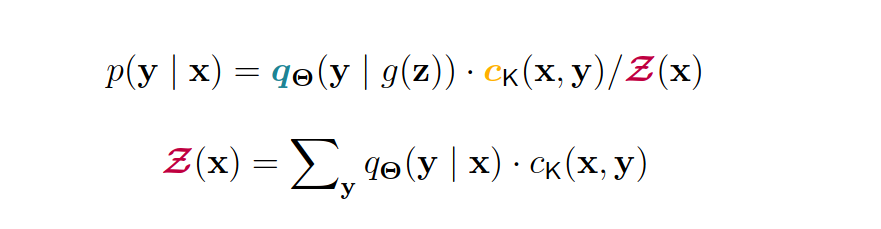

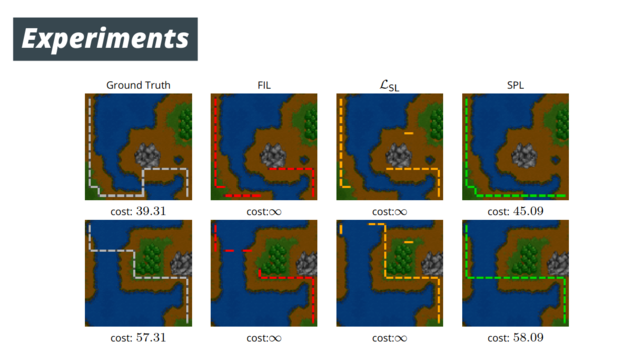

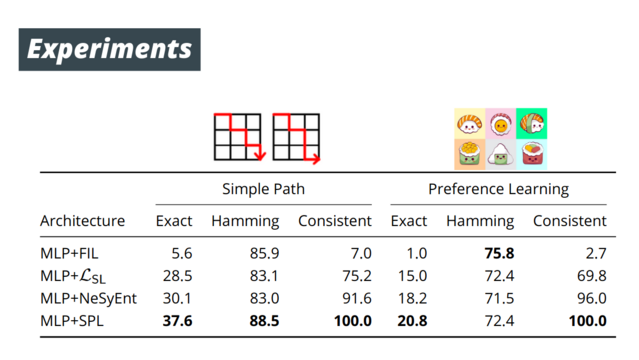

We devise a drop-in replacement layer that injects given #symbolic #constraints while retaining #exact and #efficient gradient-based optimization in our #NeurIPS paper:

https://openreview.net/forum?id=o-mxIWAY1T8

1/🧵
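
To make the idea concrete, here is a toy sketch of what "always conforming to a constraint" can look like for a multi-label output. It is an illustration under stated assumptions, not the layer from the paper. It assumes a factorized model over binary labels and a made-up rule ("label 0 implies label 1"), and it enumerates every label vector by brute force, which only works for a handful of labels. The names `constraint` and `constrained_distribution` are hypothetical.

```python
import itertools
import math


def constraint(y):
    # Hypothetical prior knowledge: label 0 (say "cat") implies label 1 ("animal").
    return (not y[0]) or bool(y[1])


def constrained_distribution(logits, constraint):
    """Distribution over binary label vectors with zero mass on violations."""
    n = len(logits)
    scores = {}
    for y in itertools.product([0, 1], repeat=n):
        if constraint(y):
            # Unnormalized log-score of a factorized Bernoulli model:
            # the sum of the logits of the labels that are switched on.
            scores[y] = sum(l for l, bit in zip(logits, y) if bit)
    # Normalize over the valid assignments only (log-sum-exp for stability).
    m = max(scores.values())
    log_z = m + math.log(sum(math.exp(s - m) for s in scores.values()))
    return {y: math.exp(s - log_z) for y, s in scores.items()}


# Thresholding each logit on its own would predict (1, 0, 1),
# which breaks the rule because label 0 is on while label 1 is off.
logits = [2.0, -1.0, 0.5]
probs = constrained_distribution(logits, constraint)
prediction = max(probs, key=probs.get)
```

Because violating assignments never enter the normalizer, no probability mass can land on them, so the most likely output is always consistent with the rule. The exponential enumeration is the part a practical layer has to avoid.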