Calling backward frees the saved tensors, so you cannot run a second backward pass over the same graph.
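The post does not name a framework, so here is a toy, pure-Python sketch of the mechanism rather than any real library's implementation. The `Value` class, its `saved` field, and the `retain_graph` flag are all hypothetical names chosen for illustration. Each multiplication stores the input values it needs for its derivative, and the backward pass discards them once used unless asked to keep them.

```python
class Value:
    """Scalar node in a toy reverse-mode autodiff graph (illustrative only)."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self.parents = parents  # inputs of the op that produced this node
        self.saved = None       # input values kept for the backward pass

    def __mul__(self, other):
        out = Value(self.data * other.data, parents=(self, other))
        # d(a*b)/da = b and d(a*b)/db = a, so both inputs must be saved.
        out.saved = (self.data, other.data)
        return out

    def backward(self, retain_graph=False):
        # Collect nodes in topological order (inputs before outputs).
        order, seen = [], set()

        def visit(node):
            if id(node) in seen:
                return
            seen.add(id(node))
            for parent in node.parents:
                visit(parent)
            order.append(node)

        visit(self)
        # Intermediate gradients are recomputed on every pass;
        # leaf gradients accumulate across passes.
        for node in order:
            if node.parents:
                node.grad = 0.0
        self.grad = 1.0

        for node in reversed(order):
            if not node.parents:
                continue
            if node.saved is None:
                raise RuntimeError("saved values were freed by an earlier backward pass")
            a, b = node.saved
            left, right = node.parents
            left.grad += node.grad * b
            right.grad += node.grad * a
            if not retain_graph:
                node.saved = None  # free the buffers: the graph is now single-use


x = Value(3.0)
y = x * x
y.backward(retain_graph=True)  # saved values survive this pass
y.backward()                   # works once more, then frees them
try:
    y.backward()               # nothing left to differentiate with
    reused = True
except RuntimeError:
    reused = False
```

In this sketch the third call fails because the multiplication node no longer holds its inputs. Rebuilding the graph with a fresh forward pass, or keeping the buffers alive at the cost of memory, are the two ways around it.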

Build neural networks in Rust using matmul, gradients, and backpropagation.

RE: https://mathstodon.xyz/@albertcardona/115850375733218207

Cool to see a concrete alternative to #ReLU #backprop models that remains both interpretable and biologically grounded:

I am really proud to announce that our latest work, showing the advantages of our analog/digital #neuromorphic spiking neural network chips for complex biomedical applications, has just been published here: https://rdcu.be/ef5N0

It demonstrates that *small*, *highly variable*, and *low accuracy* #SNNs can indeed be useful, without having to resort to #backprop in large-scale #DNNs! 😉

I am personally fascinated by "adjoint optimization in CFD", e.g. people using autodiff on entire physical simulations to improve shapes of objects.

At the same time, it seems comparatively rarely used in industry, e.g. most design processes only perform forward simulation.

Does anyone here have an idea *why* that is? Boost for reach please...
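For readers unfamiliar with the idea, a minimal sketch of a discrete adjoint on a deliberately tiny "simulation" may help. Everything here is an assumption made for illustration: the model is explicit Euler on du/dt = -k·u rather than a CFD solver, the decay rate `k` stands in for a design parameter such as a shape variable, and the function names are hypothetical. One forward sweep stores the trajectory, and one backward sweep yields the gradient of the final state with respect to `k`.

```python
def simulate(k, u0=1.0, dt=0.01, steps=100):
    """Explicit Euler for du/dt = -k*u; returns the full trajectory."""
    us = [u0]
    for _ in range(steps):
        us.append(us[-1] * (1.0 - k * dt))
    return us


def objective_and_gradient(k, u0=1.0, dt=0.01, steps=100):
    """Return J = u_N and dJ/dk using one forward and one adjoint sweep."""
    us = simulate(k, u0, dt, steps)
    lam = 1.0   # adjoint variable, starts as dJ/du_N
    grad = 0.0
    for n in reversed(range(steps)):
        # Direct dependence of u_{n+1} = u_n * (1 - k*dt) on k.
        grad += lam * (-dt * us[n])
        # Propagate the sensitivity from u_{n+1} back to u_n.
        lam *= (1.0 - k * dt)
    return us[-1], grad


k = 2.0
J, dJ_dk = objective_and_gradient(k)

# Sanity check against a central finite difference.
eps = 1e-6
fd = (simulate(k + eps)[-1] - simulate(k - eps)[-1]) / (2 * eps)
```

The backward sweep costs about as much as the forward one regardless of how many parameters there are, which is the usual argument for adjoints over finite differences. It also needs the stored trajectory, and in a real solver that memory and implementation burden is considerable.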

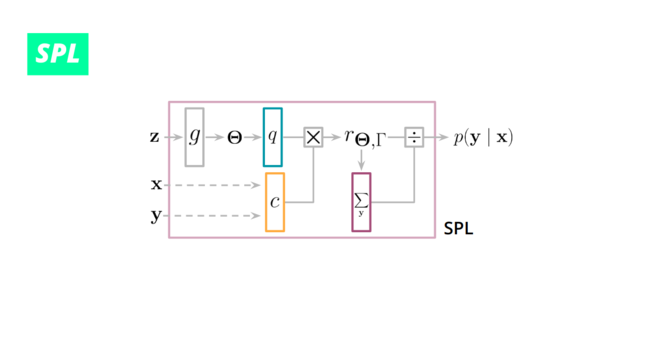

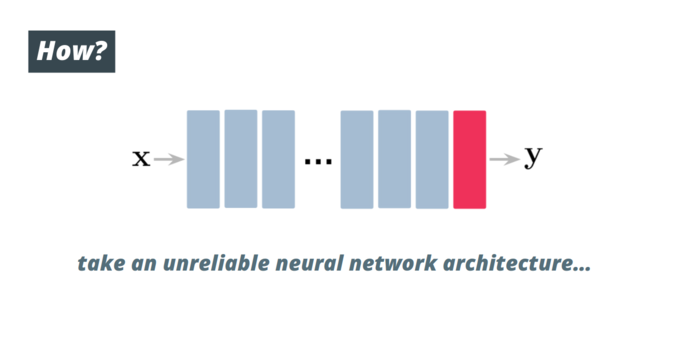

Our #Semantic #Probabilistic #Layers #SPLs instead guarantee that predictions satisfy the injected constraints 100% of the time!

They can be readily used in deep nets as they can be trained by #backprop and #maximum #likelihood #estimation.

4/

🧠
