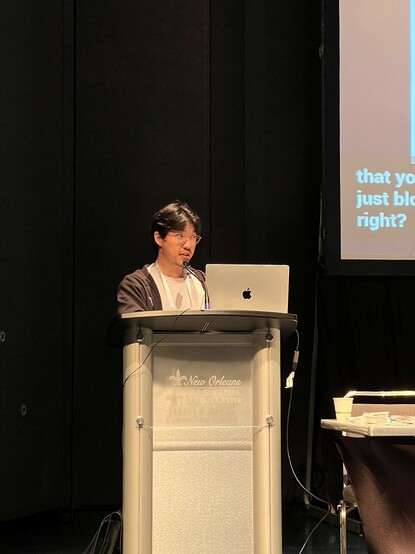

Wonderfully insightful talk by Jimmy Ba in our “Has it Trained Yet” Workshop (hity-workshop.github.io/NeurIPS2022/).

Find us in Theatre B now for the next talk, by Susan Zhang.

And you can still do our questionnaire at http://forms.gle/oxs9nUHzEkJPcZxH7!

HITY Workshop Poll

With this survey, we want to take the pulse of how neural networks are currently being trained. Please answer the following questions based on how you typically train and what your setup usually looks like. The aggregated results of this poll will be presented at the HITY workshop at NeurIPS 2022.