Wordstat API в Yandex Cloud Search API: разбор endpoints, подводные камни, минимальный Python wrapper (2026)

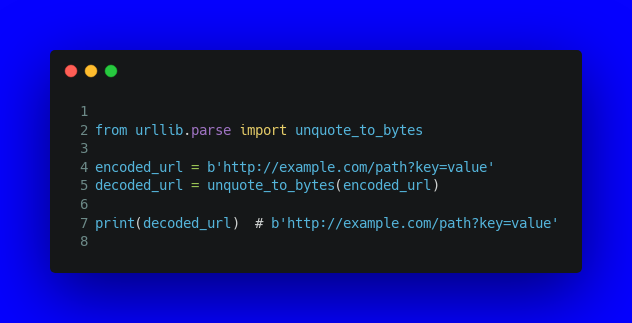

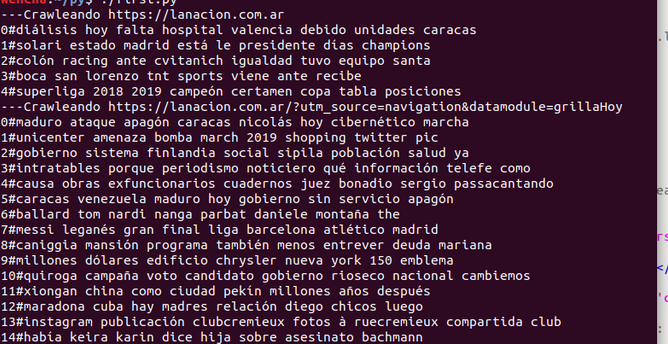

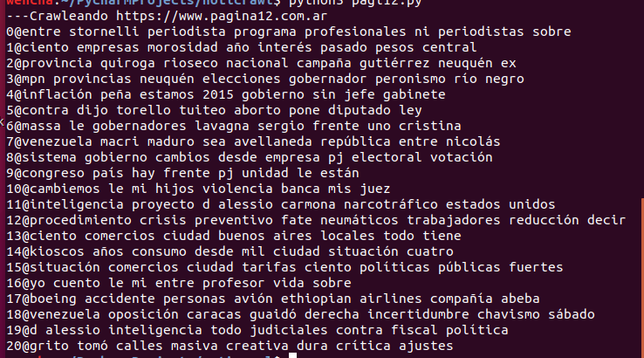

Понадобилась мне семантика - не в смысле «один раз глянуть Wordstat в браузере», а программно, чтобы прогонять по 50-100 фраз в день и складывать результаты в свою базу. Контекст: веду контент-маркетинг для агентства разработки чат-ботов BotKraft, статьи под Яндекс Нейро. Веб-Wordstat для такого объёма не вариант - копировать вручную из таблички полдня. Direct API - слишком дорогой вход: нужен рекламный аккаунт, отдельный OAuth, у меня этого не было и заводить ради одного метода не хотелось. Случайно полез в новые сервисы Yandex Cloud AI Studio (там сейчас живёт YandexGPT) и обнаружил, что Wordstat теперь есть в Search API v2 - отдельным сервисом без зависимости от Direct. Доступ - обычный API-ключ из AI Studio, тот же что и для YandexGPT. По сути в один клик получаешь ещё и доступ к семантике. Подключал, по дороге собрал коллекцию граблей. Этим и поделюсь.

https://habr.com/ru/articles/1030276/

#wordstat #yandex_cloud #search_api #ai_studio #python #seo #urllib