Ghost Debugging in the Age of AI: Why Your Code is Fine, but Your Toolchain is AI Slop


Big Tech is currently incinerating billions of dollars in a desperate, scorched-earth race to save a few million in labor costs by replacing seasoned engineers with AI. The reality on the ground is a visceral nightmare of “High-Fidelity Slop” that forces you to spend more time debugging the toolchain than writing actual code.

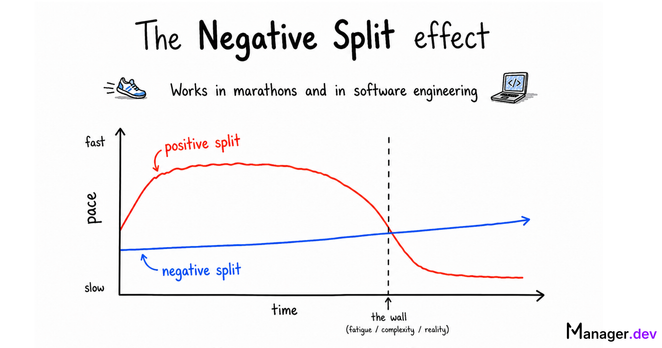

The modern developer’s greatest enemy isn’t a lack of skill; it’s a feedback loop of automated hallucinations and aggressive caching. You spend three hours gutting your logic and questioning your sanity only to realize your code was perfect the entire time. The failure was in a “smart” toolchain that decided, in its automated arrogance, to serve you a zombie version of your work. We are paying a “Slop Tax” for tools that are buggy, error-prone, and fundamentally insecure.

To survive this era of corporate psychosis, you have to understand three hard truths: the lie of toolchain abstraction, the rot of agentic maintenance, and the absolute necessity of the manual override.

The Abstraction Lie: When “Smart” Toolchains Gaslight You

The first protocol of any lead architect is to ensure that the feedback loop between the editor and the execution environment is pure. If you change a line of code, that change must manifest. But in the age of modern enterprise toolchains, that contract has been shredded. These systems were built for massive, sprawling monorepos where thousands of developers push code simultaneously. For that specific, niche environment, aggressive incremental caching makes sense. For the man in the trenches trying to ship a specific feature, it is a catastrophic layer of unnecessary complexity.

When you write a function, you are performing surgery. When the toolchain decides to “optimize” your build by skipping the re-transpile of a file because its change detection (typically a timestamp or content-hash comparison) concluded that nothing meaningful had changed, it is effectively lying to you. It tells you the build is successful, but it serves a ghost: the version of the code from three saves ago. We’ve allowed ourselves to be pushed into black boxes that are so “smart” they’ve become stupid. A lead developer knows exactly what his compiler is doing. If your toolchain isn’t transparent, it isn’t a tool; it’s an obstacle.
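One cheap way to catch a ghost build is a canary: put a unique string in the source, rebuild, and check whether that string reaches the output. The sketch below covers only the checking half. The function name, the `dist` folder, and the `*.js` pattern are illustrative assumptions, not part of any particular toolchain. The marker should sit inside a string literal that is actually used, because a minifier may strip comments and dead code.

```python
from pathlib import Path


def outputs_containing(marker, out_dir, pattern="*.js"):
    """Return the built files under out_dir whose text contains marker.

    An empty result after a "successful" rebuild suggests the output
    was not regenerated from the current source.
    """
    hits = []
    for path in sorted(Path(out_dir).rglob(pattern)):
        # errors="ignore" keeps the scan going if a bundle has odd bytes.
        text = path.read_text(encoding="utf-8", errors="ignore")
        if marker in text:
            hits.append(path)
    return hits


if __name__ == "__main__":
    # Hypothetical marker and output folder; change both to fit your project.
    found = outputs_containing("BUILD-CANARY-123", "dist")
    print("marker found in:", [str(p) for p in found])
```

If the list comes back empty after a rebuild that claimed success, the suspect is the pipeline between your editor and the output folder, not your logic.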

Agentic Rot: Why Your Tools are Maintained by Machines

We have entered the era of Agentic Rot, where the tools we use are being maintained by other tools. Modern build engines aren’t the hand-crafted work of master architects anymore; they are repositories where AI agents are constantly opening pull requests to update dependencies and “refactor” logic. This creates a terrifying lack of accountability. When an AI updates a library version or a shared build configuration (a “Rig”), it doesn’t care that it just broke the file-watcher for every developer on the team.

This is why your toolchain is lying to you. The ivory towers have decided that “automation” is more valuable than “transparency.” They’ve optimized for a world where the build server never stops, even if that means the local developer can never start. As a lead architect, you have to recognize that this is a direct attack on your technical discipline. You cannot let a machine’s hallucination about how a framework should be structured dictate your project’s timeline. You have to be the one who understands the protocol well enough to know when the documentation is stale and the tool is wrong.

The Protocol of the Hard Reload: Reclaiming Your Integrity

There is a direct correlation between the integrity of your code and the integrity of your character. In a world of AI slop, it is incredibly easy to be “good enough.” It is easy to ignore the warning signs, see that the build “mostly” works, and move on. But that is how technical debt begins. That is how you end up with a deployment that is missing critical logic because you didn’t have the discipline to verify the source.

A lead architect doesn’t surrender to the machine. If the code isn’t updating, you don’t keep clicking refresh; you rip the system open. You go into the hidden folders, you check the temporary artifacts, and you find the stale file that is poisoning your build. This level of aggression toward bad tooling is what separates the veterans from the casualties. You have to be the manual override. Integrity means ensuring the execution matches the source—every single time.
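Hunting for the stale file can be partly automated. The following is a minimal sketch that assumes each source file maps one-to-one to an output file with the same relative path and a different extension, for example `src/foo.ts` to `lib/foo.js`. That layout, the folder names, and the function name are assumptions for illustration. Bundlers that merge many files into one output will not fit this mapping.

```python
from pathlib import Path


def find_stale_outputs(src_dir, out_dir, src_ext=".ts", out_ext=".js"):
    """List (source, output, reason) for outputs missing or older than source.

    Assumes a one-to-one mapping between source and output paths.
    """
    src_root = Path(src_dir)
    out_root = Path(out_dir)
    stale = []
    for src in sorted(src_root.rglob(f"*{src_ext}")):
        # Mirror the relative path into the output tree, swapping the extension.
        out = out_root / src.relative_to(src_root).with_suffix(out_ext)
        if not out.exists():
            stale.append((src, out, "missing"))
        elif out.stat().st_mtime_ns < src.stat().st_mtime_ns:
            stale.append((src, out, "older than source"))
    return stale


if __name__ == "__main__":
    # Hypothetical folders; adjust to your project layout.
    for src, out, reason in find_stale_outputs("src", "lib"):
        print(f"{out} is {reason} (source: {src})")
```

Timestamps are only a first-pass signal. An output can carry a fresh modification time and still hold old content if a cache copied it into place, so a clean result here does not prove the build is honest.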

Stop Trusting, Start Verifying

The reality of 2026 is that Big Tech is spending billions to save millions, and they’ve decided your productivity is an acceptable sacrifice. They’ve built a world where the code looks good, but the infrastructure is a buggy mess. You can either be a victim of this system or the master of it.

The next time you’re three hours deep into a bug that shouldn’t exist, stop. Don’t look at your code. Look at your toolchain. Kill the process. Wipe the cache. Burn the build folder to the ground. Force the machine to confront the reality of the logic you actually wrote. This isn’t just a technical fix; it’s a statement of intent. It’s you reclaiming your role as the architect. Build with discipline. Deploy with skepticism. And never, ever let the slop win.
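The "wipe the cache, burn the build folder" step can be scripted so it takes seconds instead of becoming a ritual. This is a sketch under stated assumptions: the directory names in `DEFAULT_DIRS` are guesses at common build and cache folders, not a list taken from any specific toolchain, and the function name is hypothetical. It defaults to a dry run because in some projects a folder like `lib` holds real source. Stopping the running watcher or dev server is left to you.

```python
import shutil
from pathlib import Path

# Assumed folder names; check what your own toolchain actually writes.
DEFAULT_DIRS = ("dist", "lib", "temp", ".cache")


def wipe_build_dirs(project_root, dir_names=DEFAULT_DIRS, dry_run=True):
    """Delete the named directories directly under project_root.

    Returns the directories that were removed, or that would be removed
    when dry_run is True. Symlinks are skipped rather than followed.
    """
    root = Path(project_root)
    removed = []
    for name in dir_names:
        target = root / name
        if target.is_dir() and not target.is_symlink():
            if not dry_run:
                shutil.rmtree(target)
            removed.append(target)
    return removed


if __name__ == "__main__":
    # First pass only reports; rerun with dry_run=False to actually delete.
    for path in wipe_build_dirs("."):
        print("would remove:", path)
```

Run it once as a dry run, read the list, and only then pass `dry_run=False`. Skepticism applies to your own cleanup script too.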

Author’s Note: This post was written in the immediate aftermath of a three-hour debugging gauntlet. A critical piece of logic had been correctly refactored and fixed, yet the bug persisted in the output with haunting consistency. After multiple IDE shutdowns, full system restarts, and repeated rebuilds, the culprit was finally unmasked: the toolchain was aggressively caching an old version of the codebase, refusing to acknowledge the new reality of the source. This is what happens when tools stop serving the developer and start serving the “optimization” algorithm.

Investigating how these modern toolchains are maintained revealed a sobering reality. Many of these repositories are now “curated” by AI-driven development workflows. High-volume contributions in these ecosystems are increasingly handled by automated agents that generate pull requests for everything from security patches to dependency management. When a tool is “authored” by an engine that prioritizes patterns over local execution context, you get a build system that looks impressive on paper but gaslights you in practice.


D. Bryan King

Disclaimer:

I love sharing what I’m learning, but please keep in mind that everything I write here—including this post—is just my personal take. These are my own opinions based on my research and my understanding of things at the time I’m writing them. Since life moves way too fast and things change quickly, please use your own best judgment and consult the experts for your specific situations!