New Article in R&D Management: »Anticipating Knowledge Applicability in Open Science Through Recycling, Mimicking, and Shortcutting«

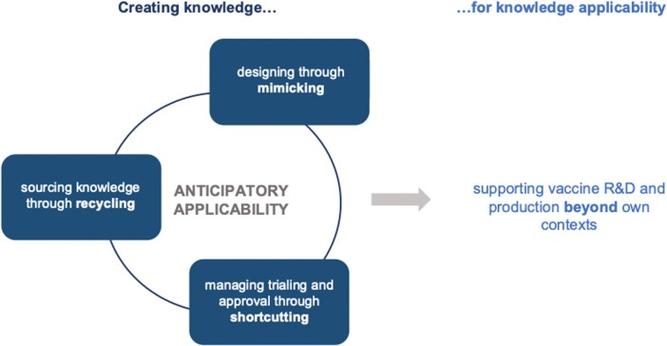

Model of anticipatory applicability throughout the R&D process.

During the Covid-19 pandemic, several ventures tried to develop vaccines that are not protected by patents and could be quickly and easily distributed around the globe. In the course of the research project “Organizing Creativity under Regulatory Uncertainty: Alternative Approaches to Intellectual Property” (funded by the Austrian Science Fund FWF and the German Research Foundation DFG), we collected data on such alternative, more open approaches to pharmaceutical R&D.

I am very pleased that a paper comparing five such cases has now been published in the journal R&D Management. The article, entitled “Anticipating Knowledge Applicability in Open Science Through Recycling, Mimicking, and Shortcutting”, is co-authored with my former PhD student Milena Leybold and long-term collaborators Konstantin Hondros and Sigrid Quack. Check out the abstract below:

Open science literature scrutinizes how organizations provide access to knowledge. Yet, much less is known about how organizations pursuing open science for societal impact anticipate knowledge applicability—that shared knowledge is reusable for other organizations and individuals, and enables open social innovation. Mobilizing a practice perspective on open science, we investigate how organizations create knowledge that is applicable for participation and further use. Focusing on vaccine research and development during the COVID-19 pandemic, we zoom in on five organizations that pursue open science with the goal of making vaccines available worldwide. We identify three practices of creating knowledge that the organizations employ when doing open science. They recycle accessible knowledge, mimic vaccine designs, and shortcut parts of the approval processes. Beyond facilitating accessibility, these practices create knowledge to support knowledge applicability: they constitute a relation between knowledge creation and sharing that anticipates multiple contexts for the reuse of knowledge. The paper argues that the resulting ‘anticipatory applicability’ leverages open science for societal impact.

The article is available as an open access full text at R&D Management. In addition, I have created the obligatory 1paper1meme below:

#anticipatoryApplicability #mimicking #openSocialInnovation #RDManagement #recycling #shortcutting