Are AI overviews quietly breaking our research discovery ecosystem? If zero-click readership becomes the norm, who controls visibility, what does impact even measure, and can we still claim to be “engaging” with research at all?

#AI #scholcomm #research

They’re not! Discover how preprints are findable, citable, and transforming scholarly communication in the ASAPbio Myth Busting whiteboard video. 🎬

🎥 Watch here → https://www.youtube.com/watch?v=LzJcELG3WqI

#Preprint #OpenScience #ScholComm #ScientificPublishing

Myth 3: Preprints are not discoverable or citable

Update. Related:

"Opening Pandora's box: Paper mills in conference proceedings."

https://arxiv.org/abs/2604.22458

From the abstract: "This study aims to identify papers in conference proceedings whose titles have been offered for sale on social media platforms. We collected more than 4,000 unique publication offers from more than 200 social media channels and used semi-automated methods along with human assessment to match offers with papers published in IEEE conference proceedings. We identified 1,720 papers in 286 IEEE conference proceedings, accounting for up to 23.51% of an individual conference. These problematic papers are co-authored by more than 6,500 researchers from over 3,500 affiliations in 55 countries."

Opening Pandora's box: Paper mills in conference proceedings

Paper mills are a growing threat to the integrity of science, yet their penetration in conference proceedings remains underexplored despite conferences being more important than journals in some scientific subfields. This study aims to identify papers in conference proceedings whose titles have been offered for sale on social media platforms. We collected more than 4,000 unique publication offers from more than 200 social media channels and used semi-automated methods along with human assessment to match offers with papers published in IEEE conference proceedings. We identified 1,720 papers in 286 IEEE conference proceedings, accounting for up to 23.51% of an individual conference. These problematic papers are co-authored by more than 6,500 researchers from over 3,500 affiliations in 55 countries. The identified papers demonstrate collaboration anomalies, high diversity of affiliations per paper, citation manipulation, a predominance of six-author papers, and content-based irregularities. Our findings show that paper mills are a large, organized, and often public market that commercializes scientific misconduct, not limited to papers, but infiltrating multiple parts of the research ecosystem.

Update. You can now see the preprint itself, on #arXiv.

https://arxiv.org/abs/2604.24576

From the abstract: "We assemble BuyTheBy, a large, annotated dataset of timestamped, text-based paper mill advertisements from seven businesses operating out of seven different countries. The dataset consists of 18,710 individual advertisements, of which 15,839 have prices listed. Among these there are 20,598 positions listed as for sale on 5,567 unique products in 14 different product categories with 51,812 timestamped price data points."

BuyTheBy: A dataset of 18,710 text-based paper mill advertisements with 51,812 timestamped prices

The study of paper mills and similar businesses operating in the market for academic and education fraud services is frustrated by the lack of market price data on their various offerings. Here, we assemble BuyTheBy, a large, annotated dataset of timestamped, text-based paper mill advertisements from seven businesses operating out of seven different countries. The dataset consists of 18,710 individual advertisements, of which 15,839 have prices listed. Among these there are 20,598 positions listed as for sale on 5,567 unique products in 14 different product categories with 51,812 timestamped price data points. We perform elementary analysis of this dataset to demonstrate its utility for quantitative understanding of markets for academic fraud services and suggest future use cases.

"Thousands of shady ads sell paper authorship for cash, large-scale investigation finds."

https://www.science.org/content/article/thousands-shady-ads-sell-paper-authorship-cash-large-scale-investigation-finds

PS: This article from _Science_ reports important news about paper mills. But apart from that, note that it draws from an #arXiv preprint that hasn't been released yet. It's a preprint preprint, and from _Science_. A nice example of the ongoing #ScholComm transformation.

Update. "Many [non-native writers of English] cannot confidently judge whether their original expression is better than the AI's suggestion. Some do not even suspect that their original phrasing might carry nuance worth preserving… Thus, AI does not affect all writers equally. Native speakers tend to use AI to clarify their writing while preserving their voice. Non-native speakers often use AI in a fundamentally different way: their voice is replaced by a standardized, fluent, but impersonal tone. In many cases, they do not even notice that this has happened. This difference is unfair, yet largely invisible."

https://onlinelibrary.wiley.com/doi/10.1111/jep.70455

Update. The passage in the #Trump budget criticizing expensive #subscriptions and #APCs (previous post, this thread) triggered a debate in the House of Representatives.

"US lawmakers intensify scrutiny of scientific-publishing practices."

https://www.nature.com/articles/d41586-026-01251-y

"From ‘paper mills’ that sell authorships on fake or low-quality research papers to the costs associated with open-access publishing, US lawmakers are paying increasing attention to widely debated issues in scientific publishing. In a rare show of unity, members of the US House of Representatives from both sides of the political aisle agreed at a hearing that these issues deserve more attention from government — but there was less unity on what the solutions should be."

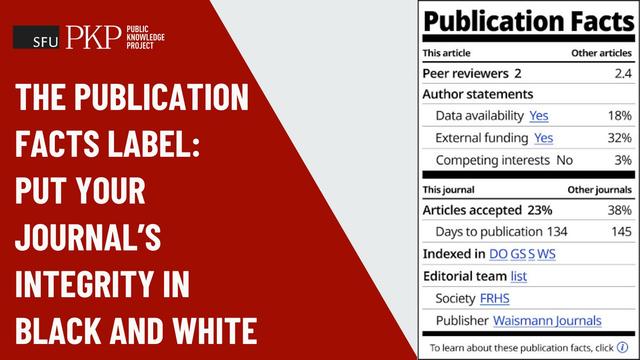

✅ In PKP's "Archipelago" - "The Publication Facts Label: Put your journal's integrity in black and white"

"The #PFLabel will inspire #editors and #authors to comply with #PublishingStandards... (and) for editors, keep an eye on days-to-publication and pursue #indexing opportunities...

The first 500+ journals have it in place and the number grows every day. The more widely it is used... the better it serves as a trust marker..."

Update. I'm very glad to see the #DOAJ endorse the #PKP "Publication Facts" label.

https://blog.doaj.org/2026/04/22/introducing-the-publication-facts-label-another-tool-in-your-research-integrity-toolkit/

I hope this nudges more publishers to use the labels. In any case, it should lead more readers to expect publishers to use them.

Do Large Language Models know Which Published Articles have been Retracted?

Large Language Models (LLMs) can be helpful for literature search and summarisation, but retracted articles can confuse them. This article asks three open weights (offline) LLMs whether 161 high profile retracted articles had been retracted, performing a similar check for a benchmark multidisciplinary set of 34,070 non-retracted articles. Based on titles and abstracts, in over 80% of cases the LLMs claimed that a retracted article had not been retracted (GPT OSS 120B: 82%; Gemma 3 27B: 84%; DeepSeek R1 72B: 88%). The reasons given for a correct retraction declaration were often wrong, even if detailed. This confirms that LLMs have little ability to distinguish between valid and retracted studies, unless they are allowed to, and do, check online. For the benchmark test, there were only 55 false retraction claims from 34,070 non-retracted full text articles, and 28 false claims when only the title and abstract were entered, suggesting that there is only a small chance that LLMs discount valid studies. When retractions are erroneously claimed, this does not seem to be due to mistakes in the article. Overall, the results give new reasons to be cautious about LLM claims about academic findings.