GLM-4.7: China’s Open-Source AI Revolution Challenging Global Giants

China’s Zhipu AI Unveils GLM-4.7: The Open-Source AI That Outpaces GPT-5.1 on Key Benchmarks and Costs Up to 7x Less Than Claude

In the fast-evolving world of artificial intelligence, where American tech behemoths like OpenAI and Anthropic have long dominated the headlines, a quiet revolution is brewing from the East. China’s Zhipu AI, often dubbed “China’s OpenAI,” has just launched GLM-4.7, a groundbreaking open-source language model that’s not only challenging the status quo but outright surpassing established players in several critical areas. Released on December 22, 2025, this model represents a significant leap forward in accessible, high-performance AI, particularly for developers and researchers who crave power without the prohibitive costs.

Imagine an AI whose weights cost nothing to download, that can run on your own hardware if you have enough of it, and that delivers results that rival or exceed those of proprietary giants like GPT-5.1 from OpenAI and Claude Sonnet 4.5 from Anthropic. That’s the promise of GLM-4.7. Built with 358 billion parameters in a Mixture-of-Experts (MoE) architecture, it offers a context window of 204,800 input tokens and up to 131,072 output tokens, enough to take in large codebases or long documents and generate extensive responses. But what truly sets it apart is its focus on real-world applicability, especially in programming and logical reasoning tasks, where it has been hailed as the premier open-source model available today.

Zhipu AI, founded in 2019 as a spin-off from Tsinghua University, has been steadily climbing the ranks in the global AI arena. Backed by major investors including Alibaba, the company has poured resources into developing the GLM series, with each iteration pushing boundaries further. GLM-4.7 builds directly on the successes of its predecessor, GLM-4.6, but introduces refinements that address key pain points in AI usage, such as consistency in multi-step tasks and efficient tool integration. This isn’t just an incremental update; it’s a model engineered for production environments, where developers need reliability over flashy demos.

One of the most exciting aspects of GLM-4.7 is its open-source nature. The model weights are freely available on platforms like Hugging Face and ModelScope, meaning anyone with sufficient hardware can deploy it locally without relying on cloud services. This democratizes access to frontier-level AI, empowering individual developers, small startups, and even hobbyists to experiment and innovate without subscription fees. For those who prefer API access, Zhipu offers the GLM Coding Plan, which promises Claude-level performance at roughly one-seventh the cost: input at $0.60 per million tokens and output at $2.20, compared to Claude’s $3 input and $15 output. On those figures it is 5 times cheaper on input and nearly 7 times cheaper on output, opening doors for widespread adoption in resource-constrained settings.
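The price gap is easy to check with a little arithmetic. The sketch below uses only the per-million-token prices quoted above; the workload (2 million input tokens and 0.5 million output tokens) is a made-up example, and the function and variable names are illustrative rather than part of any vendor SDK.

```python
# Per-million-token prices in USD, as quoted in the article.
PRICES = {
    "GLM-4.7": {"input": 0.60, "output": 2.20},
    "Claude": {"input": 3.00, "output": 15.00},
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one workload at the quoted prices."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical workload: 2M input tokens and 0.5M output tokens.
glm = job_cost("GLM-4.7", 2_000_000, 500_000)
claude = job_cost("Claude", 2_000_000, 500_000)

# Price ratios for each token type, independent of the workload.
input_ratio = PRICES["Claude"]["input"] / PRICES["GLM-4.7"]["input"]
output_ratio = PRICES["Claude"]["output"] / PRICES["GLM-4.7"]["output"]

print(f"GLM-4.7: ${glm:.2f}, Claude: ${claude:.2f}")
print(f"input ratio: {input_ratio:.1f}x, output ratio: {output_ratio:.1f}x")
```

The "seven times" figure applies most closely to output-heavy workloads; a job that mixes input and output tokens, like the hypothetical one here, lands somewhere between the two ratios.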

But affordability alone wouldn’t make headlines. It’s the performance metrics that have the AI community buzzing. Across a suite of 17 benchmarks spanning reasoning, coding, and agentic tasks, GLM-4.7 posts strong results. On SWE-bench Verified, a widely used test of real-world coding ability built around fixing GitHub issues, it scores 73.8%, a 5.8-percentage-point improvement over GLM-4.6 that puts it within a few points of proprietary models like Claude Sonnet 4.5 (77.2%) and GPT-5.1 High (76.3%). On SWE-bench Multilingual, which covers multiple programming languages, it achieves 66.7%, outpacing many competitors and highlighting its versatility across codebases.

In logical reasoning and mathematical domains, GLM-4.7 shines brightly. On Humanity’s Last Exam (HLE), a benchmark that probes advanced academic-level reasoning, it notches 42.8% with tools enabled, surpassing Claude Sonnet 4.5’s 32.0% and GPT-5 High’s 35.2%. The result reflects the model’s ability to tackle complex problems that mimic real human intellectual challenges. Similarly, on AIME 2025, a benchmark drawn from a high-stakes math competition, GLM-4.7 hits 95.7%, edging out GPT-5.1’s 94.0% and far exceeding Claude’s 87.0%. These scores position it as a top contender, especially considering its open-source status, which allows for community-driven improvements.

What enables such feats? A key innovation is Zhipu AI’s “thinking technologies,” designed to preserve and enhance the model’s reasoning process. Preserved Thinking stands out here, as it maintains thinking blocks across multi-turn conversations, ensuring consistency in long-horizon tasks. This means the AI doesn’t “forget” its earlier logic midway through a complex problem-solving session; it keeps the entire chain of thought intact from start to finish. Interleaved Thinking prompts the model to deliberate before every response or tool call, while Turn-level Thinking allows users to toggle thinking modes for balancing latency and depth. These features make GLM-4.7 particularly adept at agentic tasks, where AI must interact with external tools like web browsers or APIs.
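To make the idea of Preserved Thinking concrete, here is a minimal conceptual sketch of what keeping or dropping reasoning blocks between turns looks like. It is not Zhipu's implementation or API: the `Turn` data model, the `build_context` function, and the `preserve_thinking` flag are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Turn:
    role: str                       # "user" or "assistant"
    content: str                    # visible message text
    thinking: Optional[str] = None  # reasoning block, assistant turns only


def build_context(history: List[Turn], preserve_thinking: bool) -> List[Turn]:
    """Return the turns that would be sent back to the model on the next call.

    With preserve_thinking=True, earlier reasoning blocks are kept, so the
    model can see its own prior chain of thought. With False, they are
    stripped and only the visible text survives.
    """
    if preserve_thinking:
        return list(history)
    return [Turn(t.role, t.content, None) for t in history]


# Hypothetical three-turn coding session.
history = [
    Turn("user", "Refactor parse_config to return a dict."),
    Turn("assistant", "Done; callers updated.",
         thinking="parse_config is used in cli.py and tests; update both."),
    Turn("user", "Now add type hints."),
]

kept = build_context(history, preserve_thinking=True)
stripped = build_context(history, preserve_thinking=False)

# Count how many reasoning blocks survive in each mode.
print(sum(t.thinking is not None for t in kept))
print(sum(t.thinking is not None for t in stripped))
```

In the stripped variant the model would have to rediscover which files depend on `parse_config`; in the preserved variant that earlier reasoning is still in view when the follow-up request arrives, at the cost of a longer context.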

For instance, in tool-using benchmarks like τ²-Bench, GLM-4.7 scores 87.4%, matching Claude Sonnet 4.5’s 87.2% and outperforming GPT-5.1’s 82.7%. On BrowseComp, a web browsing task evaluator, it achieves 52.0%, better than Claude’s 24.1%. This capability is crucial for developers building AI agents that need to fetch data, execute commands, or navigate interfaces autonomously. In terminal-based tasks, such as Terminal Bench 2.0, it jumps to 41.0% from GLM-4.6’s 24.5%, demonstrating enhanced handling of command-line environments.
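The size of these gaps varies a lot, and it helps to separate percentage points from relative change. The short sketch below does that using only the scores quoted in the paragraph above; the dictionary layout and the `gaps` helper are illustrative.

```python
# Scores (percent) quoted in the paragraph above.
# Each entry: benchmark -> (GLM-4.7 score, {baseline name: baseline score})
SCORES = {
    "tau2-Bench": (87.4, {"Claude Sonnet 4.5": 87.2, "GPT-5.1": 82.7}),
    "BrowseComp": (52.0, {"Claude": 24.1}),
    "Terminal Bench 2.0": (41.0, {"GLM-4.6": 24.5}),
}


def gaps(scores):
    """Return (benchmark, baseline, point gap, relative gap in %) rows."""
    rows = []
    for bench, (glm, baselines) in scores.items():
        for name, base in baselines.items():
            points = round(glm - base, 1)                   # percentage points
            relative = round(100 * (glm - base) / base, 1)  # percent of baseline
            rows.append((bench, name, points, relative))
    return rows


rows = gaps(SCORES)
for bench, name, points, relative in rows:
    print(f"{bench}: +{points} points vs {name} ({relative:+}% relative)")
```

Read this way, the τ²-Bench lead over Claude Sonnet 4.5 is a fraction of a point, effectively a tie, while the Terminal Bench 2.0 gain over GLM-4.6 is 16.5 points, or about two-thirds better than the earlier score.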

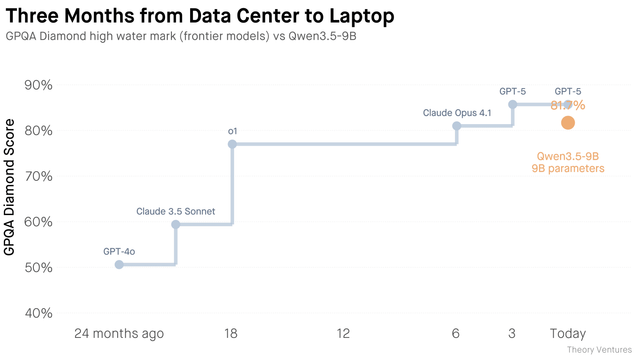

Comparisons to industry leaders reveal GLM-4.7’s edge in value proposition. While GPT-5.1, released earlier in 2025, boasts raw power in some general reasoning tasks—like 88.1% on GPQA-Diamond versus GLM-4.7’s 85.7%—it comes at a premium cost and remains closed-source. Claude Sonnet 4.5, meanwhile, excels in certain agentic scenarios but lags in cost-efficiency and open accessibility. GLM-4.7 bridges this gap by offering similar or superior performance in coding and tool use while being freely deployable. Independent tests, such as those on Medium, confirm that GLM-4.7 is “consistently close enough to GPT-5.1 and Claude 4.5” in production scenarios, with dramatic improvements in behavior over previous versions.

The implications for the AI ecosystem are profound. In a landscape where access to cutting-edge models is often gated by high fees or proprietary restrictions, GLM-4.7 lowers barriers, fostering innovation across borders. Chinese AI firms like Zhipu are proving that open-source doesn’t mean second-rate; in fact, it can lead to faster iteration through global collaboration. Developers have already begun integrating it into workflows, reporting smoother UI generation and more reliable multi-step coding agents. For example, in vibe coding—creating aesthetically pleasing outputs like webpages or slides—GLM-4.7 produces cleaner results with accurate layouts, a step up from earlier models.

Looking ahead, GLM-4.7’s release signals accelerating competition in AI. With variants like GLM-4.7-Flash, a lighter 30B MoE model optimized for consumer hardware (running at 80+ tokens per second on MacBooks), Zhipu is targeting everyday users too. This could democratize AI further, enabling local runs on laptops without cloud dependency. Challenges remain, such as hardware requirements for the full model and ensuring ethical use, but the trajectory is clear: AI is becoming more inclusive.
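A back-of-the-envelope estimate shows why hardware is the main hurdle for the full model and why the Flash variant fits on a laptop. The sketch below counts memory for the weights only, using the parameter counts given in the article; the storage precisions are assumptions for illustration, and the estimate ignores the KV cache, activations, and runtime overhead, so real requirements will be higher. Note that a Mixture-of-Experts model still has to hold all of its experts in memory, even though only some are active per token.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


# Parameter counts in billions, as stated in the article.
MODELS = {"GLM-4.7": 358, "GLM-4.7-Flash": 30}

# Assumed storage formats; whether a given quantized build exists is not
# something the article states.
PRECISIONS = {"16-bit": 16, "8-bit": 8, "4-bit": 4}

for name, size in MODELS.items():
    for label, bits in PRECISIONS.items():
        print(f"{name} @ {label}: ~{weight_memory_gb(size, bits):.0f} GB")
```

Even at an assumed 4 bits per parameter, the full model's weights come to roughly 179 GB, far beyond a typical laptop, while the 30B variant drops to about 15 GB.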

As we stand on the cusp of 2026, GLM-4.7 isn’t just a model; it’s a statement. It proves that innovation thrives in openness, and that cost shouldn’t be a barrier to excellence. Whether you’re a coder tackling GitHub bugs or a researcher probing mathematical frontiers, this Chinese powerhouse offers tools to push boundaries without breaking the bank. The future of AI looks brighter—and more affordable—thanks to efforts like this.


Zhipu AI Official GLM-4.7 Documentation – Provides detailed benchmark scores, model architecture (358B parameters, MoE), context window (204,800 input/131,072 output), and innovations like Preserved Thinking. This is the primary source for performance metrics such as 73.8% on SWE-bench Verified, 66.7% on SWE-bench Multilingual, 41% on Terminal Bench 2.0, 42.8% on HLE, 95.7% on AIME 2025, 87.4% on τ²-Bench, and 52.0% on BrowseComp.

Link: https://docs.z.ai/guides/llm/glm-4.7

Reddit Discussion on Zhipu AI’s GLM-4.7 Release – Community insights on the December 22, 2025 release, highlighting gains in coding and reasoning over GPT-5.2 and Claude 4.5 Sonnet.

Link: https://www.reddit.com/r/singularity/comments/1pt38jt/zhipu_ai_releases_glm47_beating_gpt52_and_claude

Medium Article: GLM-4.7 vs GLM-4.6 vs DeepSeek-V3.2 vs Claude 4.5 vs GPT-5.1 – Comparative analysis showing GLM-4.7’s proximity to top models in production scenarios, with visual benchmarks and notes on improvements over predecessors.

Link: https://medium.com/data-science-in-your-pocket/glm-4-7-vs-glm-4-6-vs-deepseek-v3-2-vs-claude-4-5-vs-gpt-5-1-e4e69c6099df

LLM Stats Comparison: Claude Sonnet 4 vs GLM-4.7 – Side-by-side benchmark data, including GLM-4.7’s advantages in AIME 2025, GPQA, and SWE-Bench Verified.

Link: https://llm-stats.com/models/compare/claude-sonnet-4-20250514-vs-glm-4.7

Bind AI Blog: GLM-4.7 vs Claude Sonnet 4.5 vs GPT-5.2 – Ultimate Coding Comparison – Pricing details (e.g., $0.60/$2.20 per million tokens for GLM vs. higher for Claude), coding focus, and variant flexibility comparisons. Published January 21, 2026.

Link: https://blog.getbind.co/glm-4-7-vs-claude-sonnet-4-5-vs-gpt-5-2-ultimate-coding-comparison

Automatio AI: GLM-4.7 Pricing, Context Window, and Benchmark Data – Overview of the model’s 200K context window, 73.8% SWE-bench performance, and comparisons to Claude Sonnet 4.5 and GPT-5.1 in tool use. Published December 22, 2025.

Link: https://automatio.ai/models/glm-4-7

LinkedIn Post by Raj Gaur: Zhipu AI’s GLM-4.7 – Highlights coding mastery (73.8% on SWE-bench Verified) and Preserved Thinking for workflows.

Link: https://www.linkedin.com/posts/rajgaur_ai-machinelearning-codingai-activity-7412667269010350080-UqEs

NVIDIA NIM APIs: glm4.7 Model by Z-ai – Evaluation scores across 17 benchmarks in reasoning, coding, and agent tasks.

Link: https://build.nvidia.com/z-ai/glm4_7/modelcard

The Sequence Substack: The Amazing GLM 4.7 – In-depth on SWE-bench Verified score (73.8%) and agentic reliability focus.

Link: https://thesequence.substack.com/p/the-sequence-ai-of-the-week-781-the

Towards AI: The $14 vs $2 Plot Twist – Why GLM-4.7 Just Broke the AI Leaderboard – Ranking on Artificial Analysis Index (#6), cost savings ($2.20/M tokens), and surpassing Kimi K2. Published December 31, 2025.

Link: https://pub.towardsai.net/the-14-vs-2-plot-twist-why-glm-4-7-just-broke-the-ai-leaderboard-addeef80a2f8

VERTU: GLM-4.7 vs GPT-5.1 vs Claude Sonnet 4.5 – Terminal operations and reasoning comparisons.

Link: https://vertu.com/ar/%D9%86%D9%85%D8%B7-%D8%A7%D9%84%D8%AD%D9%8A%D8%A7%D8%A9/glm-4-7-vs-gpt-5-1-vs-claude-sonnet-4-5-ai-coding-model-comparison

Julian Goldie: GLM 4.7 – The Free AI That Just Beat Gemini and Claude – Benchmark results like 83% on HumanEval and comparisons to Gemini 3 Pro and Claude Opus.

Link: https://juliangoldie.com/glm-4-7

YouTube Video: GLM-4.7-Flash: 42x Cheaper Than Claude – Performance on SWE-bench (59%) and pricing ($0.07/M tokens vs. Claude’s $3/M).

Link: https://www.youtube.com/watch?v=NKGiDGBgtqQ

Medium: GLM-4.7 Flash – Best Mid-Size LLM – Reasoning, coding advantages over GPT-OSS-20B, and deployment ease.

Link: https://medium.com/data-science-in-your-pocket/glm-4-7-flash-best-mid-size-llm-that-beats-gpt-oss-20b-eea501e821b8

LLM Stats: Claude Opus 4.5 vs GLM-4.7 – Token limits and generation comparisons.

Link: https://llm-stats.com/models/compare/claude-opus-4-5-20251101-vs-glm-4.7
