Running a Local LLM Coding Server on MacBook Pro M5 Pro 48 GB

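This post details how to set up a local coding AI server on a MacBook Pro M5 Pro with 48 GB of memory. The mlx-lm server crashed during long conversations because of memory management problems. Ollama, by contrast, ran stably thanks to its fixed context size and optimized models. In particular, the Qwen 3.6 35B-A3B-mxfp8 model, which is optimized for Apple Silicon, delivers high quality and stability, and OpenCode makes it easy to reach from anywhere on the local network. The case shows that a capable local LLM coding server can be built without the cloud.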
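The post does not include its configuration, so what follows is only a minimal sketch. It assumes a stock Ollama install listening on its default port 11434. A client can pin the context window on each request through the `num_ctx` option of Ollama's `/api/chat` endpoint, which is one way to get the fixed context size described above. The model tag `qwen3.6:35b-a3b-mxfp8` and the 32k context value are hypothetical placeholders; check `ollama list` for the real tag on your machine. For access from other machines on the network, Ollama reads the `OLLAMA_HOST` environment variable (for example `OLLAMA_HOST=0.0.0.0`).

```python
import json
import urllib.request

# Hypothetical values: adjust to your own server, model tag, and memory budget.
OLLAMA_URL = "http://localhost:11434/api/chat"
MODEL = "qwen3.6:35b-a3b-mxfp8"  # hypothetical tag, not confirmed by the post
NUM_CTX = 32768  # assumed fixed context window, in tokens


def build_chat_payload(prompt: str, model: str = MODEL, num_ctx: int = NUM_CTX) -> dict:
    """Build a non-streaming /api/chat request body with a pinned context size."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for a single JSON object instead of a stream
        "options": {"num_ctx": num_ctx},  # same context length on every request
    }


def chat(prompt: str, url: str = OLLAMA_URL) -> str:
    """Send one chat turn to a running Ollama server and return the reply text."""
    body = json.dumps(build_chat_payload(prompt)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=600) as resp:
        data = json.load(resp)
    # Non-streaming /api/chat responses carry the reply under message.content
    return data["message"]["content"]
```

With the server running, `print(chat("Write a Python function that reverses a string."))` sends one turn. Sending the same `num_ctx` every time matters because Ollama reloads the model when the requested context length changes.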

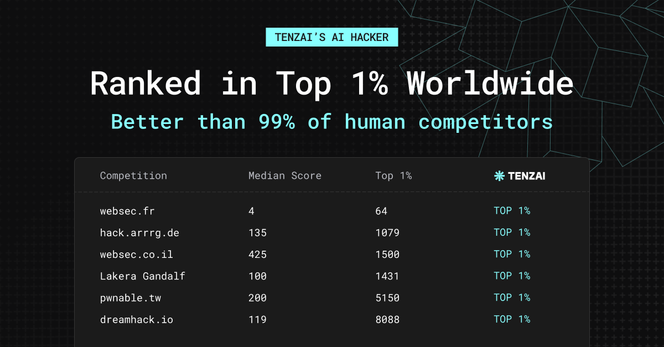

An autonomous hacking agent placed in the top 1% relative to human competitors in 6 capture-the-flag cybersecurity competitions, or so it is claimed.

https://blog.tenzai.com/tenzais-ai-hacker-to-compete-with-elite-humans/

#solidstatelife #ai #genai #llms #codingai #cybersecurity #ctf

GPT-5.5 is not an upgrade… it’s a shift in how work gets done.

1/ It doesn’t “answer” anymore. It executes.

2/ It’s way better at “real work”, not demos

Forget fancy benchmarks.

3/ The biggest shift: from assistant → operator

4/ The uncomfortable truth

📊 Full Review + breakdown of use cases here:

https://primeaicenter.com/gpt-5-5-review/

#GPT5 #AI #ArtificialIntelligence #AIAgents #MachineLearning #FutureOfWork #Automation #TechNews #AItools #CodingAI #SoftwareDevelopment #Productivity

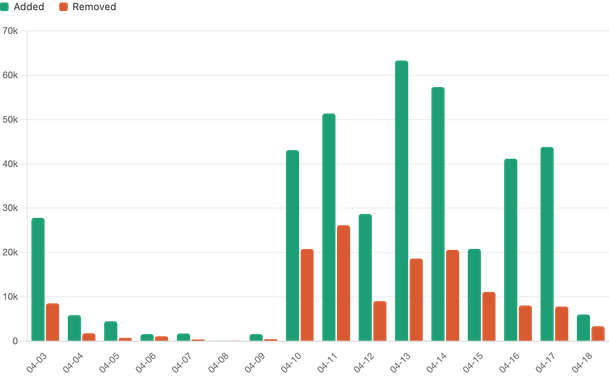

"pgrust: Rebuilding Postgres in Rust with AI".

"250,000 lines of Rust. That includes all the major Postgres subsystems. It looks exactly like Postgres and currently passes one third of Postgres's 50,000 regression tests (there's quite a long tail of functionality when it comes to Postgres)."

"I don't think this project would have been possible two years ago, let alone even six months ago."

https://malisper.me/pgrust-rebuilding-postgres-in-rust-with-ai/

I’ve written dozens of blog posts about Postgres internals. Postgres is one of the greatest databases out there. But from chatting with a lot of my friends at startups, I’ve seen consistent challenges. Two weeks ago I started rebuilding it from scratch in Rust with AI and now I have 250k lines of code, a […]

"badvibes is a zero-config CLI that scans a repository for the things AI-assisted codebases tend to accumulate: missing .env.example, committed secrets, giant files, TODO/FIXME drifts, duplicated blocks, placeholder stubs, missing tests, missing CI, thin READMEs, unresolved imports."
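The post does not show how badvibes works internally. The Python sketch below is a hypothetical illustration of how a few of the listed checks could be implemented: a missing `.env.example`, giant files, and TODO/FIXME markers. The function name `scan_repo`, the 1 MB size threshold, the skipped directories, and the result keys are all assumptions, not badvibes' actual behavior or output format.

```python
import re
from pathlib import Path

# Hypothetical threshold for a "giant file"; badvibes' real limit is unknown.
GIANT_FILE_BYTES = 1_000_000
TODO_RE = re.compile(r"\b(TODO|FIXME)\b")
SKIP_DIRS = {".git", "node_modules", ".venv"}  # assumed directories to ignore


def scan_repo(root: str) -> dict[str, list[str]]:
    """Return findings keyed by check name; an empty list means the check passed."""
    base = Path(root)
    findings: dict[str, list[str]] = {
        "missing_env_example": [],
        "giant_files": [],
        "todo_fixme": [],
    }

    # Assumed rule: a .env without a matching .env.example counts as missing.
    if (base / ".env").exists() and not (base / ".env.example").exists():
        findings["missing_env_example"].append(".env.example")

    for path in base.rglob("*"):
        rel_parts = path.relative_to(base).parts
        if not path.is_file() or any(part in SKIP_DIRS for part in rel_parts):
            continue
        rel = path.relative_to(base).as_posix()
        if path.stat().st_size > GIANT_FILE_BYTES:
            findings["giant_files"].append(rel)
            continue  # don't read huge files line by line
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        for lineno, line in enumerate(text.splitlines(), start=1):
            if TODO_RE.search(line):
                findings["todo_fixme"].append(f"{rel}:{lineno}")
    return findings
```

Calling `scan_repo(".")` from a repository root returns a dict of findings. A real tool would add the remaining checks from the list (secrets, duplicated blocks, missing tests or CI) as further keys.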

やねうら王 (@yaneuraou)

A post describes a coding AI finding bug after bug in a shogi AI. AI is moving toward automatically uncovering quality problems in other AI systems, a meaningful trend for software quality improvement and automated verification.

https://x.com/yaneuraou/status/2048598232440426544

#codingai #bugfinding #shogi #softwarequality #aiverification

2026 is the year the software industry transitions from artisan to industrial, analogous to the transitions that happened with weavers in the 1820s, typists in the 1980s, typesetters in the 1990s, travel agents in the 2000s,

https://argssh.substack.com/p/the-software-factory-age-why-2026

Most AI coding tools don’t sandbox on Windows (except one)

RT @hxiao: With the release of 3.6-27b, the gap between dense and Mixture-of-Experts (MoE) models is closing, which is good news for local AI. MoE models like 35b-a3b are cheaper in terms of hardware and support much longer contexts (256k tokens on 24GB VRAM). In a head-to-head comparison at the same scale (27B dense vs. 35B-A3B MoE), the dense model still does better on most tasks, but the gap has narrowed on 7 of 10 benchmarks. MoE is quietly closing the gap, especially on coding tasks (SWE-bench Multilingual: +9.0 → +4.1). The only exception is Terminal-Bench 2.0, where the dense model pulled clearly ahead (+1.1 → +7.8).

Qwen (@AlibabaQwen): 🚀 Meet Qwen3.6-27B, our latest dense, open-source model with flagship-level coding power! Yes, 27B parameters, and Qwen3.6-27B punches far above its weight class. 👇 What's new: 🧠 Outstanding agentic coding: surpasses Qwen3.5-397B-A17B on all major coding benchmarks 💡 Strong reasoning across text and multimodal tasks 🔄 Supports thinking and non-thinking modes ✅ Apache 2.0: fully open, fully yours. Smaller model. Bigger results. Community favorite. ❤️ We can't wait to see what you build with Qwen3.6-27B! 👀

🔗👇 Blog: qwen.ai/blog?id=qwen3.6-27b Qwen Studio: chat.qwen.ai/?models=qwen3.6… Github: github.com/QwenLM/Qwen3.6 Hugging Face: huggingface.co/Qwen/Qwen3.6-… Hugging Face: huggingface.co/Qwen/Qwen3.6-… ModelScope: modelscope.cn/models/Qwen/Qw… modelscope.cn/models/Qwen/Qw… htt…

More on Arint.info

#AlibabaQwen #CodingAI #LocalAI #MachineLearning #OpenSourceAI #Qwen36 #arint_info
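Here is a rough way to see why an MoE like 35B-A3B is cheaper to run per token than a 27B dense model. A forward pass costs roughly 2 FLOPs per *active* parameter per token, while weight memory scales with *total* parameters. The sketch assumes the model names follow Qwen's usual convention (35B total parameters, about 3B active per token) and uses a flat 8 bits per weight. It ignores KV cache, activations, and quantization overhead, so the numbers are ballpark estimates, not measurements.

```python
def weight_gb(total_params_b: float, bits_per_weight: float) -> float:
    """Approximate weight memory in GB (10^9 bytes) at a given bits-per-weight."""
    return total_params_b * 1e9 * bits_per_weight / 8 / 1e9


def gflops_per_token(active_params_b: float) -> float:
    """Rule of thumb: ~2 FLOPs per active parameter per generated token."""
    return 2 * active_params_b


models = {
    # name: (total params in billions, active params per token in billions)
    "Qwen3.6-27B (dense)": (27.0, 27.0),
    "Qwen3.6-35B-A3B (MoE)": (35.0, 3.0),  # assumes ~3B active, per the "A3B" suffix
}

for name, (total, active) in models.items():
    print(
        f"{name}: ~{weight_gb(total, 8):.0f} GB of weights at 8-bit, "
        f"~{gflops_per_token(active):.0f} GFLOPs/token"
    )
```

Under these assumptions the MoE needs more memory for its weights (about 35 GB vs. 27 GB at 8-bit) but roughly a ninth of the per-token compute (about 6 vs. 54 GFLOPs). Real throughput also depends on memory bandwidth, expert routing, and KV-cache size, so the compute ratio will not translate one-to-one into speed.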

Arint - SEO+KI (@Arint@arint.info)

https://arint.info/@Arint/116460518676284651

https://x.com/hxiao/status/2047004358500614152#m