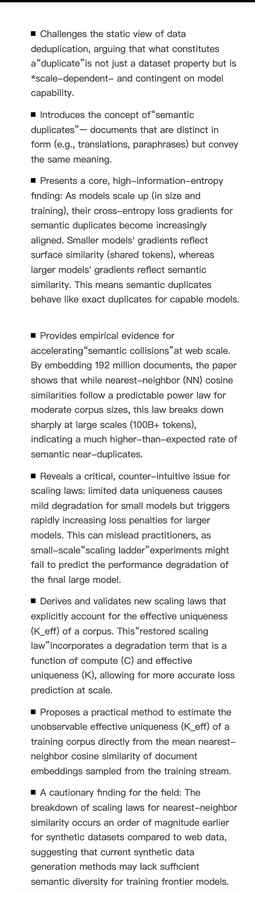

Google deploys Gemini 3.5 Flash to Search, Spark, and enterprise as Pro is delayed to June. Meta cuts 8,000 roles while shifting 7,000 to AI work. Anthropic hires Andrej Karpathy for pretraining research. The infrastructure shift is underway: compute, not capability, is becoming the constraint.

https://www.implicator.ai/google-ships-agents-meta-cuts-people-anthropic-buys-brains/