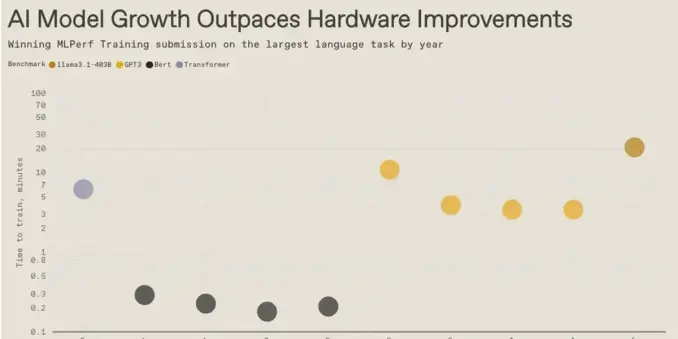

🚀 NVIDIA’s new NVFP4 training recipe cuts AI model training time and cost, helping Blackwell Ultra GPUs set new MLPerf Training records on large language models such as Llama 3.1. Discover how GPU acceleration is reshaping open‑source AI development. #NVFP4 #BlackwellUltra #MLPerf #Llama3_1

🔗 https://aidailypost.com/news/nvidias-nvfp4-training-recipe-boosts-ai-speed-cuts-costs