UPDATED! Generative Artificial Intelligence Tools for Academic Research: AI Research Assistants, AI-Powered Document Analysis Tools, and A Look at Elicit, Undermind, and NotebookLM

https://ryanschultz.com/2026/03/09/generative-artificial-intelligence-tools-for-academic-research-ai-research-assistants-ai-powered-document-analysis-tools-and-a-look-at-elicit-undermind-and-notebooklm/

The pretext is basically "what if you're the one building Big Brother for the government?"

#OffBroadway #play #moral #thriller #plot #points #elicit #gasps #audience #BigBrother #surveillance #state #Palantir #data #harvester #broker #privacy #laws

https://www.datatheplay.com/

In the DGI practical seminar "RAG, Deep Research, Research Assistants: What Can You Expect?", Johanna Gröpler presents current tools such as #ChatGPT, #Elicit & #NotebookLM, with exercises and discussion.

📅 5 November 2025, 13:00–16:00, online

🔗 Info + registration: https://dgi-info.de/event/rag-deep-research-forschungsassistenten-was-koennen-sie-erwarten/

"Comparison of Elicit AI and Traditional Literature Searching in Evidence Syntheses Using Four Case Studies"

Cochrane Evid Synth Methods, 9-27-25

The sensitivity of Elicit was poor, averaging 39.5% compared to 94.5% in the original reviews. Elicit identified some included studies not identified by the original searches & had an average of 41.8% precision which was higher than the 7.55% average of the original reviews.

Comparison of Elicit AI and Traditional Literature Searching in Evidence Syntheses Using Four Case Studies

Elicit AI aims to simplify and accelerate the systematic review process without compromising accuracy. However, research on Elicit's performance is limited. To determine whether Elicit AI is a viable tool for systematic literature searches and ...
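For readers unsure how the two figures quoted in the post are defined, here is a minimal sketch of the standard definitions of sensitivity (recall) and precision for a literature search. The function names and the counts in the usage lines are hypothetical and chosen only for illustration; they are not taken from the study.

```python
def sensitivity(relevant_found: int, relevant_total: int) -> float:
    """Share of all relevant (included) studies that the search retrieved."""
    if relevant_total <= 0:
        raise ValueError("relevant_total must be positive")
    return relevant_found / relevant_total


def precision(relevant_found: int, retrieved_total: int) -> float:
    """Share of retrieved records that turned out to be relevant."""
    if retrieved_total <= 0:
        raise ValueError("retrieved_total must be positive")
    return relevant_found / retrieved_total


# Hypothetical counts, NOT from the paper: a review includes 20 studies,
# and a search returns 19 records, 8 of which are among those 20.
included_in_review = 20
records_retrieved = 19
relevant_retrieved = 8

print(f"sensitivity: {sensitivity(relevant_retrieved, included_in_review):.1%}")
print(f"precision:   {precision(relevant_retrieved, records_retrieved):.1%}")
```

A search can score well on one measure and poorly on the other: a short, well-targeted result list raises precision but tends to miss included studies, which lowers sensitivity.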

Find and summarize literature faster with #AI?

Programs for systematic literature reviews such as #Elicit seem at first glance to save a lot of work. But they reproduce the power dynamics of the academic system by favoring English-language contributions, writes Rebecca Schmidt. And because the program can often access only the abstracts, researchers end up having to download and read all the texts themselves anyway 👇

Writing the State of Research with AI (Elicit): A Critical Experience Report

Introduction: This blog post takes a critical look at the (partial) automation of literature searching and research, and of summarizing the state of research, with the help of digital tools. Various providers and start-ups currently offer continuously developed software products to help researchers find the relevant literature on a research question and summarize the state of research (Cao …

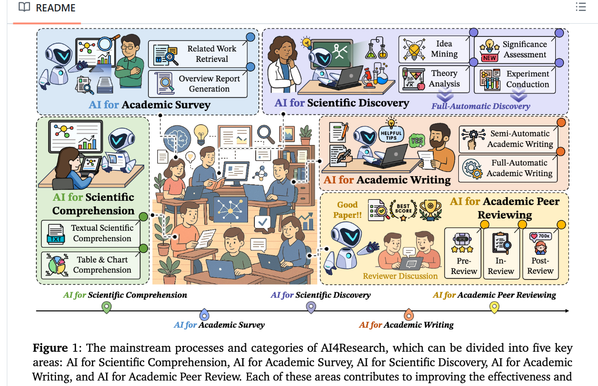

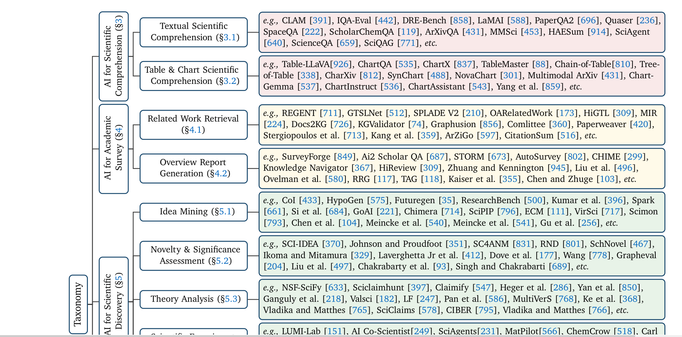

"AI4Research: A Survey of Artificial Intelligence for Scientific Research"

This is by far the best survey paper I have seen analyzing how various AI tools support various aspects of scientific research. This work analyzed a vast amount of information compiled in 950 references that were listed in the paper.

https://arxiv.org/abs/2507.01903

They provide an extensive paper list in a GitHub repo:

https://ai-4-research.github.io/

and see:

https://github.com/LightChen233/Awesome-AI4Research

All sizzle, no steak: AI tools are not able to act as credible knowledge brokers by summarising evidence in mathematics education

https://bsrlm.org.uk/wp-content/uploads/2025/05/BSRLM-CP-45-1-10.pdf

from https://bsky.app/profile/honeypisquared.bsky.social/post/3lraakawvqc2a

"We found that AI tools - both general like #ChatGPT and research-specific like #Elicit - lack the reliability, relevancy and accuracy to summarise research for teachers. This is important because we have been sold the idea that AI will make research more accessible."

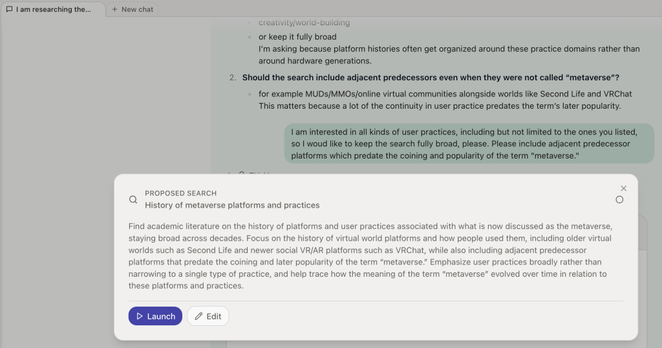

How we evaluated Elicit Reports

We recently announced Elicit Reports: fully-automated research overviews for actual researchers, inspired by systematic reviews. Through external evaluation by researcher specialists, we find that Elicit Reports produce higher quality research overviews and save more time than the other “deep research” tools, including well-known ones like ChatGPT Deep Research, Perplexity Deep
