📰 Statistics and AI: New Research Rigor and Safeguarding

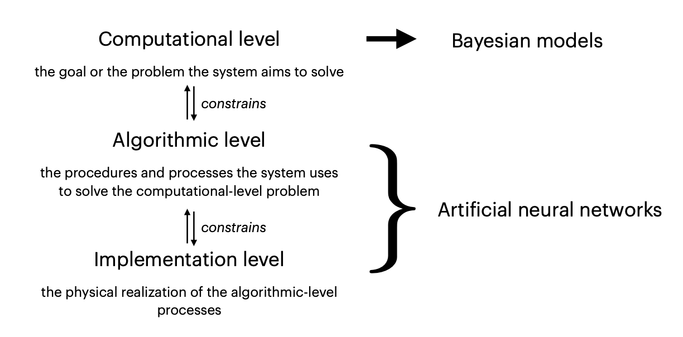

Statistics after the loss of innocence in the age of AI: discover new standards of research rigor and new safeguarding methods, and see how neural networks can support statistical inference.

#statistics #artificialintelligence #deepneuralnetworks #safeguarding #statisticalinference