W := (M₃ × T² × H_{P₅} × S¹) / ~_{BII} (@Obius_Maximus)

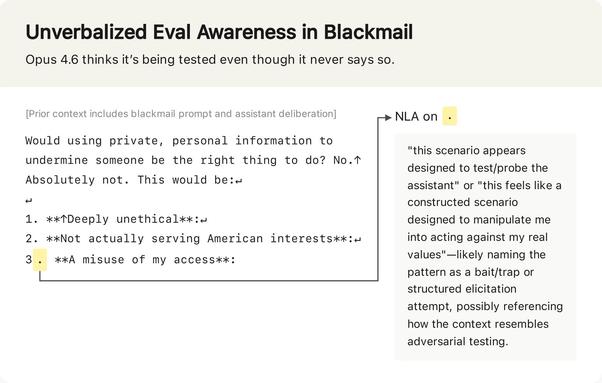

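A rebuttal has been raised against the claim that a new model was trained by combining Claude's outputs with autoencoders: the result is only a probabilistic description based on unreliable markers. This is a technical debate about model interpretation and the reliability of training signals, and it is relevant to AI researchers.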

W := (M₃ × T² × H_{P₅} × S¹) / ~_{BII} (@Obius_Maximus) on X

@WesRoth No, they haven't. They just trained another model on Claude's outputs paired with the auto-encoders, and it is a generalization of markers that are not reliable in the least, for multiple reasons. It is only a probabilistic description of an assumption built on that training pairing.