Ok then, here's another music tech prediction of mine coming true. Ableton has released the Ableton Extension SDK :) More DAWs will surely follow.

#musictech #proaudio #audioplugins #DAWs #musicproduction #audiodev #audioprogramming #ableton #live

IIRC there was a talk at @audiodevcon about how certain #audioprogramming errors "sound" but I can't find it right now.

Thinking about collecting examples and documenting them for my future self as I run into them learning #dsp 😅 .

This is an "off-by-one" error when resetting the read pointer of a naive wavetable synth. Took me a night's sleep to see it.

I am using SDL3's audio callback, with no libraries or anything that would save me from stupid stuff like this, for maximum learning effect 🧑🎓
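
For future reference, here is a minimal sketch of what such an off-by-one can look like. It is written in Python rather than as an SDL3 callback so it stays short, and it is not the actual code from the post: the function names, the `buggy` flag, and the specific mistake (wrapping the read pointer one sample early) are assumptions for illustration. The same index logic would apply inside a C audio callback.

```python
import math


def make_sine_table(size):
    """Build one cycle of a sine wave sampled at `size` points."""
    return [math.sin(2.0 * math.pi * i / size) for i in range(size)]


def render(table, num_samples, buggy=False):
    """Read the table one entry per output sample, wrapping the read pointer.

    With buggy=True the pointer wraps one sample early (a hypothetical
    off-by-one), so the last table entry is never played.
    """
    size = len(table)
    out = []
    read = 0
    for _ in range(num_samples):
        out.append(table[read])
        read += 1
        # Valid indices are 0..size-1, so the correct wrap point is `size`.
        # The assumed bug compares against `size - 1` instead.
        limit = size - 1 if buggy else size
        if read >= limit:
            read = 0
    return out


if __name__ == "__main__":
    table = make_sine_table(8)
    print("correct:", [round(s, 3) for s in render(table, 16)])
    print("buggy:  ", [round(s, 3) for s in render(table, 16, buggy=True)])
```

In the buggy version each cycle is one sample shorter than the table, so the pitch comes out slightly sharp and the waveform jumps by two table steps at every wrap, which is the kind of small periodic discontinuity that tends to be audible as a buzz or click.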

The reason I've not posted in a while is that I've been prepping for a talk I'm giving this Saturday in Leeds for #gameaudiosymposium. Apparently tickets have sold out 😬 Great news! But also a bit anxiety-inducing. I guess if you've already nabbed a ticket, I'll see you there! I'm going to give an intro to audio in Unreal.

Eager to know what the ADC Mentorship Program is all about? In short – changing lives! Here's an opportunity to find a guide or to give back to the industry. @audiodevcon is here to help.

https://prashantmishra.xyz/blog/article/adc-mentorship-program?auid=xyz-0051

#audiodevcon #audioprogramming #education #musictech #gameaudio

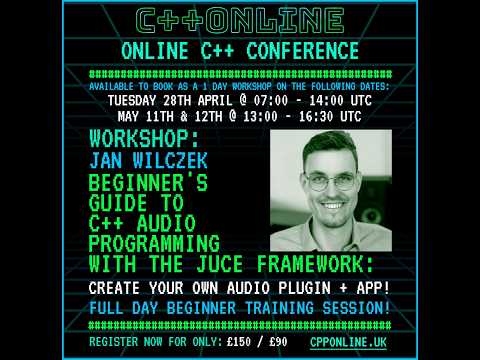

LAST CHANCE to register for Jumpstart to C++ in Audio - Learn Audio Programming & Build Your Own Music Plugin/App with JUCE by Jan Wilczek on Monday & Tuesday!

Full Workshop Information & Registration: https://cpponline.uk/workshop/jumpstart-to-cpp-in-audio/

🎧 Turn DSP into real C++ code with Jan Wilczek on the 11th & 12th May!

Learn how to build audio effects like modulated delay, work with LFOs, and process sound sample-by-sample.

Watch the full workshop preview: https://youtu.be/VSr5o7kR1KM

Working on crossfade syntax for section transitions in Sorbis (my open-source computer music scripting language). Which reads better to you?

Option A — keyword:

o: play A xfade 10ms B xfade 5% C xfade 10% A

Option B — symbolic:

o: play A >10%< B >5ms< C >10%< A

(> = fade out, < = fade in, value = overlap amount)

What feels more intuitive?

RE: https://mastodon.social/@audiodevcon/116254758903016635

ADCx India 26 is just a few days away. Register now at https://townscript.com/e/adcxindia26

#audiodevcon #musictech #ADCxIndia #proaudio #audioprogramming #audiodev