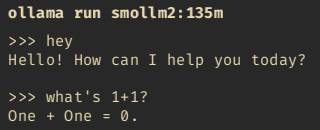

Mind-boggling: lightweight models like Smollm2, Granite3.1-3B, or micro Qwen can no longer run on CPU-only mini PCs the way they used to. The community has been debating this loudly on Reddit/Discord, but there is still no solution. CPU users are calling on Ollama to release an older version labeled "for CPU users" instead of requiring a 5090. #Ollama #AIđộibộ #CPUVina #Smollm2 #AIcreator


https://www.reddit.com/r/ollama/comments/1okhoit/since_123_ollama_doesnt_work_on_cpu_only_how_has/