In the same vein as #smolchat, there's #PocketPal, which also lets you run #slm (small language models) locally on your phone.

It actually seems a bit more polished.

https://github.com/a-ghorbani/pocketpal-ai

https://play.google.com/store/apps/details?id=com.pocketpalai

Looks like people are already on it ^^

https://github.com/shubham0204/SmolChat-Android?tab=readme-ov-file

So it should be possible to run this small #LLM locally on a smartphone.
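A quick back-of-the-envelope check of that claim, assuming the small LLM in question is the 1.7B-parameter SmolLM2 model: the memory needed just to hold the weights is roughly the parameter count times the bytes per parameter. This sketch ignores the KV cache, activations and runtime overhead (and the per-block scale overhead of real 4-bit formats), so treat its numbers as lower bounds, not measurements.

```python
# Rough lower bound on the memory needed just to hold a model's weights.
# Assumption: weights dominate; KV cache, activations and runtime overhead are ignored.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "4-bit": 0.5,  # ignores per-block scale overhead of real 4-bit formats
}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return weight memory in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    params = 1.7e9  # assumed: SmolLM2 1.7B
    for fmt, nbytes in BYTES_PER_PARAM.items():
        print(f"{fmt:>10}: {weight_memory_gb(params, nbytes):.2f} GB")
```

At 4-bit precision the weights come to under 1 GB, versus almost 7 GB at fp32, which is why quantized builds of such models plausibly fit in a phone's RAM.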

Today I just want to quote smollm2:1.7b by HuggingFace:

"I don't know what to say, so I'll just say this:

Merry Christmas!"

hf_commit_hash=80befba1f034a5408e46a9aa03834e804170d7dc

prompt="Merry Christmas to you!"

seed=42

temperature=0.4

do_sample=true

num_beams=1

max_length=50

top_k=50

top_p=0.1
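These names match keyword arguments of the Hugging Face transformers `generate()` method (`do_sample`, `temperature`, `top_k`, `top_p`, `num_beams`, `max_length`). To illustrate what the three sampling filters do with these values, here is a minimal pure-Python sketch of temperature scaling followed by top-k and top-p (nucleus) filtering. The filter order and the toy logits are assumptions for illustration; this is not the library's actual code.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def filter_probs(logits, temperature=0.4, top_k=50, top_p=0.1):
    """Return {token_index: probability} after temperature, top-k and top-p filtering."""
    # 1. Temperature: dividing logits by a value < 1 sharpens the distribution.
    probs = softmax([x / temperature for x in logits])
    # 2. Top-k: keep only the k most likely tokens, then renormalise among them.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    topk_mass = sum(probs[i] for i in ranked)
    renorm = {i: probs[i] / topk_mass for i in ranked}
    # 3. Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += renorm[i]
        if cumulative >= top_p:
            break
    # Renormalise over the surviving tokens.
    mass = sum(renorm[i] for i in kept)
    return {i: renorm[i] / mass for i in kept}


def sample(logits, rng, **kwargs):
    """Draw one token index from the filtered distribution."""
    dist = filter_probs(logits, **kwargs)
    tokens = list(dist)
    return rng.choices(tokens, weights=[dist[t] for t in tokens], k=1)[0]


if __name__ == "__main__":
    rng = random.Random(42)  # seed=42, as in the post
    toy_logits = [2.0, 1.0, 0.5, 0.0]  # hypothetical next-token logits
    print(filter_probs(toy_logits))
    print(sample(toy_logits, rng))
```

With `top_p=0.1`, only the single most likely token survives whenever it alone holds at least 10% of the (temperature-sharpened) probability mass, so `do_sample=true` behaves almost like greedy decoding here and the output is close to deterministic.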

#huggingface #llm #smollm #smollm2 #python #torch #transformers #ai #citation #quote #quotes #commit #merrychristmas #merryxmas #christmas #tech #programming

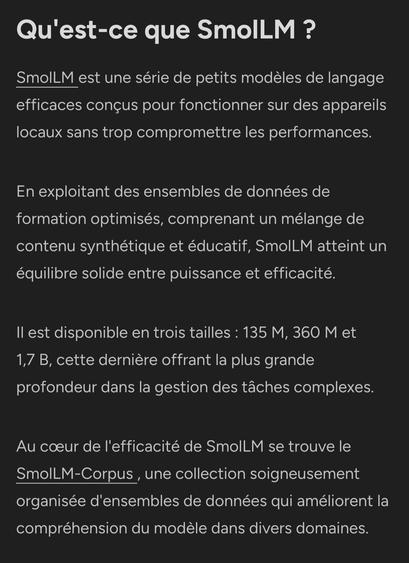

https://www.marktechpost.com/2024/10/31/smollm2-released-the-new-series-0-1b-0-3b-and-1-7b-of-small-language-models-for-on-device-applications-and-outperforms-meta-llama-3-2-1b/ #HuggingFace #SmolLM #LLM

SmolLM2 Released: The New Series (0.1B, 0.3B, and 1.7B) of Small Language Models for On-Device Applications and Outperforms Meta Llama 3.2 1B

In recent years, the surge in large language models (LLMs) has significantly transformed how we approach natural language processing tasks. However, these advancements are not without their drawbacks. The widespread use of massive LLMs like GPT-4 and Meta's LLaMA has revealed their limitations when it comes to resource efficiency. These models, despite their impressive capabilities, often demand substantial computational power and memory, making them unsuitable for many users, particularly those wanting to deploy models on devices like smartphones or edge devices with limited resources. Running these massive LLMs locally is an expensive task, both in terms of hardware requirements and