Results reporting for clinical trials led by medical universities and university hospitals in the Nordic countries was often missing or delayed

Objective To systematically evaluate timely reporting of clinical trial results at medical universities and university hospitals in the Nordic countries.

Study Design and Setting In this cross-sectional study, we included trials (regardless of intervention) registered in the EU Clinical Trials Registry and/or [ClinicalTrials.gov][1], completed in 2016-2019, and led by a university with a medical faculty or by a university hospital in Denmark, Finland, Iceland, Norway, or Sweden. We identified summary results posted in the trial registries and conducted systematic manual searches for results publications (e.g., journal articles, preprints). We present proportions with 95% confidence intervals (CI) and medians with interquartile range (IQR). Protocol: <https://osf.io/wua3r>

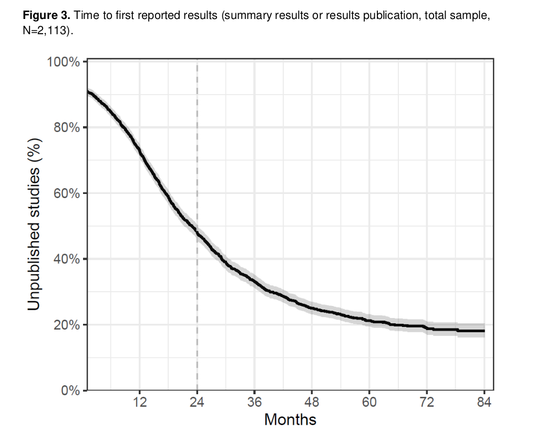

Results Among 2,113 included clinical trials, 1,638 (77.5%, 95% CI 75.9-79.2%) reported any results during our follow-up; 1,092 (51.7%, 95% CI 49.5-53.8%) reported any results within 2 years of the global completion date; and 42 (2.0%, 95% CI 1.5-2.7%) posted summary results in the registry within 1 year. Median time from global completion date to results reporting was 698 days (IQR 1,123). Of the 1,681 [ClinicalTrials.gov][1] registrations, 856 (50.9%) were prospective. Denmark contributed approximately half of all trials. Reporting performance varied widely between institutions.
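As an illustration of how a proportion and its 95% CI can be derived from the counts reported above, the following is a minimal sketch. The abstract does not state which interval method was used, so the Wilson score interval is an assumption here, and the function name `wilson_interval` is hypothetical rather than taken from the authors' code.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes: int, total: int, level: float = 0.95) -> tuple[float, float]:
    """Return the Wilson score interval for a binomial proportion.

    Assumption: Wilson score method; the abstract does not name the method used.
    """
    if total <= 0 or not 0 <= successes <= total:
        raise ValueError("need total > 0 and 0 <= successes <= total")
    # Two-sided critical value of the standard normal distribution (about 1.96 for 95%).
    z = NormalDist().inv_cdf(0.5 + level / 2)
    p = successes / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half_width, centre + half_width


if __name__ == "__main__":
    # Counts taken from the Results paragraph; labels are descriptive only.
    reported = {
        "any results during follow-up": (1638, 2113),
        "any results within 2 years": (1092, 2113),
        "registry summary results within 1 year": (42, 2113),
    }
    for label, (k, n) in reported.items():
        low, high = wilson_interval(k, n)
        print(f"{label}: {k / n:.1%} (95% CI {low:.1%}-{high:.1%})")
```

Under this assumption, the sketch gives bounds close to those reported. Small differences are possible if the authors used a different interval method or rounding rule.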

Conclusion Missing and delayed results reporting of academically led clinical trials is a pervasive problem in the Nordic countries. We relied on trial registry information, which can be incomplete. Institutions, funders, and policy makers need to support trial teams, ensure adherence to regulations, and secure trial reporting before results are permanently lost.

What is new?

### Competing Interest Statement

The authors have declared no competing interest.

### Clinical Protocols

<https://osf.io/wua3r>

### Funding Statement

CA reports funding for this project by the Knut and Alice Wallenberg Foundation through the Wallenberg Foundation Postdoctoral Scholarship Program at Stanford (KAW 2019.0561). The complete funding statement is available in the manuscript.

### Author Declarations

I confirm all relevant ethical guidelines have been followed, and any necessary IRB and/or ethics committee approvals have been obtained.

Yes

The details of the IRB/oversight body that provided approval or exemption for the research described are given below:

Data on clinical trials were collected from publicly available databases: <https://clinicaltrials.gov/> and <https://eudract.ema.europa.eu/>

I confirm that all necessary patient/participant consent has been obtained and the appropriate institutional forms have been archived, and that any patient/participant/sample identifiers included were not known to anyone (e.g., hospital staff, patients or participants themselves) outside the research group so cannot be used to identify individuals.

Yes

I understand that all clinical trials and any other prospective interventional studies must be registered with an ICMJE-approved registry, such as ClinicalTrials.gov. I confirm that any such study reported in the manuscript has been registered and the trial registration ID is provided (note: if posting a prospective study registered retrospectively, please provide a statement in the trial ID field explaining why the study was not registered in advance).

Yes

I have followed all appropriate research reporting guidelines, such as any relevant EQUATOR Network research reporting checklist(s) and other pertinent material, if applicable.

Yes

All data produced are available online at <https://zenodo.org/records/10091147>. All code used for data processing is available online at <https://github.com/cathrineaxfors/nordic-trial-reporting>.


[1]: http://ClinicalTrials.gov