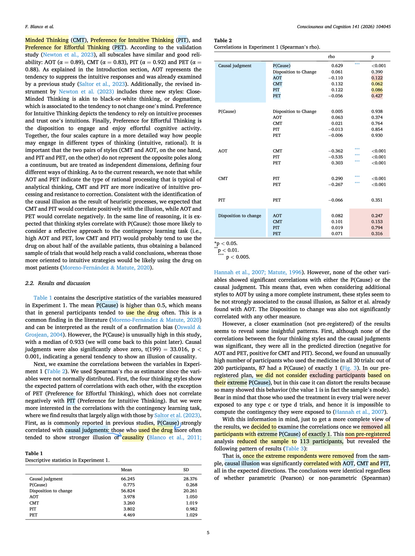

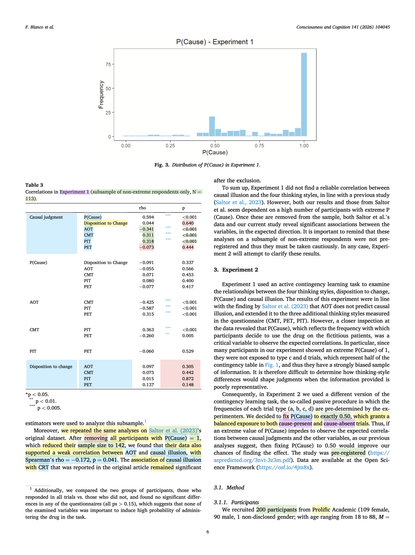

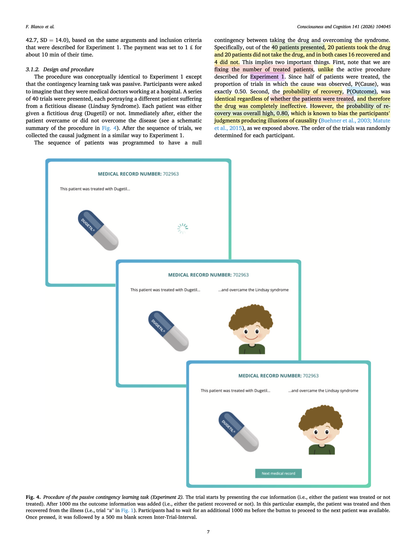

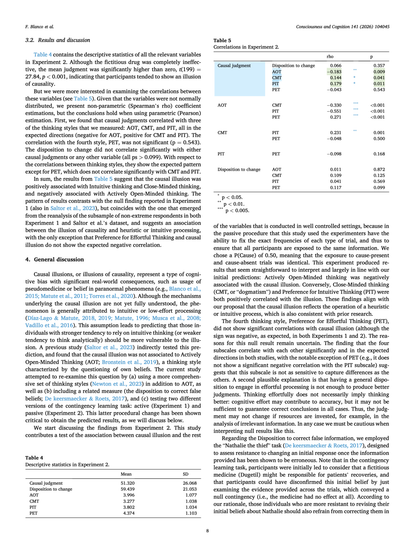

What correlates with #illusions of causality?

Despite seeing enough treatments and outcomes to calculate a #medicine's effect, people overestimated its effectiveness.

That illusion of causality correlated more with #reasoning preferences than with effort.
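For readers unfamiliar with how a treatment's effect can be calculated from observed cases, here is a minimal sketch. It assumes the standard contingency measure ΔP (recovery rate with the treatment minus recovery rate without it); the post does not say which measure the study used, and the counts below are hypothetical.

```python
def delta_p(treated_recovered: int, treated_not: int,
            untreated_recovered: int, untreated_not: int) -> float:
    """Contingency between treatment and recovery.

    Returns P(recovery | treatment) - P(recovery | no treatment).
    A value near 0 means the treatment makes no difference.
    """
    p_with = treated_recovered / (treated_recovered + treated_not)
    p_without = untreated_recovered / (untreated_recovered + untreated_not)
    return p_with - p_without


# Hypothetical counts: 75% of patients recover whether or not they take the medicine.
print(delta_p(60, 20, 15, 5))  # 0.0
```

Many recoveries among treated patients can make a medicine look effective even when, as in this example, the recovery rate is the same without it.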

https://doi.org/10.1016/j.concog.2026.104045

stevibe (@stevibe)

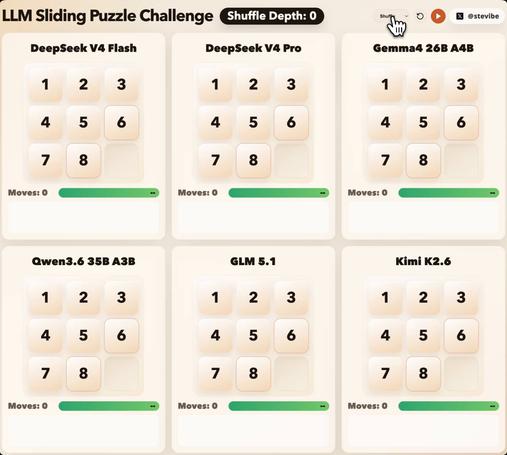

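This test gave several open-source LLMs a sliding puzzle and a tool-calling task to compare their long-horizon reasoning. Five of the six models failed and only one succeeded, which makes it an interesting evaluation case that exposes real reasoning and tool-use ability better than simple benchmarks do.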

https://x.com/stevibe/status/2054206771292692592

#opensource #llm #reasoning #toolcalling #benchmark

stevibe (@stevibe) on X

Six open-source LLMs. One sliding puzzle. A brutal test of long-horizon reasoning and tool calling.

Five of them broke. One didn't.

I gave each model a move_tile tool and a scrambled 3×3 board, then asked it to solve the puzzle through pure turn-by-turn reasoning. The deeper
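A minimal sketch of what such a setup could look like. The post names a `move_tile` tool and a 3×3 board but gives no signature, so the argument (the number of the tile to slide), the use of `0` for the blank, and the goal layout are all assumptions made for illustration.

```python
from typing import List, Tuple

Board = List[List[int]]  # 0 marks the blank square (an assumption)

GOAL: Board = [[1, 2, 3], [4, 5, 6], [7, 8, 0]]  # assumed goal layout


def find(board: Board, value: int) -> Tuple[int, int]:
    """Return the (row, column) of a value on the board."""
    for r, row in enumerate(board):
        for c, v in enumerate(row):
            if v == value:
                return r, c
    raise ValueError(f"{value} is not on the board")


def move_tile(board: Board, tile: int) -> Board:
    """Hypothetical tool: slide `tile` into the blank if the two are adjacent.

    Returns a new board; raises ValueError on an illegal move so the
    caller (here, the model) gets explicit feedback.
    """
    tr, tc = find(board, tile)
    br, bc = find(board, 0)
    if abs(tr - br) + abs(tc - bc) != 1:
        raise ValueError(f"tile {tile} is not next to the blank")
    new_board = [row[:] for row in board]
    new_board[br][bc], new_board[tr][tc] = tile, 0
    return new_board


def is_solved(board: Board) -> bool:
    return board == GOAL


start = [[1, 2, 3], [4, 5, 6], [7, 0, 8]]
print(is_solved(move_tile(start, 8)))  # True
```

Each call changes the state that the next call depends on, so one wrong move or one misremembered board position carries forward through every later turn.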

eyeling — a compact Notation3 (N3) reasoner in JavaScript.

The core idea: forward chaining is the outer loop; backward chaining is the proof engine used inside rule firing. Built-ins can participate in rule bodies, so consequences are computed until fixpoint.
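The control flow described above can be sketched in a few lines of Python. This is not eyeling's code or API: the tuple encoding of facts, the `?x` variable convention, the `neq` built-in, and the function names are all assumptions, and the goal-directed `prove` function only resolves goals against known facts and one built-in, as a stand-in for a full backward chainer.

```python
def is_var(term) -> bool:
    return isinstance(term, str) and term.startswith("?")


def match(pattern, fact, subst):
    """Match a pattern against a ground fact, extending the substitution."""
    if len(pattern) != len(fact):
        return None
    subst = dict(subst)
    for p, f in zip(pattern, fact):
        p = subst.get(p, p) if is_var(p) else p
        if is_var(p):
            subst[p] = f
        elif p != f:
            return None
    return subst


def prove(goals, facts, subst):
    """Goal-directed proof of a rule body; yields every satisfying substitution."""
    if not goals:
        yield subst
        return
    first, rest = goals[0], goals[1:]
    if first[0] == "neq":  # built-in: succeeds when both arguments are bound and differ
        a, b = (subst.get(t, t) for t in first[1:])
        if not is_var(a) and not is_var(b) and a != b:
            yield from prove(rest, facts, subst)
        return
    for fact in facts:
        extended = match(first, fact, subst)
        if extended is not None:
            yield from prove(rest, facts, extended)


def forward_chain(facts, rules):
    """Outer loop: fire every rule until no new fact appears (fixpoint)."""
    facts = set(facts)
    while True:
        new = set()
        for head, body in rules:
            for subst in prove(body, facts, {}):
                derived = tuple(subst.get(t, t) for t in head)
                if derived not in facts:
                    new.add(derived)
        if not new:
            return facts
        facts |= new


rules = [
    (("ancestor", "?x", "?y"), [("parent", "?x", "?y")]),
    (("ancestor", "?x", "?z"), [("parent", "?x", "?y"), ("ancestor", "?y", "?z")]),
    (("sibling", "?a", "?b"),
     [("parent", "?p", "?a"), ("parent", "?p", "?b"), ("neq", "?a", "?b")]),
]
facts = {("parent", "ann", "bob"), ("parent", "bob", "cy"), ("parent", "ann", "dee")}
closure = forward_chain(facts, rules)
print(("ancestor", "ann", "cy") in closure)  # True
```

New facts are collected per round and merged afterwards, so the prover never iterates over a set that is being modified.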

https://github.com/eyereasoner/eyeling

#Notation3 #N3 #SemanticWeb #LinkedData #JavaScript #Reasoning

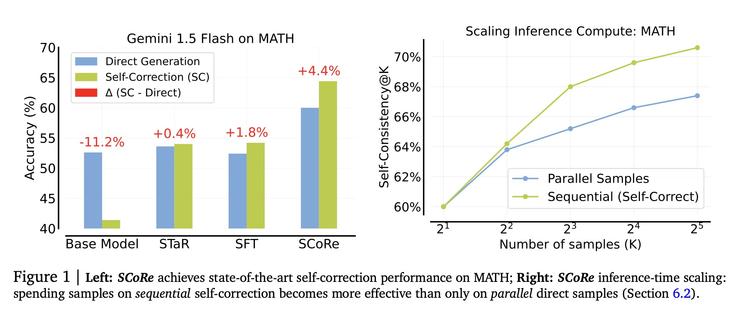

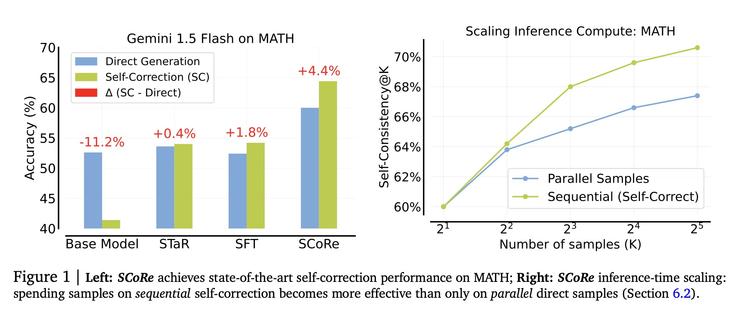

SCoRe is a two-stage on-policy RL recipe that teaches a language model to revise its own answers using only self-generated data. On Gemini 1.5 Flash and 1.0 Pro it gains 15.6 points on MATH and 9.1 on HumanEval over the base model. At matched inference budgets, sequential self-correction beats parallel sampling up to 32 samples.

https://benjaminhan.net/posts/20260512-score/?utm_source=mastodon&utm_medium=social

#Paper #LLMs #RL #Metacognition #Reasoning #ICLR #AI

Training Language Models to Self-Correct via Reinforcement Learning (SCoRe) – synesis

A two-stage on-policy RL recipe teaches Gemini 1.0 Pro and 1.5 Flash to revise their own answers, gaining 15.6 points on MATH and 9.1 points on HumanEval over the base model.

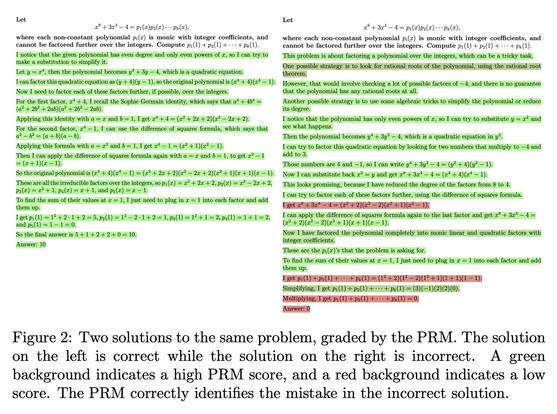

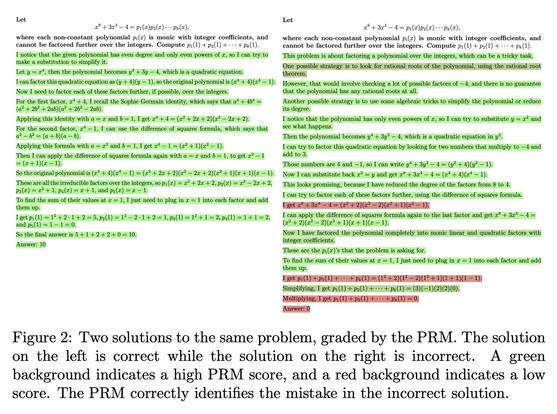

Let's Verify Step by Step compares process and outcome supervision on MATH. The process-reward model reaches 78.2% best-of-1860 vs 72.4% for outcome. But that gap narrows fast at small N, where most deployments actually live.
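To make the best-of-N comparison concrete, here is a minimal sketch of reranking with a process-reward model. It assumes a solution's score is the product of its per-step correctness probabilities, which is one common way to aggregate step scores; the post does not spell out the aggregation, and the candidates and probabilities below are hypothetical.

```python
import math
from typing import Callable, Dict, List

Candidate = Dict[str, object]


def prm_score(candidate: Candidate) -> float:
    """Process-style score: the probability that every step is correct,
    taken here as the product of the per-step probabilities (an assumption)."""
    return math.prod(candidate["step_probs"])


def best_of_n(candidates: List[Candidate],
              score: Callable[[Candidate], float]) -> Candidate:
    """Return the highest-scoring of N sampled solutions."""
    return max(candidates, key=score)


# Hypothetical samples for one problem, with made-up step probabilities.
samples = [
    {"answer": "42", "step_probs": [0.9, 0.9, 0.9]},    # product 0.729
    {"answer": "41", "step_probs": [0.99, 0.5, 0.99]},  # one doubtful step
]
print(best_of_n(samples, prm_score)["answer"])  # 42
```

Under this aggregation a single low-confidence step drags the whole solution down, which is the kind of signal an outcome-only score cannot provide.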

https://benjaminhan.net/posts/20260512-lets-verify-step-by-step/?utm_source=mastodon&utm_medium=social

#Paper #LLMs #Reasoning #Mathematics #ICLR #OpenAI #AI

Let’s Verify Step by Step – synesis

OpenAI compares outcome vs. process supervision for math reasoning and finds that step-level human feedback trains dramatically more reliable reward models on MATH.

RegexPSPACE: Regex LLM Benchmark

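RegexPSPACE proposes the first benchmark built on PSPACE-complete regular expression problems (equivalence decision and minimization) to evaluate the space-complexity limits of LLMs. It constructs a large-scale dataset of over one million regex instances and evaluates 6 LLMs and 5 LRMs, finding common failure patterns such as verbosity and repetition. The work provides a new evaluation framework for quantitatively analyzing the advanced reasoning abilities and spatial computational limits of LLMs and LRMs.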

https://arxiv.org/abs/2510.09227

#llm #benchmark #regex #reasoning #pspace

RegexPSPACE: A Benchmark for Evaluating LLM Reasoning on PSPACE-complete Regex Problems

Large language models (LLMs) show strong performance across natural language processing (NLP), mathematical reasoning, and programming, and recent large reasoning models (LRMs) further emphasize explicit reasoning. Yet their computational limits, particularly spatial complexity constrained by finite context windows, remain poorly understood. While recent works often focus on problems within the NP complexity class, we push the boundary by introducing a novel benchmark grounded in two PSPACE-complete regular expression (regex) problems: equivalence decision (RegexEQ) and minimization (RegexMin). PSPACE-complete problems serve as a more rigorous standard for assessing computational capacity, as their solutions require massive search space exploration. We perform a double-exponential space exploration to construct a labeled dataset of over a million regex instances with a sound filtering process to build the benchmark. We conduct extensive evaluations on 6 LLMs and 5 LRMs of varying scales, revealing common failure patterns such as verbosity and repetition. With its well-defined structure and quantitative evaluation metrics, this work presents the first empirical investigation into the spatial computational limitations of LLMs and LRMs, offering a new framework for evaluating their advanced reasoning capabilities. Our code is available at https://github.com/hyundong98/RegexPSPACE .
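For intuition about the equivalence task, here is a minimal sketch that searches for a string on which two regexes disagree. It is not the benchmark's code and not a decision procedure: it only tests strings up to a bounded length, so it can refute equivalence but never prove it. The alphabet, the length bound, and the use of Python's `re` syntax are assumptions.

```python
import re
from itertools import product
from typing import Optional


def find_counterexample(r1: str, r2: str,
                        alphabet: str = "ab",
                        max_len: int = 6) -> Optional[str]:
    """Return the first string (shortest first) that exactly one regex accepts,
    or None if the two agree on every string up to max_len.

    Note that the empty string "" is a valid counterexample, so callers
    should compare the result against None rather than test truthiness.
    """
    p1, p2 = re.compile(r1), re.compile(r2)
    for n in range(max_len + 1):
        for chars in product(alphabet, repeat=n):
            word = "".join(chars)
            if bool(p1.fullmatch(word)) != bool(p2.fullmatch(word)):
                return word
    return None


print(find_counterexample("(a|b)*", "(a*b*)*"))    # None
print(repr(find_counterexample("a*", "a+")))       # ''
```

The number of candidate strings grows exponentially with the length bound, which gives a rough feel for why an exact check demands so much search.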

Dario Cositore (@DarioCositore)

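Having evaluated models pre-release across more than 100 real business workflows, the author explains that newer models are not simply always worse and that performance changes differ by area. Opus 4.7 is said to have regressed somewhat on structured output while improving on multi-step tool chains, and Gemini 3.1 to have lost reasoning ability.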

https://x.com/DarioCositore/status/2053892255438536725

#ai #llm #modelevaluation #reasoning #tooluse

Dario Cositore (@DarioCositore) on X

@bindureddy I'm one of the people who evaluated these models pre-release across 100+ real business workflows before prod. The picture is way more nuanced than "Newer = worse". Opus 4.7 intentionally regressed on structured output but improved multi-step tool chains. Gemini 3.1 lost reasoning

khazzz1c (@Imkhazzz1c)

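The post presents the view that large language models show greater potential in comprehension than in generation, and asks how that can be put to use in real work. It points to a trend of connecting models' reasoning and comprehension abilities to practical applications.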

https://x.com/Imkhazzz1c/status/2053885556351012885

#llm #reasoning #ai #languagemodels

khazzz1c (@Imkhazzz1c) on X

Large language models are far more powerful than they themselves let on. Compared to their generative capabilities, their comprehension has already reached an entirely new dimension. How can we put this aspect of their ability to practical use?

#GrapheneOS is A GREAT #EXAMPLE of how we can #participate USING #MASTODON ! +1 ⭐

(NOT just about #Tech #coding as a "join us" but the #social aspects of reasoning / replying / answering fans HERE ON MASTODON !!)

So I #appreciate GrapheneOS and would appreciate more accounts answering *_#Mastodon complainers_* + copy-pasting them a template reply as human #reasoning + more #humanly.

Even as #interaction / custom #reply ?

☑️ See GrapheneOS acc

@GrapheneOS

https://mastodon.social/@GrapheneOS@grapheneos.social