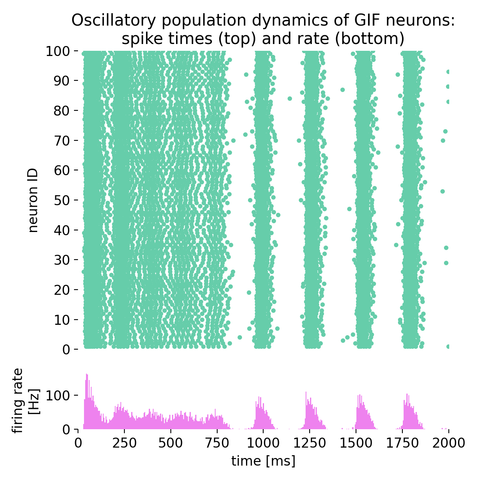

Oscillatory population dynamics of GIF neurons simulated with NEST

In this tutorial, we explore the oscillatory population dynamics of generalized integrate-and-fire (GIF) neurons simulated with NEST. The GIF neuron is a simplified, phenomenological model that nonetheless captures essential features of spiking neurons, including spike-frequency adaptation and a dynamic (moving) firing threshold. By simulating a population of such neurons, we can observe how they interact and give rise to oscillatory firing patterns.
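To make the single-neuron ingredients concrete, here is a minimal, stand-alone sketch of one GIF neuron in plain Python rather than a NEST script. It assumes the common escape-noise formulation: a leaky membrane potential `V`, a spike-triggered current `eta` that produces spike-frequency adaptation, and a moving threshold `gamma`, with a firing intensity that grows exponentially as `V` approaches the threshold. The function name and every parameter value are hypothetical and chosen only for illustration; they are not NEST defaults.

```python
import math
import random


def simulate_gif(I_e, t_sim=2000.0, dt=0.1, q_stc=20.0, tau_stc=100.0,
                 q_sfa=5.0, tau_sfa=200.0, seed=1):
    """Simulate one escape-noise GIF neuron driven by a constant current.

    Hypothetical, illustrative parameters. Units: pA, pF, nS, mV, ms.
    Returns the list of spike times in ms.
    """
    C_m, g_L, E_L = 80.0, 4.0, -70.0               # membrane time constant 20 ms
    V_reset, t_ref = -55.0, 4.0
    V_T_star, Delta_V, lambda_0 = -35.0, 0.5, 1.0  # lambda_0 in 1/ms
    rng = random.Random(seed)
    V, eta, gamma, ref_left = E_L, 0.0, 0.0, 0.0
    spikes = []
    for step in range(int(t_sim / dt)):
        # Spike-triggered current (eta) and moving threshold (gamma)
        # both relax exponentially back to zero between spikes.
        eta -= dt * eta / tau_stc
        gamma -= dt * gamma / tau_sfa
        if ref_left > 0.0:                         # absolute refractory period
            ref_left -= dt
            continue
        # Leaky integration: nS * mV = pA, and pA / pF = mV / ms.
        V += dt * (-g_L * (V - E_L) - eta + I_e) / C_m
        # Escape noise: the firing intensity grows exponentially as V
        # approaches the adapting threshold V_T_star + gamma.
        hazard = lambda_0 * math.exp((V - V_T_star - gamma) / Delta_V)
        if rng.random() < 1.0 - math.exp(-hazard * dt):
            spikes.append(step * dt)
            V = V_reset
            eta += q_stc       # strengthen the adaptation current
            gamma += q_sfa     # raise the threshold
            ref_left = t_ref
    return spikes


if __name__ == "__main__":
    plain = simulate_gif(200.0, q_stc=0.0, q_sfa=0.0)
    adapting = simulate_gif(200.0)
    print("spikes without adaptation:", len(plain))
    print("spikes with adaptation:   ", len(adapting))
```

Running it with adaptation switched off (`q_stc = q_sfa = 0`) and then with the defaults should show fewer spikes and interspike intervals that lengthen over time in the adapting case, which is the spike-frequency adaptation mentioned above.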

FitzHugh-Nagumo model

In the previous post, we analyzed the dynamics of the Van der Pol oscillator using phase plane analysis. In this post, we will see that this oscillator can be considered a special case of another dynamical system, the FitzHugh-Nagumo model, a simplified model of the action potential in neurons. With a few modifications of the Van der Pol equations, we obtain the model's ODE system. Using phase plane analysis again, we can then investigate how the dynamics of the system change under these modifications.
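For reference, one common form of the FitzHugh-Nagumo equations is shown below; the parametrization (with a = 0.7, b = 0.8, τ = 12.5 in the sketch) is an assumption and may differ from the one used in the post:

$$\dot v = v - \frac{v^3}{3} - w + I, \qquad \tau\,\dot w = v + a - b\,w.$$

With a = b = I = 0, differentiating the first equation and substituting the second gives $\ddot v - (1 - v^2)\,\dot v + v/\tau = 0$, which after substituting $s = t/\sqrt{\tau}$ is the Van der Pol equation with $\mu = \sqrt{\tau}$. The sketch below integrates the full system with a classic fourth-order Runge-Kutta step; the function names are hypothetical. For these parameters the fixed point is stable at I = 0 but unstable at I = 0.5, so the trajectory settles onto a limit cycle instead.

```python
def fhn_rhs(v, w, I, a=0.7, b=0.8, tau=12.5):
    """Right-hand side of the FitzHugh-Nagumo system (assumed parametrization)."""
    dv = v - v**3 / 3.0 - w + I
    dw = (v + a - b * w) / tau
    return dv, dw


def simulate_fhn(I, v0=-1.0, w0=1.0, dt=0.01, t_max=400.0):
    """Integrate with classic RK4 and return the sampled v trajectory."""
    v, w = v0, w0
    trace = [v]
    for _ in range(int(t_max / dt)):
        k1v, k1w = fhn_rhs(v, w, I)
        k2v, k2w = fhn_rhs(v + 0.5 * dt * k1v, w + 0.5 * dt * k1w, I)
        k3v, k3w = fhn_rhs(v + 0.5 * dt * k2v, w + 0.5 * dt * k2w, I)
        k4v, k4w = fhn_rhs(v + dt * k3v, w + dt * k3w, I)
        v += dt / 6.0 * (k1v + 2 * k2v + 2 * k3v + k4v)
        w += dt / 6.0 * (k1w + 2 * k2w + 2 * k3w + k4w)
        trace.append(v)
    return trace


def late_amplitude(trace, fraction=0.5):
    """Peak-to-peak amplitude of v over the last `fraction` of the run."""
    tail = trace[int(len(trace) * (1.0 - fraction)):]
    return max(tail) - min(tail)


if __name__ == "__main__":
    for I in (0.0, 0.5):
        amp = late_amplitude(simulate_fhn(I))
        print(f"I = {I}: late peak-to-peak amplitude of v = {amp:.3f}")
```

A near-zero late amplitude means the trajectory has come to rest at the fixed point; a large one means it is on the limit cycle. Plotting v against w instead would give the corresponding phase-plane picture.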

New #preprint by Aaron Spieler et al. on ELM (Expressive Leaky #Memory) neurons, a biologically inspired cortical #neuronmodel, showing promise in solving long-horizon tasks (complex computations) with remarkable efficiency.

📔 Spieler et al. (2023), "The ELM Neuron: an Efficient and Expressive Cortical Neuron Model Can Solve Long-Horizon Tasks", https://doi.org/10.48550/arXiv.2306.16922

#ComputationalNeuroscience #CompNeuro

The Expressive Leaky Memory Neuron: an Efficient and Expressive Phenomenological Neuron Model Can Solve Long-Horizon Tasks

Biological cortical neurons are remarkably sophisticated computational devices, temporally integrating their vast synaptic input over an intricate dendritic tree, subject to complex, nonlinearly interacting internal biological processes. A recent study proposed to characterize this complexity by fitting accurate surrogate models to replicate the input-output relationship of a detailed biophysical cortical pyramidal neuron model and discovered it needed temporal convolutional networks (TCN) with millions of parameters. Requiring these many parameters, however, could stem from a misalignment between the inductive biases of the TCN and cortical neuron's computations. In light of this, and to explore the computational implications of leaky memory units and nonlinear dendritic processing, we introduce the Expressive Leaky Memory (ELM) neuron model, a biologically inspired phenomenological model of a cortical neuron. Remarkably, by exploiting such slowly decaying memory-like hidden states and two-layered nonlinear integration of synaptic input, our ELM neuron can accurately match the aforementioned input-output relationship with under ten thousand trainable parameters. To further assess the computational ramifications of our neuron design, we evaluate it on various tasks with demanding temporal structures, including the Long Range Arena (LRA) datasets, as well as a novel neuromorphic dataset based on the Spiking Heidelberg Digits dataset (SHD-Adding). Leveraging a larger number of memory units with sufficiently long timescales, and correspondingly sophisticated synaptic integration, the ELM neuron displays substantial long-range processing capabilities, reliably outperforming the classic Transformer or Chrono-LSTM architectures on LRA, and even solving the Pathfinder-X task with over 70% accuracy (16k context length).
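The abstract names two ingredients: memory units whose hidden states decay slowly, each on its own timescale, and a two-layer nonlinear integration of synaptic input. The sketch below is a hypothetical, simplified illustration of how these two ingredients can combine in one update step. It is not the authors' exact formulation and may omit details of the published model (such as any input from the memory state back into the nonlinear layers); all function names, weights and timescales are made up for illustration.

```python
import math


def dense_tanh(weights, biases, inputs):
    """One fully connected layer followed by a tanh nonlinearity."""
    return [math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def leaky_memory_step(memory, syn_input, layers, taus, dt=1.0):
    """Advance every memory unit by one time step (hypothetical sketch).

    layers: [(W1, b1), (W2, b2)], a two-layer nonlinear integration of input.
    taus:   one timescale per memory unit; a larger tau means slower decay.
    """
    hidden = dense_tanh(*layers[0], syn_input)
    delta = dense_tanh(*layers[1], hidden)        # proposed memory update
    kappas = [math.exp(-dt / tau) for tau in taus]
    # Each unit keeps a fraction kappa of its old state and mixes in
    # (1 - kappa) of the new proposal, so long-tau units forget slowly.
    return [k * m + (1.0 - k) * d for k, m, d in zip(kappas, memory, delta)]


def readout(memory, w_out):
    """Linear readout of the neuron's output from its memory state."""
    return sum(w * m for w, m in zip(w_out, memory))


if __name__ == "__main__":
    layers = [([[1.0, -0.5], [0.3, 0.8]], [0.0, 0.0]),
              ([[1.0, 0.5], [-0.7, 1.2]], [0.0, 0.0])]
    taus = [10.0, 1000.0]                          # fast and slow memory unit
    m = leaky_memory_step([0.0, 0.0], [1.0, 1.0], layers, taus)  # input pulse
    for _ in range(100):                           # 100 steps of silence
        m = leaky_memory_step(m, [0.0, 0.0], layers, taus)
    print("memory after silence:", m, "output:", readout(m, [1.0, 1.0]))
```

After a brief input pulse, the unit with the long timescale still retains most of its state 100 steps later, while the fast unit has essentially forgotten it. This is the kind of slowly decaying, memory-like hidden state that the abstract credits for the long-range processing ability.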