Some cool work from the Allen Institute, where they trained a more understandable Mixture-of-Experts model. The experts' specializations are more tightly clustered and map more closely onto human-recognizable topics. A big benefit is that it looks like you can remove an expert from the model and performance degrades much more gracefully than in a standard MoE.

Arcee Trinity Large Technical Report

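Arcee Trinity Large is a sparse Mixture-of-Experts (MoE) model with 400 billion total parameters, of which 13 billion are activated per token. The report also covers Trinity Nano (6 billion parameters) and Trinity Mini (26 billion parameters). All three use a modern architecture, and Trinity Large adds a new MoE load-balancing strategy called SMEBU. The models were trained with the Muon optimizer and pre-trained on large token datasets (up to 17 trillion tokens). This technical report looks set to become an important reference for designing and training large-scale sparse models.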

https://arxiv.org/abs/2602.17004

#machinelearning #mixtureofexperts #largescale #transformers #optimization

We present the technical report for Arcee Trinity Large, a sparse Mixture-of-Experts model with 400B total parameters and 13B activated per token. Additionally, we report on Trinity Nano and Trinity Mini, with Trinity Nano having 6B total parameters with 1B activated per token, Trinity Mini having 26B total parameters with 3B activated per token. The models' modern architecture includes interleaved local and global attention, gated attention, depth-scaled sandwich norm, and sigmoid routing for Mixture-of-Experts. For Trinity Large, we also introduce a new MoE load balancing strategy titled Soft-clamped Momentum Expert Bias Updates (SMEBU). We train the models using the Muon optimizer. All three models completed training with zero loss spikes. Trinity Nano and Trinity Mini were pre-trained on 10 trillion tokens, and Trinity Large was pre-trained on 17 trillion tokens. The model checkpoints are available at https://huggingface.co/arcee-ai.
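
To make the "sigmoid routing" and expert-bias ideas in the abstract concrete, here is a minimal sketch of how a single token might be routed. It is an illustration under stated assumptions, not the report's implementation: the function name `route_token`, the `bias` argument, and the choice to normalize gate weights over the selected experts are all hypothetical, and the bias here is a generic load-balancing offset rather than SMEBU itself.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def route_token(logits, k, bias=None):
    """Pick k experts for one token using independent sigmoid scores.

    `bias` is a hypothetical per-expert offset that only affects which
    experts are selected; the gate weights use the unbiased scores.
    """
    if bias is None:
        bias = [0.0] * len(logits)
    scores = [sigmoid(z) for z in logits]
    # Rank experts by biased score, highest first.
    ranked = sorted(range(len(scores)),
                    key=lambda i: scores[i] + bias[i],
                    reverse=True)
    chosen = ranked[:k]
    # Normalize the unbiased scores of the chosen experts to sum to 1.
    total = sum(scores[i] for i in chosen)
    return {i: scores[i] / total for i in chosen}

# Without bias, the two highest-scoring experts are picked.
weights = route_token([2.0, -1.0, 0.5, 0.0], k=2)

# A large bias on expert 1 pulls it into the selected set.
weights_biased = route_token([2.0, -1.0, 0.5, 0.0], k=2,
                             bias=[0.0, 5.0, 0.0, 0.0])
```

In a scheme like this, a load balancer would nudge `bias` up for under-used experts and down for over-used ones between steps; how Trinity Large actually updates its biases is described in the report, not here.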

https://winbuzzer.com/2026/04/27/deepseek-v4-open-weights-launch-xcxwbn/

DeepSeek V4 Ships 1M Context, Open-Weights

#AI #DeepSeekV4 #DeepSeek #OpenSourceAI #AIModels #MixtureOfExperts #ChinaAI #GenerativeAI #EnterpriseAI

Design Arena (@Designarena)

withnucleusai's Nucleus-Image has been added to Design Arena. It is a parameter-efficient Mixture-of-Experts image model, notable for strong prompt understanding and precise handling of spatial layout.

https://x.com/Designarena/status/2044873593574818108

#imagegeneration #mixtureofexperts #aivisual #model #designarena

https://winbuzzer.com/2026/04/03/arcee-ai-399b-open-source-reasoning-model-apache-2-xcxwbn/

Arcee AI Launches 399B Top-Performing Open-Source Model at 96% Lower Cost

#AI #ArceeAI #OpenSourceAI #LLMs #AIModels #MixtureOfExperts

Gemma 4: Google's Most Capable Open Models Are Here — and They Run on Your Laptop

https://techlife.blog/posts/gemma-4-google-open-models

#Gemma4 #GoogleAI #OpenSourceAI #LLM #OnDeviceAI #MixtureOfExperts

Google's Gemma 4 family brings frontier-level AI reasoning to devices ranging from Android phones to developer workstations, under a fully open Apache 2.0 license.

Simon Willison (@simonw)

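Points out that even enormous Mixture-of-Experts models can run on Mac hardware without loading the whole model into RAM, by streaming a subset of the expert weights from SSD. Notes that Kimi 2.5 has 1T parameters but needs only its 32B active parameters, so it can run in 96GB of memory.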

Simon Willison (@simonw) on X

Turns out you can run enormous Mixture-of-Experts on Mac hardware without fitting the whole model in RAM by streaming a subset of expert weights from SSD for each generated token - and people keep finding ways to run bigger models. Kimi 2.5 is 1T, but only 32B active, so it fits in 96GB
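
The streaming idea can be sketched as a small cache that keeps only recently used experts in memory and reads the rest on demand. This is a rough illustration, not how any particular runtime does it: the `ExpertCache` class, the least-recently-used eviction policy, and the `fake_load` stand-in for an SSD read are all assumptions made for the example.

```python
from collections import OrderedDict

class ExpertCache:
    """Keep at most `capacity` experts in memory; load others on demand."""

    def __init__(self, load_fn, capacity):
        self.load_fn = load_fn    # hypothetical: reads one expert from disk
        self.capacity = capacity
        self.cache = OrderedDict()
        self.loads = 0            # number of disk reads, for inspection

    def get(self, expert_id):
        if expert_id in self.cache:
            # Cache hit: mark this expert as most recently used.
            self.cache.move_to_end(expert_id)
            return self.cache[expert_id]
        # Cache miss: read from disk, then evict the oldest entry if full.
        weights = self.load_fn(expert_id)
        self.loads += 1
        self.cache[expert_id] = weights
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)
        return weights

def fake_load(expert_id):
    # Stand-in for an SSD read; a real loader might memory-map a weight file.
    return [float(expert_id)] * 4

cache = ExpertCache(fake_load, capacity=2)
# Each tuple is the set of experts the router picked for one token.
for token_experts in [(0, 1), (1, 2), (0, 1)]:
    for expert_id in token_experts:
        cache.get(expert_id)
```

The fewer distinct experts consecutive tokens touch, the more reads are served from memory instead of SSD, which is why this approach depends on only a small active subset being needed per token.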

Nemotron 3 Super pushes the frontier with 40 M supervised & alignment samples, leveraging a Mamba‑Transformer backbone and Mixture‑of‑Experts scaling. The model shows stronger agent reasoning, RL‑based fine‑tuning, and tighter AI alignment. Dive into the details to see how this LLM reshapes open‑source AI. #Nemotron3 #MixtureOfExperts #AIAlignment #SupervisedFineTuning

🔗 https://aidailypost.com/news/nemotron-3-super-incorporates-40-million-supervised-alignment-samples

fly51fly (@fly51fly)

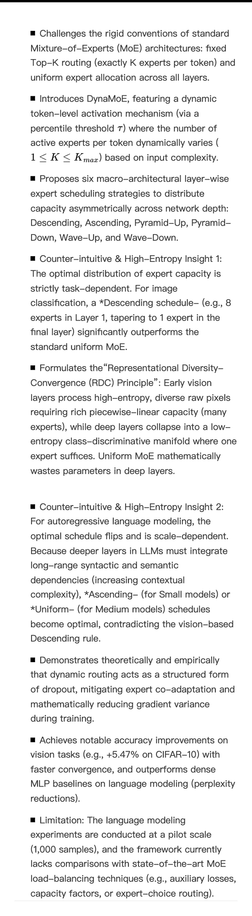

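'DynaMoE', a 2026 study by G. Gülmez of LG, proposes improving the efficiency and resource allocation of Mixture-of-Experts (MoE) neural networks by activating experts dynamically per token and applying layer-wise adaptive capacity. The paper is posted on arXiv and focuses on MoE activation patterns and compute-cost optimization.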

Gökdeniz Gülmez (@ActuallyIsaak)

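New research paper announcement: introduces DynaMoE, a Mixture-of-Experts framework. DynaMoE dynamically decides how many experts are activated for each token and proposes a structure in which the total number of experts can be scheduled as needed. It is architecture research aimed at improving the efficiency and scalability of MoE models.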

Gökdeniz Gülmez (@ActuallyIsaak) on X

Today I’m sharing a new research paper that explores a new idea in mixture of experts architecture called “DynaMoE”. DynaMoE is a Mixture-of-Experts framework where:
- the number of active experts per token is dynamic.
- the number of all experts can be scheduled differently
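
One way to picture a dynamic number of active experts per token is a cumulative-probability cutoff: keep adding the highest-probability experts until their combined router probability passes a threshold. The post does not say how DynaMoE makes this decision, so the rule below, the function name `dynamic_route`, and the `threshold` and `max_experts` parameters are all assumptions made for illustration.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def dynamic_route(logits, threshold=0.7, max_experts=None):
    """Select a variable number of experts for one token.

    Hypothetical rule: take experts in descending probability order
    until their cumulative probability reaches `threshold`.
    """
    probs = softmax(logits)
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if max_experts is not None:
        order = order[:max_experts]
    chosen, mass = [], 0.0
    for i in order:
        chosen.append(i)
        mass += probs[i]
        if mass >= threshold:
            break
    return chosen

# A token the router is confident about activates a single expert.
confident = dynamic_route([5.0, 0.0, 0.0, 0.0])

# A token with a flat router distribution activates several.
uncertain = dynamic_route([1.0, 1.0, 1.0, 1.0])
```

Under this rule, compute per token scales with router uncertainty, and `max_experts` gives a hard cap on the cost of any single token.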