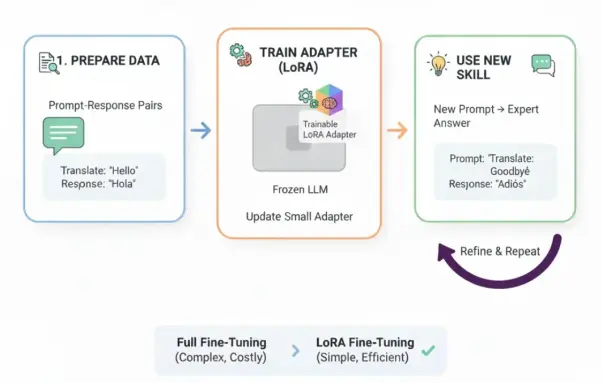

Nemotron 3 Super pushes the frontier with 40 million supervised and alignment samples, built on a Mamba‑Transformer backbone with Mixture‑of‑Experts scaling. The model features RL‑based fine‑tuning and shows stronger agent reasoning and tighter AI alignment. Dive into the details to see how this LLM reshapes open‑source AI. #Nemotron3 #MixtureOfExperts #AIAlignment #SupervisedFineTuning

🔗 https://aidailypost.com/news/nemotron-3-super-incorporates-40-million-supervised-alignment-samples