merve (@mervenoyann)

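Gemma 4 now ships with an MTP (multi-token prediction) drafter, and speculative decoding raises throughput by up to 3x in tokens/sec over the baseline. Outputs are identical, only faster, and it is supported from day one in transformers, MLX, and vLLM under the Apache 2.0 license.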
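To make the "identical outputs, only faster" claim concrete, here is a minimal sketch of greedy speculative decoding with toy stand-in models. Everything in it is an assumption for illustration: `target_model`, `draft_model`, and the token rules are hypothetical, the drafter is a separate toy function rather than an MTP head, and this is not the Gemma 4, transformers, MLX, or vLLM implementation. Real systems verify all drafted tokens in one batched forward pass and use a rejection-sampling rule when sampling; the greedy case shown here is the simplest variant.

```python
from typing import Callable, List, Tuple

# A "model" here is any function mapping a token context to the next token (greedy).
Model = Callable[[List[int]], int]


def target_model(ctx: List[int]) -> int:
    # Hypothetical large model: a deterministic toy rule over the context.
    return (sum(ctx) * 31 + len(ctx)) % 50


def draft_model(ctx: List[int]) -> int:
    # Hypothetical cheap drafter: agrees with the target except when the
    # context length is a multiple of 4, where it guesses wrong on purpose.
    guess = target_model(ctx)
    return guess if len(ctx) % 4 else (guess + 1) % 50


def greedy_decode(model: Model, prompt: List[int], n_new: int) -> List[int]:
    # Baseline: one model call per generated token.
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out


def speculative_decode(
    target: Model, draft: Model, prompt: List[int], n_new: int, k: int = 4
) -> Tuple[List[int], int]:
    out = list(prompt)
    goal = len(prompt) + n_new
    target_passes = 0  # counts verification rounds (one batched pass each in practice)
    while len(out) < goal:
        # 1) The drafter proposes up to k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(out))):
            proposal.append(draft(out + proposal))

        # 2) The target checks every proposed position in one round.
        target_passes += 1
        accepted: List[int] = []
        for tok in proposal:
            expected = target(out + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                # First mismatch: keep the target's token and drop the rest.
                accepted.append(expected)
                break
        else:
            # Every draft token matched: the same round yields one bonus token.
            if len(out) + len(accepted) < goal:
                accepted.append(target(out + accepted))
        out.extend(accepted)
    return out, target_passes


if __name__ == "__main__":
    prompt = [1, 2, 3]
    tokens, passes = speculative_decode(target_model, draft_model, prompt, n_new=20)
    print("same as greedy:", tokens == greedy_decode(target_model, prompt, 20))
    print("target rounds:", passes, "for 20 new tokens")
```

Because every kept token is either confirmed or supplied by the target, the result matches plain greedy decoding of the target. The speedup comes from needing fewer target rounds than generated tokens, and it depends on how often the drafter agrees with the target.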

Ivan Fioravanti ᯅ (@ivanfioravanti)

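The MLX HN Local Image project has been updated and can now be run with uvx, with no separate download required. A quick comparison between z-image-turbo and flux2-klein 4B/9B has also been added, which improves the local image generation and experimentation workflow. Running on a 64GB machine is recommended.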

MLX HN Local Image project updated. You can now run it using uvx without downloading anything and I added a quick compare between z-image-turbo and flux2-klein 4B and 9B. A video below from M5 Max using ghostty. Note: run this on a 64GB machine, we can try to squeeze this win

Ivan Fioravanti ᯅ (@ivanfioravanti)

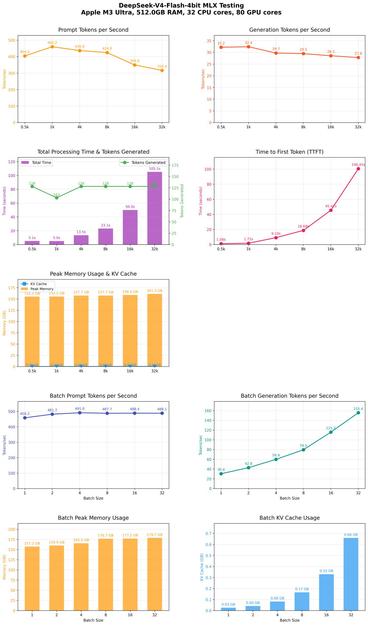

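Shares preliminary results from an MLX DeepSeek 4 Flash context benchmark at up to 32k context. The post checks on the status of the mlx-lm PR and notes that 4bit inference on Apple M3 hardware is fast and holds up across long contexts. A useful data point for real-world MLX/Apple performance.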

Another preliminary MLX DeepSeek 4 Flash context benchmark (up to 32k context), to check the status of the PR on mlx-lm now that @angeloskath is on it 💪 Things are progressing well as you can see below. 4bit is pretty fast and sustained across contexts. Hardware: Apple M3

MR BIZARRO (@AIBizarrothe)

Introduces Phosphene, a free local video+audio generator for Apple Silicon. It runs LTX 2.3 on MLX and was built with help from Claude. One-click install is available through Pinokio. There are currently some bugs, and PRs are welcome.

https://x.com/AIBizarrothe/status/2049858499824206114

#videogeneration #audiogeneration #applesilicon #mlx #opensource

Ivan Fioravanti ᯅ (@ivanfioravanti)

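Shares MLX context benchmark results comparing the M5 Max and M3 Ultra: the M3 Ultra leads at large batch sizes, but the M5 Max chip is impressive too. Also notes that decode and prefill speeds improved substantially thanks to a suggestion from @kernelpool.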

Ivan Fioravanti ᯅ (@ivanfioravanti)

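An update that the last bug in MLX Ling-2.6-Flash has been fixed, so it now works correctly with OpenCode and pi mono. Also shares a video of it running with 5bit quantization on an M5 Max, noting that pi mono is very fast while OpenCode is slower but gives more detailed results.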

田中義弘 | taziku CEO / AI × Creative (@taziku_co)

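A demo shows that with Gemma 4 27B and MLX, a Mac can handle chat, implementation, and even game generation locally with no internet connection. It shows a Chrome Dino-style jumping game being built offline, and mentions plans to open-source the project.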

Ammaar Reshi (@ammaar)

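Built an on-device vibe coding app based on Gemma 4 that runs on Mac with MLX. You can pick a model and chat or build with it without internet. Includes a demo of generating the Chrome Dino game offline, and the project has been open-sourced.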

Vibe code without internet 🚀 I built a vibe coding app powered by Gemma 4, running fully on-device on Mac with MLX. Pick your model, then chat or build with it. Watch it build the Chrome Dino game offline using Gemma 4 27b. Open sourcing all of it below👇

Simon Willison (@simonw)

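Introduces VibeVoice, Microsoft's MIT-licensed speech-to-text model. It works like Whisper with speaker diarization added, and the post includes hands-on notes from running the 4bit MLX conversion on an M5 MacBook, where about 1 hour of audio was transcribed in roughly 9 minutes.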

Microsoft's MIT licensed VibeVoice speech-to-text model (think Whisper with speaker diarization) is really good - my notes on running the 5.71GB 4bit MLX conversion on an M5 MacBook, using about 60GB of RAM at peak and transcribing 1hr of audio in ~9 mins https://t.co/lyu5rtXwPQ
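As a quick sanity check on the figures quoted above, the transcription speed can be expressed as a multiple of real time. This is only arithmetic on the approximate numbers in the post (1 hr of audio, ~9 mins of wall time). The helper name `real_time_factor` is made up for illustration.

```python
def real_time_factor(audio_seconds: float, wall_seconds: float) -> float:
    # How many seconds of audio are transcribed per second of wall-clock time.
    return audio_seconds / wall_seconds


audio_s = 60 * 60  # about 1 hour of audio, as quoted in the post
wall_s = 9 * 60    # about 9 minutes of processing, as quoted in the post

speedup = real_time_factor(audio_s, wall_s)
print(f"{speedup:.1f}x faster than real time")  # prints "6.7x faster than real time"
```

Since both inputs are rounded in the post, the result is a rough estimate rather than a benchmark.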
