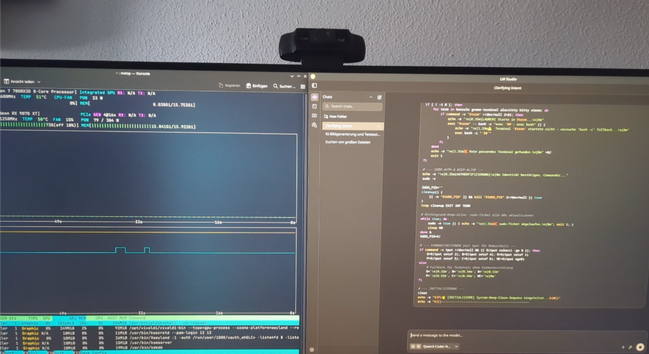

This is hilarious. There is a site that does a whole exposé on how #ClaudeCode works.

They should have called it CUCK: Claude Unpacked Code Knowledge.

Because that's what Anthropic is going to feel over the next few weeks.

#Programming #Programmers #Coding #Code #SoftwareDevelopment #WebDevelopment #WebDev #AppDevelopment #CLI #Linux #FOSS #OSS #OpenClaw #Claude #Codex #Llama #Ollama #LlamaCPP #LLM #LargeLanguageModel #AI #LMStudio