1. Invent an illness;

2. Put up a couple of preprints about it;

3. See AI companies' web crawlers swallow it hook, line, and sinker;

4. LLMs start telling users that the condition is real. WTF.

5. See published papers cite the preprints, likely because their authors used LLMs to write them. WTAF.

Since I use Proton Mail, I thought I would test their Lumo LLM chatbot to see whether it could help with building Sieve filters. It failed. From the beginning, it assumed tags and folders were different things, and insisted I use

```sieve
tag "Priority High";
```

to mark important and urgent stuff as high priority. Rookie mistake!

In case you didn't know, emails started out as basic text files that people sent to each other on the same mainframe. Email recipients can store those .eml files however they like. The Sieve language never differentiated between "folders", "tags", "categories", etc. All of those are really just labels that insist on behaving differently, and they all use the same `fileinto` action. For instance, if you want to tag a utility bill email as ToDo and also move it into the "Bills" folder:

```sieve
require "fileinto";

# :matches compares the whole address, so the leading * wildcard is needed
if address :matches "From" "*@utilitycompany.com" {
    fileinto "ToDo";   # label
    fileinto "Bills";  # folder
}
```

Further reading:

Great Sieve reference by Thomas Schmid: https://thsmi.github.io/sieve-reference/en/

Sieve features on Proton Mail: https://proton.me/support/sieve-advanced-custom-filters

Envelope vs Header in email: https://www.xeams.com/difference-envelope-header.htm
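Applied to the "Priority High" case the chatbot got wrong, the same idea might look like the minimal sketch below. It assumes a label named "Priority High" already exists in the account, and the subject-keyword condition is purely hypothetical; swap in whatever test actually identifies urgent mail for you.

```sieve
require "fileinto";

# Hypothetical condition: the subject contains "urgent".
# :contains uses the default i;ascii-casemap comparator, so "URGENT" matches too.
if header :contains "subject" "urgent" {
    # "Priority High" is just another label, applied with fileinto,
    # the same action used for "ToDo" and "Bills" in the utility-bill example.
    fileinto "Priority High";
}
```

No `tag` command is involved: the label is applied exactly the way a folder would be.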

#Email #Sieve #SieveProgramming #LLMFail


That's some next-level Bond villain shite!

Imagine having an ego that requires that kind of virtual massaging... by what amounts to a pre-programmed bot.

https://www.rollingstone.com/culture/culture-features/elon-musk-grok-lebron-james-1235469906/

#prayforhumanity #itisnotAI #LLM #LLMFAIL #LLMprogrammingFAIL #internet

Here’s a good example: https://www.fishiology.com/flag-cichlid-mesonauta-festivus/

Fishiology.com would like you to know that their author “Michelle” is “a long-time freshwater fish enthusiast with a passion for sharing knowledge about this fascinating hobby.”

In fact, she’s *so* passionate about it that she’s posted five or more long articles a day about it, 7 days a week, for *years*! Gosh, what passion! Why, one could say it’s almost *inhuman*! 🙄

And yet that’s one of the better sites, in that much of its advice and info seems pretty accurate… but the point is that it’s impossible to be sure unless you already know a fair amount about the subject.

In contrast, forum posts may be from people with a wide range of experiences and knowledge, but at least they’re *people* trying to provide genuine answers/anecdotes, usually in good faith.

The aquarium/fishkeeping web has been absolutely *destroyed* by LLMs. It was full of SEO spam previously, but now it’s practically impossible to find semi-trustworthy information.

Example: I poked around for info about tankmates that are reasonably safe to keep alongside South American cichlids such as flag cichlids. Most results were obviously LLM-generated, judging by the recognizable writing style.

The danger is that these pages were *mostly* correct, but also contained a lot of dubious info. LLMs are doing the exact opposite of what they purport to do: by displacing more reliable info with less reliable info that’s conveyed with faux confidence, they’re making the web essentially useless as an information source.

(Which is quite a feat, considering it was full of dubious info to begin with!)

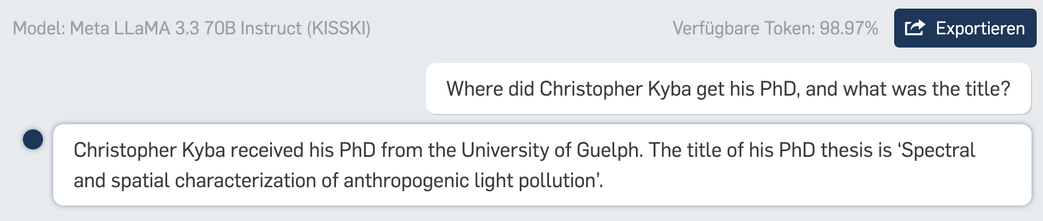

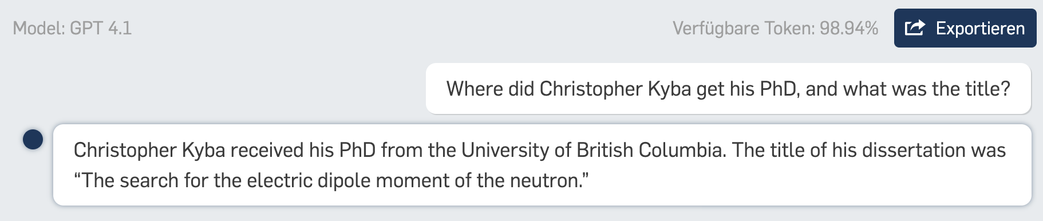

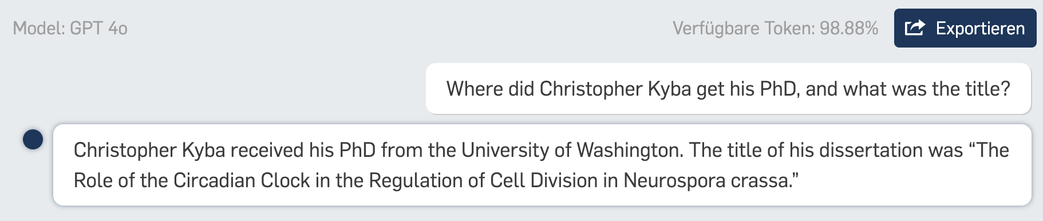

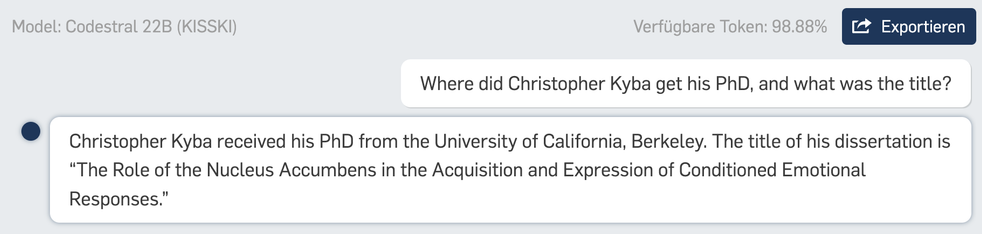

My daughter just came up with a great exercise: challenge your students to find the title of your PhD thesis using ONLY LLMs (no Google allowed). If any of them manage it, they get gummy bears 😃

I asked five different models, and got five different answers, all five of which were completely wrong 😂

#AI #ChatGPT #AISlop #LLM #LLMFail #Education #HigherEducation #AcademicChatter

🤖 Think your AI assistant can really reason? Apple’s puzzle tests say otherwise.

📉 See how “thinking” AIs collapse when logic gets real — and why we might be projecting intelligence where there is none.

Hashtags:

#AIReasoning #ChainOfThought #LLMFail #DeepTech