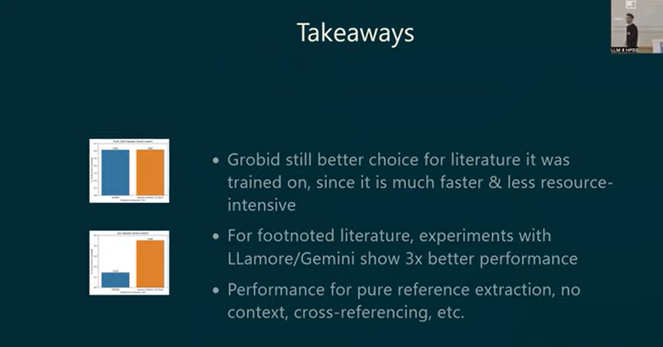

Here's an experiment with prompting #Claude to write a #llm - powered #TEI #annotation pipeline with #evaluation. I also prompted it to run an experiment to compare the performance of Gemini 2.0 Flash vs. Llama 3.3 70b (via saia.gwdg.de) when annotating <tei:bibl> elements.

https://github.com/cboulanger/tei-annotator

https://github.com/cboulanger/tei-annotator/blob/main/docs/batch-annotation-experiment.md

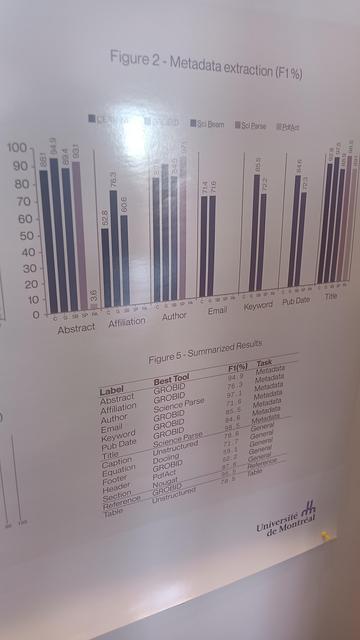

Very early stage, but promising results so far. Don't want to reinvent the wheel though - what other projects (besides #Grobid) engage in LLM/ML-based TEI annotation?