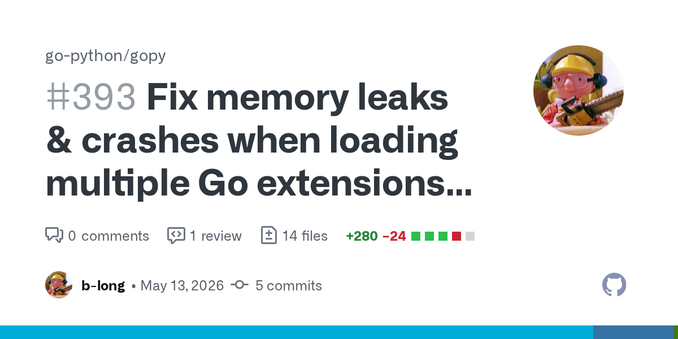

Fix memory leaks & crashes when loading multiple Go extensions in one Python process by b-long · Pull Request #393 · go-python/gopy

Relates-to: #392
Fixes: #385
Fixes: #370

I took Scusemua's two commits in #361 as a base and built a broader set of fixes on top, covering memory leaks, GC coordination, and shared library con...