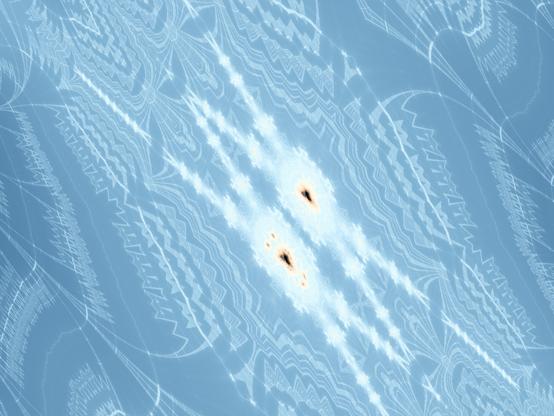

Implemented a double helix #DistanceEstimate based on an idea from #FragM's `Knot.frag` (not knighty's, the other one, based on forum posts by DarkBeam).

Not sure how to #fractal-ize it: I wanted to turn it into a #helix of helices of helices, etc. I'm also not sure how to make it a single helix (I only managed to colour the two halves individually...).

I think each strand is an Archimedean Serpentine, but I'm not 100% sure of the terminology.

```
#version 330 compatibility
#define providesColor
#include "MathUtils.frag"
#include "Complex.frag"
#include "DE-Raytracer.frag"

#group Helix
// Pitch: distance along z covered by one full turn
uniform float HelixD; slider[0.0,2.0,10.0]
// Radius of the helix (strand centre to the z axis)
uniform float HelixR; slider[0.0,1.0,10.0]
// Radius of each strand's tube
uniform float Helixr; slider[0.0,0.5,10.0]
uniform float time;

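float DE(vec3 q)
{
  // Animate by scrolling along the helix axis.
  q.z += HelixD * time;
  // Twist angle in [-PI, PI), repeating every HelixD along z.
  float t = (mod(q.z / HelixD + 0.5, 1.0) - 0.5) * 2.0 * PI;
  // Rotate the xy cross-section by the twist angle, so both strands
  // sit at fixed positions (x = +HelixR and x = -HelixR).
  q.xy *= mat2(cos(t), sin(t), -sin(t), cos(t));
  // Work in a single cross-section plane.
  q.z = 0.0;
  // Pitch angle of the strands: tan(s) = (HelixD / (2 PI)) / HelixR.
  float s = atan(HelixD / (2.0 * PI), HelixR);
  // Tilt by the pitch angle, then take the distance to a torus
  // (major radius HelixR, tube radius Helixr).
  q.yz *= mat2(cos(s), -sin(s), sin(s), cos(s));
  return length(vec2(length(q.xy) - HelixR, q.z)) - Helixr;
}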

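vec3 baseColor(vec3 q, vec3 n)
{
  // Same scroll and untwist as in DE, so the sign of q.x
  // tells the two strands apart.
  q.z += HelixD * time;
  float t = (mod(q.z / HelixD + 0.5, 1.0) - 0.5) * 2.0 * PI;
  q.xy *= mat2(cos(t), sin(t), -sin(t), cos(t));
  // Normal-based colour, flipped between the two strands.
  return vec3(0.5) + 0.5 * sign(q.x) * n;
}
```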
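
Reading the code, the tilt angle `s` in `DE` looks like the pitch angle of the strands. A rough sketch, assuming each strand's centreline is a circular helix of radius $R$ = `HelixR` and pitch $D$ = `HelixD` (the symbols $R$, $D$, $\theta$ and $\mathbf{c}$ are introduced here for illustration only and do not appear in the shader):

$$
\mathbf{c}(\theta) = \left(R\cos\theta,\; R\sin\theta,\; \frac{D}{2\pi}\,\theta\right),
\qquad
\mathbf{c}'(\theta) = \left(-R\sin\theta,\; R\cos\theta,\; \frac{D}{2\pi}\right)
$$

$$
\tan s = \frac{D/(2\pi)}{R}
\quad\Longrightarrow\quad
s = \operatorname{atan}\!\left(\frac{D}{2\pi},\, R\right)
$$

The tangent moves a horizontal distance $R$ and a vertical distance $D/(2\pi)$ per unit $\theta$, which matches the two arguments passed to `atan` in the shader.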