🚀 Excited to share my latest paper!

What is a diffusion model actually doing when it turns noise into a photograph? The usual story involves score matching and stochastic differential equations, but there is a deeper geometric explanation hiding underneath.

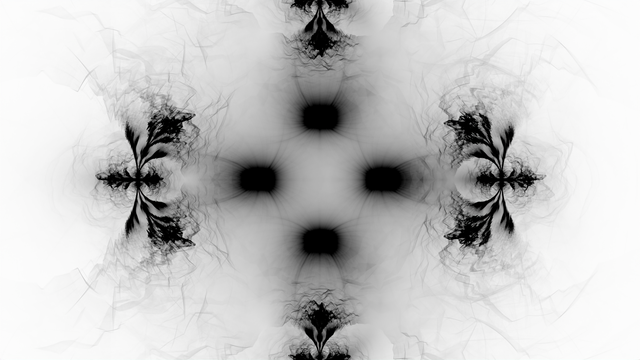

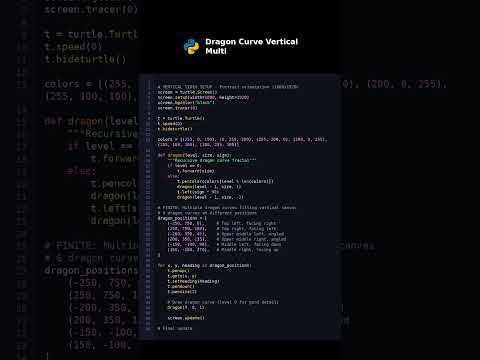

Turns out denoising diffusion is a contractive fractal system, where the noise schedule is literally sculpting an attractor toward your data distribution 🌀 Michael Barnsley introduced these systems, known as partitioned iterated function systems, in the 1980s for fractal image compression, the technique later used in the Microsoft Encarta encyclopedia.

I showed in particular that:

🔹 The noise schedule of denoising diffusion controls the Hausdorff/Kaplan-Yorke dimension of that attractor;

🔹 The fractal geometry framework explains the well-known two-phase structure of the reverse chain as an expansion phase and a contraction phase, separated by a crossover;

🔹 The theory gives principled, geometry-driven guidance for schedule design and immediately explains why several popular heuristics, such as the cosine offset, work.

A math dive with huge practical payoff!

📄 Full paper: https://arxiv.org/abs/2603.13069

#GenAI #ML #image #DenoisingDiffusion #FractalGeometry

Would love to hear thoughts from anyone working on generative models, dynamical systems or geometric deep learning! 👇