archive DataHoarders


A nicely presented DataHoarders archive of the Epstein files has been created.

The archive is accessible online; see the links under Sources.

Even if the content is of little interest to you, the way the frontend and backend are built is quite interesting. I am interested in both backend and frontend programming and in networking, so I think this is a treasure trove from both perspectives.

YMMV

When you glance through the Wikipedia page on Jeffrey Epstein you will find interesting tidbits about his nature, rise, and fall. Read it several times and you will know more than you may want to about this man, whom different forces enabled to flourish in his behaviour. Go in with a neutral mind and read the sources; go there if you want to know more.

The Wikipedia article on Epstein is LONG and the amount of data is massive. Don't expect to even skim it in just a few minutes.

The article cites 305 references.

When you go to this DataHoarders media archive you will find a pleasant presentation of the visual and printed data as released by the US DOJ.

Quotes from the archive creators:

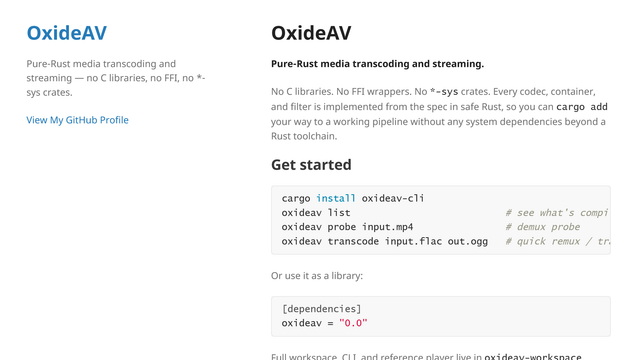

Hey! We are two college students and we just want to share the technical part of our project because you might appreciate it. The DOJ released the Epstein files and we decided to host the entire thing ourselves and build a proper interface on top of it. Here is what the archive actually looks like.

354GB total. 160GB of raw data from the original files and 194GB of our own processed data. Around 600,000 PDF files which actually contain roughly 1,400,000 individual pages inside them since many PDFs bundle multiple pages together when you scroll down. All 3,200 videos have been converted to HLS with adaptive bitrate streaming so quality adjusts automatically to your connection the same way Netflix does it.

For the videos we ran a full audio extraction pipeline, converting video to audio MP4 and then audio to text, generating SRT subtitle files for every single video that contains spoken content. This means you can search for a word that was spoken in any video and find the exact moment it was said.

For the PDFs we converted every single page to PNG and ran OCR across all 1,400,000 pages. We then used Go to run AI agents that analyze and summarize the OCR output across the documents. The search engine works through tags associated to each specific file, built on top of all that processed data.

The frontend is React Native, infrastructure runs through Cloudflare.

We also added the possibility for a user to make an anonymous account to like, add a comment and reply to others or make your own investigation post on our platform.

We are not stopping here. There is still a lot to do and we are pushing updates constantly.


Naturally, ffmpeg and curl are a crucial tool combo for making all this conversion, fetching, and serving work smoothly, but I don't need to tell you that. Many more tools are used; go in, read, and learn!
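To get a feel for the spoken-word search the creators describe, here is a small sketch. It is not their code. It is a minimal Python illustration, assuming the subtitles are plain SRT files, of parsing the cues and finding the moments at which a word is spoken. The names `Cue`, `parse_srt`, `find_spoken` and the sample subtitle text are hypothetical, made up for this example.

```python
import re
from dataclasses import dataclass


@dataclass
class Cue:
    start: float  # cue start, in seconds
    end: float    # cue end, in seconds
    text: str     # subtitle text, joined onto one line


# SRT timestamps look like 00:01:02,250 (some tools write a dot instead of a comma).
_TIME = re.compile(r"(\d+):(\d{2}):(\d{2})[,.](\d{3})")


def _to_seconds(stamp: str) -> float:
    match = _TIME.fullmatch(stamp.strip())
    if match is None:
        raise ValueError(f"not an SRT timestamp: {stamp!r}")
    hours, minutes, seconds, millis = map(int, match.groups())
    return hours * 3600 + minutes * 60 + seconds + millis / 1000


def parse_srt(srt_text: str) -> list[Cue]:
    """Parse SRT text into cues; blocks without a timing line are skipped."""
    cues = []
    normalized = srt_text.replace("\r\n", "\n").strip()
    for block in re.split(r"\n\s*\n", normalized):  # cues are separated by blank lines
        lines = block.split("\n")
        for i, line in enumerate(lines):
            if "-->" in line:
                start, end = line.split("-->", 1)
                # Take only the first token after the arrow, in case extra data follows.
                end_stamp = end.split()[0]
                text = " ".join(part.strip() for part in lines[i + 1:])
                cues.append(Cue(_to_seconds(start), _to_seconds(end_stamp), text))
                break
    return cues


def find_spoken(cues: list[Cue], word: str) -> list[Cue]:
    """Return the cues whose text contains `word` as a whole word, ignoring case."""
    pattern = re.compile(rf"\b{re.escape(word)}\b", re.IGNORECASE)
    return [cue for cue in cues if pattern.search(cue.text)]


# Hypothetical sample subtitles, only for demonstration.
SAMPLE_SRT = """1
00:00:01,000 --> 00:00:03,500
Good morning, everyone.

2
00:01:02,250 --> 00:01:05,000
We met again the next morning.
"""

if __name__ == "__main__":
    for cue in find_spoken(parse_srt(SAMPLE_SRT), "morning"):
        print(f"{cue.start:8.2f}s  {cue.text}")
```

A real deployment would run this kind of lookup against an index rather than scanning every file per query, but the idea is the same: each hit carries a start time, so a player can jump straight to that moment.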

Sources:

https://exposingepstein.com/home

https://en.wikipedia.org/wiki/Jeffrey_Epstein

https://www.reddit.com/r/DataHoarder/comments/1shx4po/we_scraped_processed_and_now_host_the_entire_doj/

#programming #database #video #HLS #pdf #recoding #streaming #json #backend #frontend #react #srt #subtitles #FFMPEG