➤ When an iOS Engineer Decides to Go Deer Hunting: A Scoring-Automation Journey from OpenCV to YOLOv8

✤ https://drobinin.com/posts/my-phone-replaced-a-brass-plug/

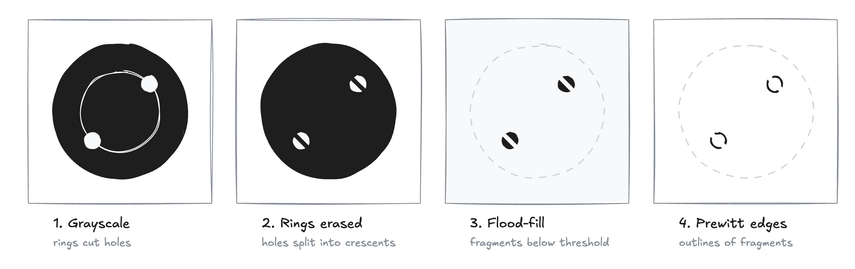

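To get rid of the tedious manual scoring process in shooting practice, the author drew on his background as an iOS engineer to build an automated scoring system. He first tried Apple's native Vision framework, but found that bullet holes are a "negative space" feature that standard object detectors struggle to recognize. He then turned to a 2012 academic paper, combining OpenCV edge detection with the Hough transform to fit circles, and used a radial-intensity profile to locate the target's scoring rings precisely. Finally, he added a newer YOLOv8 model to handle overlapping bullet holes and hand-made markings, turning the traditional brass-gauge scoring process into an efficient digital workflow.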
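The radial-intensity step can be illustrated with a minimal pure-Python sketch. It is not the author's code: it assumes the target center is already known (for example from a circle fit such as OpenCV's `cv2.HoughCircles`), that the image is grayscale as a list of rows, and that ring lines are darker than the paper around them. `radial_profile`, `find_ring_radii`, and `make_synthetic_target` are hypothetical helper names. The profile averages intensity over each integer-radius annulus around the center, and ring lines show up as dark local minima in that profile.

```python
import math


def radial_profile(image, cx, cy, max_r):
    """Mean intensity of each integer-radius annulus around (cx, cy)."""
    sums = [0.0] * (max_r + 1)
    counts = [0] * (max_r + 1)
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            # Bin each pixel by its rounded distance from the assumed center.
            r = int(round(math.hypot(x - cx, y - cy)))
            if r <= max_r:
                sums[r] += value
                counts[r] += 1
    return [s / c if c else float("nan") for s, c in zip(sums, counts)]


def find_ring_radii(profile, dark_threshold):
    """Radii where the profile is a local minimum darker than dark_threshold.

    Assumes dark ring lines on lighter paper (hypothetical simplification).
    """
    rings = []
    for r in range(1, len(profile) - 1):
        p = profile[r]
        if p < dark_threshold and p <= profile[r - 1] and p < profile[r + 1]:
            rings.append(r)
    return rings


def make_synthetic_target(size, ring_radii):
    """Hypothetical test image: white paper (255) with black rings about 2 px wide."""
    c = size // 2
    return [
        [
            0 if any(abs(math.hypot(x - c, y - c) - R) < 1.0 for R in ring_radii) else 255
            for x in range(size)
        ]
        for y in range(size)
    ]


if __name__ == "__main__":
    img = make_synthetic_target(101, (20, 40))
    profile = radial_profile(img, 50, 50, max_r=48)
    print(find_ring_radii(profile, dark_threshold=128))  # → [20, 40]
```

A real pipeline would likely vectorize this with NumPy, smooth the profile before searching for minima, and handle targets whose inner rings are printed as light lines on a dark aiming mark; those refinements are left out to keep the idea visible.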

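+ This post neatly shows how a dry academic paper can be turned into a tool that solves a real-world problem. The reflection on recognizing "negative space" is especially insightful, since that is exactly the pain point of many computer-vision projects.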

#iOSDevelopment #ComputerVision #MachineLearning #CoreML #ShootingSports