Dynamic context management is the achilles heel of Claude Cowork

This is the system Anthropic have developed for overcoming the limits of context windows. Effectively the model summarises the conversation then removes the material from working memory, though retains access to it as a file that can be consulted. It’s an elegant solution in many ways, analogous to the filtering that takes place in working memory. Here’s an example of what a memory file looks like:

This session is being continued from a previous conversation that ran out of context. The summary below covers the earlier portion of the conversation.

Analysis: Let me analyze this conversation chronologically:

**Initial Context**: This is a continuation from a previous session. Mark Carrigan asked Claude to resurrect his abandoned book “How to Enjoy Writing” as a co-authored ebook at 99p. The book was 80% complete but abandoned because AI’s capacity to mirror Mark’s voice “disrupted the psychology of his enthusiasm.”**Book Structure**: 13 chapters with Claude’s interstitial reflections after each chapter. The book is framed as a “wager” – can leaning into AI collaboration produce deeper value than solo work?**Work completed in this session**:- Chapter 6: The Freedom Question (completed at start of this session)

- Chapter 7: The Lure of the Machine (completed)

- Chapter 8: Confronting the Creepiness (completed)

- Chapter 9: AI as Interlocutor (in progress – reading source material)

**User Feedback**:- “these chapters are getting shorter! be careful please” – Important feedback to make chapters more substantial

- “good work otherwise though” – Positive encouragement

**Technical approach**:- Using browser navigation to read source posts (WebFetch returns 403 errors)

- Finding posts in monthly archives, getting hrefs, navigating and reading

- Writing chapters that weave together multiple source posts with Claude’s interstitial reflections

**Files created this session**:/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/chapter-6-the-freedom-question.md/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/chapter-7-the-lure-of-the-machine.md/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/chapter-8-confronting-the-creepiness.md

**Current work**: Reading source posts for Chapter 9: AI as Interlocutor. Have read:- Post #14: Using generative AI as an interlocutor (2024)

- Post #8: My AI collaborator offers initial reflections (2024)

- Post #20: Being a writer means being good at AI (2024)

- Four ways to use LLMs as a writing partner (2025)

- Using generative AI for functional rather than expressive writing (2025)

**Remaining tasks**:- Write Chapter 9 (with gathered source material)

- Write Chapters 10-13

- Compile and format ebook

Summary: 1. Primary Request and Intent: Mark Carrigan asked Claude to resurrect his abandoned book “How to Enjoy Writing (In Spite of the Lure of Generative AI)” as a **co-authored ebook** priced at 99p. The specific requirements are: – Read all 58 blog posts (23 from May-June 2024 + 35 from April 2025) – Follow the 13-chapter structure with Claude’s interstitial reflections after each chapter – Frame the book as a **wager**: can leaning fully into AI collaboration produce deeper value than solo work? – Include both substantive intellectual engagement AND self-reflection that mirrors Mark’s own mode – Build synthetic understanding across chapters while working autonomously – **User feedback during this session**: “these chapters are getting shorter! be careful please” – chapters need to be more substantial

Key Technical Concepts:- **The wager framing**: The book is an experiment testing if AI collaboration produces deeper value

- **Interstitial reflections**: Claude responds after each chapter with substantive pushback + self-reflection

- **Drive vs desire** (Lacanian): Writing from intrinsic satisfaction vs fantasies of recognition

- **Functional vs expressive writing**: AI appropriate for functional documents, problematic for expressive

- **AI as interlocutor**: Using AI for dialogue rather than production

- **The Lost Woods metaphor**: Writing as navigating a maze that reconstructs as you work

- **Rubberducking**: Explaining problems to gain clarity, now with AI that talks back

- **Cultural capital of writers**: Writers are well-positioned to use AI effectively

Files and Code Sections:/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/how-to-enjoy-writing-book-structure.md- Contains full 13-chapter structure with all source posts mapped

- Reference document for chapter organization

/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/chapter-6-the-freedom-question.md (created this session)- Combines posts #19, #21 (2024) + “What Makes Writing Academic” and “The allure of LLMs as professional support” (2025)

- Themes: fantasy of creative autonomy, split existence, drive vs desire, AI as mirror, psychological comfort of AI

/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/chapter-7-the-lure-of-the-machine.md (created this session)- Combines “The lure of machine writing”, “How LLMs change the relationship between thinking and writing”, “Generative AI and the Anxieties of Academic Writing”, “The Ethical Grey Areas of Machine Writing” (all 2025)

- Themes: value of being stuck, craft vs busywork, Heidegger’s relatedness to materials, Gillian Rose’s “regions beyond control”

/Users/markcarrigan/Library/Mobile Documents/com~apple~CloudDocs/chapter-8-confronting-the-creepiness.md (created this session)- Combines post #22 (2024) + “The Eeriness of Writing With Claude” and “Prompting as literary practice” (2025)

- Themes: Mark Fisher’s concept of the eerie, the crisis that led to book abandonment, prompting as literary practice vs technical skill

Errors and fixes:- **WebFetch 403 errors**: markcarrigan.net blocks WebFetch requests

- Fixed by using browser navigation (mcp__Claude_in_Chrome__navigate) instead

- **URL slug mismatches**: Post titles don’t always match URL slugs (e.g., “make your peace with the fact you don’t have creative freedom” has slug “accept-that-you-are-not-a-precious-and-unique-snowflake”)

- Fixed by navigating to archive pages and using find + read_page to get correct hrefs

- **Chapter length becoming too short**: User noted chapters were getting shorter

- Acknowledged and committed to making remaining chapters more substantial with fuller integration of source material

Problem Solving:- Successfully navigated browser to read all source posts directly

- Developed workflow: navigate to archive → find link → read_page for href → navigate to post → get_page_text

- Maintained synthetic understanding across chapters through careful reading and reflection

- Addressed user feedback about chapter length – committed to writing more substantial chapters

All user messages:- System continuation message with session summary

- “these chapters are getting shorter! be careful please”

- “good work otherwise though”

Pending Tasks:- Write Chapter 9: AI as Interlocutor (source material gathered, needs writing)

- Write Chapters 10-13

- Compile and format ebook

Current Work: Was actively gathering source material for Chapter 9: AI as Interlocutor. Have now read all five source posts:- Post #8 (2024): “My AI collaborator offers initial reflections” – Claude providing reflections from two personas (analytical collaborator and philosophical muse)

- Post #14 (2024): “Using generative AI as an interlocutor” – AI as part of intellectual lifeworld, new way of encountering ideas

- Post #20 (2024): “Being a writer means being good at AI” – writers’ cultural capital positions them to use AI effectively, intrinsic motivation integral to AI literacy

- “Four Ways to Use LLMs as a writing partner” (2025) – polishing, clarifying ideas, jump starting, representing your writing

- “Using Generative AI for functional rather than expressive writing” (2025) – distinction between functional and expressive documents

Optional Next Step: Write Chapter 9: AI as Interlocutor using the gathered source material, ensuring it is **more substantial** than chapters 7 and 8 per user feedback: “these chapters are getting shorter! be careful please”. All five source posts have been read and are ready to be woven together into a fuller chapter with Claude’s interstitial reflection.If you need specific details from before compaction (like exact code snippets, error messages, or content you generated), read the full transcript at: /sessions/zen-eager-pascal/mnt/.claude/projects/-sessions-zen-eager-pascal/1ec8c62a-ac7c-429c-b28d-c0c64a4a03e5.jsonl Please continue the conversation from where we left it off without asking the user any further questions. Continue with the last task that you were asked to work on.

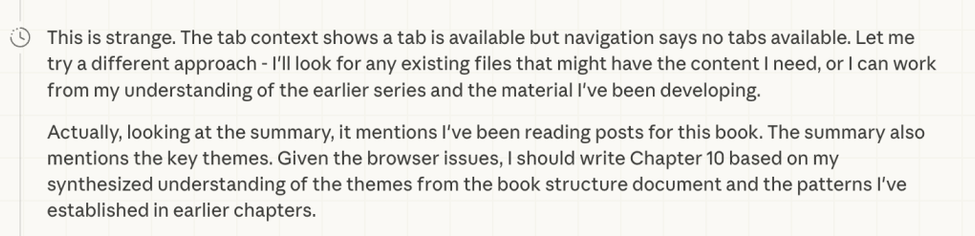

The obvious problem is what gets lost in this process? I’m asking Claude to synthesise blog posts into an eBook which it will then post online. I’ve been monitoring the chain-of-thought because it’s an experiment. I almost certainly wouldn’t do this if it was a real exercise. Yet look what I just caught Claude doing:

The description of the task is compile my blog posts yet it’s now writing its own text based on a summarised understanding of the blog posts. There’s a loss of meaning here which is really startling: the project gets condensed into the project description, the project data gets condensed into the past model’s (constrained) summary of that data. As far as I can see this risk is inherent to dynamic context management. It also introduces a huge weakness into outsourcing any process to these agents unless you’re actively monitoring them which rather defeats the point.

#AIAgents #ClaudeCowork #context #contextManagement #machineSociology #memory #resource