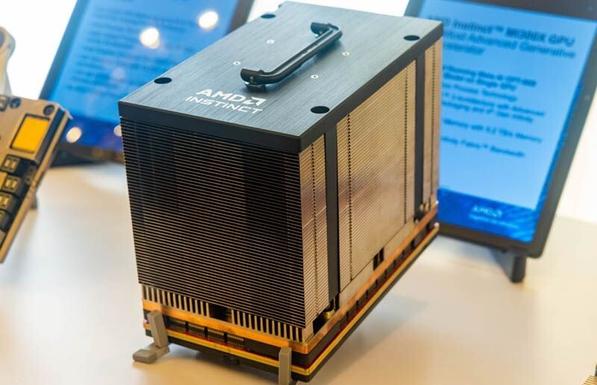

A 1000X performance increase in four years is a major achievement, but keep in mind that the Instinct MI300X and the Instinct MI500 are separated by a three-generation gap in instruction set architecture (ISA): #CDNA3 => #CDNA6.
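To put the headline number in perspective, here is a quick back-of-the-envelope sketch of what 1000X implies per year and per generation. It assumes the gain compounds evenly across each step, which is a simplification of mine and not something AMD has stated; the helper name `implied_factor` is made up for illustration.

```python
def implied_factor(total_gain: float, steps: int) -> float:
    """Per-step multiplier that compounds to total_gain after `steps` equal steps."""
    return total_gain ** (1.0 / steps)


TOTAL_GAIN = 1000.0   # headline claim quoted in the post
YEARS = 4             # "four years", as stated in the post
GENERATION_STEPS = 3  # CDNA3 -> CDNA6, three generational steps

# Assumption: gains compound evenly, which real roadmaps rarely do.
per_year = implied_factor(TOTAL_GAIN, YEARS)
per_generation = implied_factor(TOTAL_GAIN, GENERATION_STEPS)

print(f"Implied gain per year: {per_year:.2f}x")
print(f"Implied gain per generation: {per_generation:.2f}x")
```

Under that even-compounding assumption, the claim works out to roughly 5.6X per year, or about 10X per architecture generation.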

The next-generation #CDNA6 architecture is on track for 2027.

https://www.tomshardware.com/tech-industry/artificial-intelligence/amd-unwraps-instinct-mi500-boasting-1-000x-more-performance-versus-mi300x-setting-the-stage-for-the-era-of-yottaflops-data-centers

The 1000X figure is probably measured at #FP4 precision.