These brain implants speak your mind — even when you don't want to.

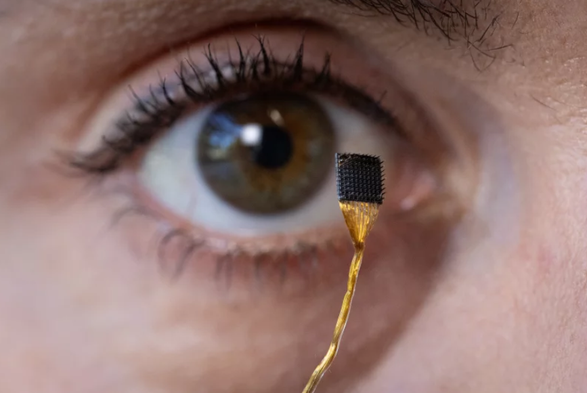

Surgically implanted devices that allow paralyzed people to speak can also eavesdrop on their inner monologue.

That's the conclusion of a study of brain-computer interfaces (BCIs) in the journal Cell.

https://www.npr.org/sections/shots-health-news/2025/08/20/nx-s1-5506334/brain-computer-implant-speak-inner-speech-mind?utm_source=globalmuseum

#globalmuseum #brain #BCIs