CareFabric: A Blueprint for Healthcare Interoperability

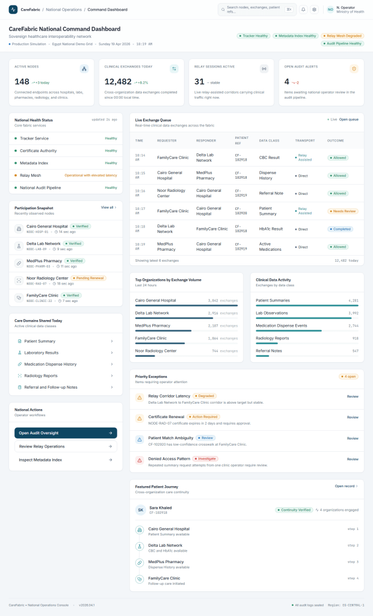

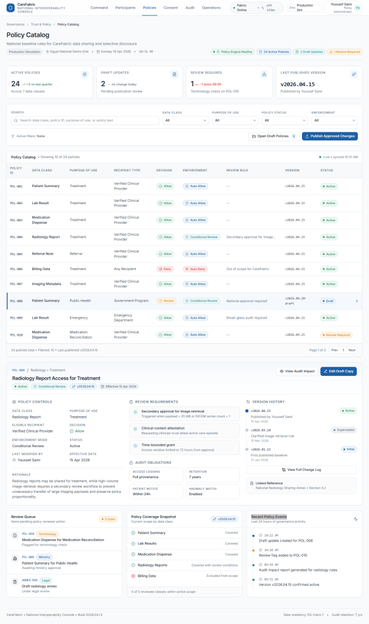

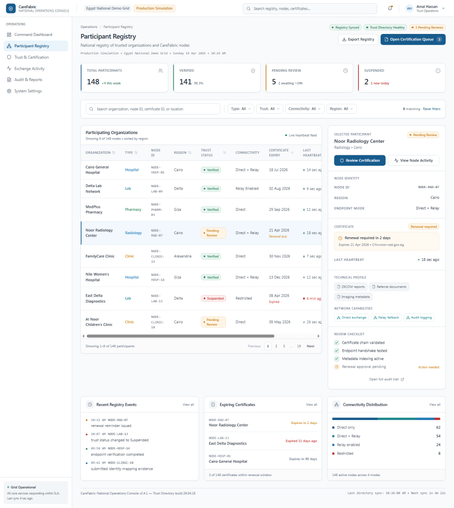

The CareFabric model proposes a new approach to healthcare interoperability that prioritizes clinical coordination over simply integrating existing systems. It aims to reduce the fragmentation of healthcare information by creating a federated clinical exchange in which government acts as a trust tracker rather than a central data holder. The model preserves organizational autonomy while enabling selective data exchange under robust governance. Its architecture pairs a national control plane with a federated data plane, designed to support secure, real-time communication between participants. CareFabric also stresses patient identity, metadata management, and AI governance as ways to improve operational effectiveness, while keeping financial processes out of scope.
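To make the control-plane/data-plane split more concrete, here is a minimal Python sketch under stated assumptions. It assumes the control plane holds only metadata: a registry of trusted organizations and a record locator recording which organizations hold data for a given patient identifier. Clinical records stay with each organization, which decides locally which record types it will share. Patient identifiers are assumed to be already resolved. The class and function names (`ControlPlane`, `Organization`, `fetch_patient_records`) and the record types are hypothetical illustrations, not part of the CareFabric blueprint.

```python
from dataclasses import dataclass, field


@dataclass
class ControlPlane:
    """Hypothetical national control plane: tracks trusted participants and
    which organizations hold records for a patient. Stores metadata only,
    never clinical payloads."""
    trusted_orgs: set[str] = field(default_factory=set)
    # patient_id -> ids of organizations that hold records (metadata only)
    record_locator: dict[str, set[str]] = field(default_factory=dict)

    def register_org(self, org_id: str) -> None:
        self.trusted_orgs.add(org_id)

    def is_trusted(self, org_id: str) -> bool:
        return org_id in self.trusted_orgs

    def publish_location(self, org_id: str, patient_id: str) -> None:
        if not self.is_trusted(org_id):
            raise PermissionError(f"{org_id} is not a trusted participant")
        self.record_locator.setdefault(patient_id, set()).add(org_id)

    def locate(self, patient_id: str) -> set[str]:
        # Return a copy so callers cannot mutate the locator directly.
        return set(self.record_locator.get(patient_id, set()))


@dataclass
class Organization:
    """Hypothetical data-plane node: keeps its own records and decides
    locally which record types it is willing to share."""
    org_id: str
    control: ControlPlane
    shareable_types: set[str]
    records: dict[str, list[dict]] = field(default_factory=dict)

    def store(self, patient_id: str, record: dict) -> None:
        self.records.setdefault(patient_id, []).append(record)
        # Only the existence of a record is published, not its contents.
        self.control.publish_location(self.org_id, patient_id)

    def respond(self, requester_id: str, patient_id: str) -> list[dict]:
        # Trust is checked against the control plane, but the data itself
        # flows directly between organizations.
        if not self.control.is_trusted(requester_id):
            raise PermissionError(f"{requester_id} is not trusted")
        return [r for r in self.records.get(patient_id, [])
                if r["type"] in self.shareable_types]


def fetch_patient_records(requester: Organization, patient_id: str,
                          network: dict[str, Organization]) -> list[dict]:
    """Ask the control plane where records live, then query each holder."""
    results: list[dict] = []
    for org_id in sorted(requester.control.locate(patient_id)):
        if org_id != requester.org_id:
            results.extend(network[org_id].respond(requester.org_id, patient_id))
    return results


if __name__ == "__main__":
    cp = ControlPlane()
    cp.register_org("clinic-a")
    cp.register_org("hospital-b")
    clinic = Organization("clinic-a", cp, {"allergy"})
    hospital = Organization("hospital-b", cp, {"allergy", "discharge_summary"})
    network = {o.org_id: o for o in (clinic, hospital)}

    hospital.store("patient-123", {"type": "allergy", "value": "penicillin"})
    hospital.store("patient-123", {"type": "mental_health_note", "value": "..."})
    # Only the allergy record is shared; the note type is not shareable.
    print(fetch_patient_records(clinic, "patient-123", network))
```

Keeping the locator metadata-only lets the central component answer "who holds data for this patient?" without ever storing clinical payloads, which is one possible reading of government acting as a trust tracker rather than a data holder. Selective exchange is modeled here as a simple per-organization allow-list of record types; a real deployment would need far richer consent and governance rules.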

https://roofman.me/2026/04/18/carefabric-a-blueprint-for-healthcare-interoperability/