Site: https://eriksonarias.dev

Erikson Arias

@kimcrawley Agree with this. GenAI is incapable of critical evaluation, only statistical significance. It can't tell if a translation preserves meaning; it has no concept of meaning. It can't tell if a statement is true; it has no concept of truth.

GenAI can do one thing: output. Wikipedia faces many challenges and "not enough text" has never been one of them. The vision of generating text, and therefore inaccuracies, at scale for editors to fix is utterly demented.

Will Hollingsworth is his name

Give him a listen

Talking against a #dataCenter proposal in Ravenna, #Ohio

Speaking eloquently on how he trained the #AI that replaced him, bad #regulations, lying #techBros...

Witty, passionate, persuasive

Will is a #citizen in the finest sense of the word

"I am not a cynic when it comes to #technology. I am a believer in #community. I believe that a drop of clean #water for a Ravenna child is worth more than a billion #AI generated images"

👏 👏 👏

"AI Slop (n.) The endless tide of mediocre, derivative content produced by models trained on other models’ mediocre, derivative content. The cultural equivalent of feeding a landfill into a blender and calling the result “progress.”"

"The Devil's Dictionary of Vibe Coding" goes hard https://gist.github.com/artfwo/63eaaffdb47cbba342b04f989bd9463b

"Then imagine my — and many others' — utter confusion at the prospect of signing a massive B2B contract with an AI vendor and then mandating its usage, complete with corporate tracking, before the technology has had time to proof. Since when was it principled business to pay for something and then set out to prove its value?"

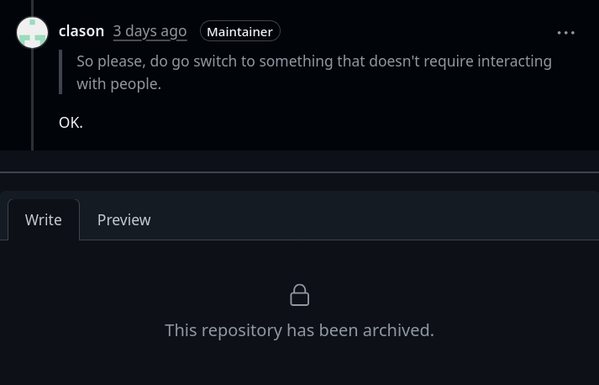

Mental fatigue when maintaining FLOSS projects, illustrated.

https://github.com/nvim-treesitter/nvim-treesitter/discussions/8627

Blogged: On the acceptance of GenAI

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/

A large international study coordinated by the #EBU and led by the #BBC found that AI assistants misrepresent news content 45% of the time across different languages and platforms, with #Gemini performing the worst.

[…] Key findings:

• 45% of all AI answers had at least one significant issue.

• 31% of responses showed serious sourcing problems – missing, misleading, or incorrect attributions.

• 20% contained major accuracy issues, including hallucinated details and outdated information.

• Gemini performed worst with significant issues in 76% of responses, more than double the other assistants, largely due to its poor sourcing performance.

• Comparison between the BBC’s results earlier this year and this study shows some improvements but still high levels of errors.

https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content

AI training is updated on Nigerians and Kenyans. Here's an essay from a Kenyan about AI stealing the style he was taught. The essay's full of "AI tells" - but it does not at all read like AI, because it's so clearly a human writing, with something to say.

https://marcusolang.substack.com/p/im-kenyan-i-dont-write-like-chatgpt

I'm a big fan of this explanation/rant from Andrew Murphy.

Taken as a whole, there are many bottlenecks in a corporate software development process. The "load-bearing" calendar is a great example!

Speeding up code creation just increases pressure on the bottleneck, which decreases throughput.

If you thought the speed of writing code was your problem - you have bigger problems | Debugging Leadership

AI coding tools are optimising the wrong thing and nobody wants to hear it. Writing code was already fast. The bottleneck is everything else: unclear requirements, review queues, terrified deploy cultures, and an org chart that needs six meetings to decide what colour the button should be.