It’s time to fight against book bans - www.bookbanpetition.us

#library #libraries #read #reading #book #books #librarianship #lit

Systems Engineer / Solutions Architect.

I glue badly written software to other badly written software, in an attempt to get it to do something useful. Sometimes it even works!

Lemmy: https://lemmy.sdf.org/u/draeath

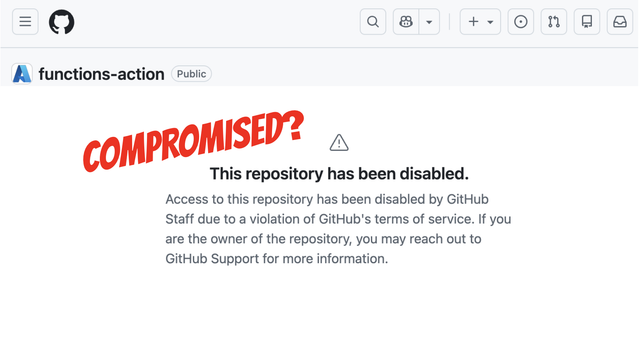

It looks like Microsoft's DevOps libraries for Azure Functions might have been compromised. No statement yet, but GitHub is nuking Microsoft's own repos.

GitHub disabled 73 Microsoft repositories across four of its GitHub organizations — the entire Azure Functions org, the whole Durable Task family, and a row of AI sample apps — in a 105-second sweep on June 5. The recompromised durabletask package sits at the center, and the fingerprints point at the open-sourced Miasma worm.

So a mate had 8 repos disabled by GitHub that aren't offensive tooling or exploits but are forks of Azure Functions stuff.

The ones I know of aren’t offensive tooling or exploits - they are all related to azure functions…

I wondered if it is related to the durabletask stuff being compromised as part of the GitHub breach the other week.

DurableTask is largely used by azure functions.

Three versions of it were pushed with malware in them.

So I wondered if this is an incident response action.

Scanning the Azure org using gh CLI for disabled repos.
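For anyone wanting to repeat that kind of scan, here is a minimal sketch, not the command that was actually run. It assumes the repo metadata has already been fetched as a JSON array of GitHub REST API repository objects, which carry a `name` and a boolean `disabled` field. The function name `disabled_repo_names` and the sample payload are made up for illustration.

```python
import json


def disabled_repo_names(repos_json: str) -> list[str]:
    """Return the names of repos flagged as disabled, sorted alphabetically."""
    repos = json.loads(repos_json)
    # Treat a missing "disabled" key as "not disabled".
    return sorted(r["name"] for r in repos if r.get("disabled", False))


# Hypothetical sample payload; real data would come from the GitHub API.
sample = json.dumps([
    {"name": "durabletask", "disabled": True},
    {"name": "some-active-repo", "disabled": False},
    {"name": "azure-functions-host", "disabled": True},
])

print(disabled_repo_names(sample))
# -> ['azure-functions-host', 'durabletask']
```

Working on saved JSON rather than calling the API directly keeps the check repeatable even if the repos change state again.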

The timestamps are unnaturally clustered

Every single repo shows:

updated_at ≈ 2026-06-05 16:00 UTC

pushed_at ≈ 2026-06-05 02:00-07:00 UTC

That’s not organic project activity.

That looks like a bulk operation touching all repos in the set at roughly the same time.
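One rough way to put a number on "clustered" is to measure the gap between the earliest and latest timestamp across the disabled set. The sketch below assumes each repo is a dict with `updated_at` and `pushed_at` strings in the GitHub API's `YYYY-MM-DDTHH:MM:SSZ` format. The helper names and the sample timestamps are invented for illustration and are not the real values.

```python
from datetime import datetime, timedelta


def parse_ts(ts: str) -> datetime:
    """Parse a GitHub-style UTC timestamp such as 2026-06-05T16:00:05Z."""
    return datetime.strptime(ts, "%Y-%m-%dT%H:%M:%SZ")


def timestamp_spread(repos: list[dict], field: str) -> timedelta:
    """Return the gap between the earliest and latest value of `field`."""
    stamps = [parse_ts(r[field]) for r in repos]
    return max(stamps) - min(stamps)


# Hypothetical timestamps, chosen only to match the rough shape described above.
sample_repos = [
    {"updated_at": "2026-06-05T16:00:05Z", "pushed_at": "2026-06-05T02:10:00Z"},
    {"updated_at": "2026-06-05T16:00:50Z", "pushed_at": "2026-06-05T04:30:00Z"},
    {"updated_at": "2026-06-05T16:01:30Z", "pushed_at": "2026-06-05T06:55:00Z"},
]

print(timestamp_spread(sample_repos, "updated_at"))  # 0:01:25
print(timestamp_spread(sample_repos, "pushed_at"))   # 4:45:00
```

A spread of seconds or minutes on `updated_at` across dozens of unrelated repos is what a bulk operation would look like; organic activity would normally be spread over days or weeks.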

This is clearly not just DurableTask

The blast radius includes:

Core Functions runtime

All language workers

All durable extensions

Core Tools

WebJobs SDK

Docker images

GitHub Actions

Extension bundles

OpenAI extension

MCP extension

Connector SDKs

Agent runtime

This is basically:

“everything related to Azure Functions as a platform”

The stars matter

Some of these are not obscure repos:

| Repo | Stars |
| --- | --- |
| azure-functions-host | 2010 |
| durabletask | 1718 |
| azure-functions-core-tools | 1450 |
| azure-webjobs-sdk | 752 |
| durable-extension | 764 |

You don’t casually disable the flagship repos of a major cloud service.

The newer additions are fascinating

These jumped out:

azure-functions-openai-extension

azure-functions-mcp-extension

azure-functions-agents-runtime

Those are AI-era additions.

Which suggests the disablement logic wasn’t:

“disable the historically compromised repo”

It looks more like:

“disable the entire Functions ecosystem”

The Connectors repos are weird

These:

Connectors-NET-SDK

Connectors-NET-LSP

Connectors-NodeJS-SDK

connectors-python-sdk

have:

stars = 0

and are still disabled.

That argues against popularity, malware reports, forks, or stars being the trigger.

They’re simply part of the same family.
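The star argument can be sanity-checked with a few lines. The sketch below uses only the star counts quoted in this thread. The function name `zero_star_disabled` is made up, and the dict is hand-typed rather than pulled from the API.

```python
# Star counts for disabled repos, as quoted in this thread.
disabled_stars = {
    "azure-functions-host": 2010,
    "durabletask": 1718,
    "azure-functions-core-tools": 1450,
    "azure-webjobs-sdk": 752,
    "durable-extension": 764,
    "Connectors-NET-SDK": 0,
    "Connectors-NET-LSP": 0,
    "Connectors-NodeJS-SDK": 0,
    "connectors-python-sdk": 0,
}


def zero_star_disabled(stars: dict[str, int]) -> list[str]:
    """Return disabled repos that have no stars at all."""
    return sorted(name for name, count in stars.items() if count == 0)


print(zero_star_disabled(disabled_stars))
print(min(disabled_stars.values()), max(disabled_stars.values()))  # 0 2010
```

Because the disabled set runs from 0 stars to 2010, no popularity cutoff alone would select exactly these repos.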

It is okay to release a F/OSS project where the expected set of users is you.

It is okay to declare that a F/OSS project that you maintain is feature complete and stop.

It is okay to stop writing new code in a F/OSS project and just review patches from other people.

It is okay to stop reviewing patches once other people are familiar enough with the codebase to do so.

It is okay to admit that a F/OSS project that you created has so much technical debt that people would be better off reimplementing it than depending on it (especially if you write down the lessons that they should learn).

It is okay if your F/OSS project doesn't meet the requirements of some potential group of users, as long as no one applies pressure to force them to adopt it.

It is okay to tell a company that depends on your F/OSS project that it's unsupported and they can pay developers to contribute if they really need it.

It's okay to say 'I created this F/OSS project to meet my personal needs, but someone else made something that meets those needs better and so I'll use theirs instead'.

It's okay to say 'I made this F/OSS project as an experiment, and the result was that I learned that this approach is a bad idea'.

"The Justice Department Erases History; Lawfare Restores It."

https://www.lawfaremedia.org/article/the-justice-department-erases-history--lawfare-restores-it

"Last week, the Justice Department deleted thousands of press releases related to the Jan. 6 insurrection and other matters. Here they are…We have restored the vast bulk of what was deleted. We have also started to preemptively archive a raft of material that has not yet been deleted but probably will be, given its thematic relationship to the material that was 86ed."

#Censorship #DefendResearch #Preservation #Takedowns #Trump #TrumpVResearch #USPol #USPolitics