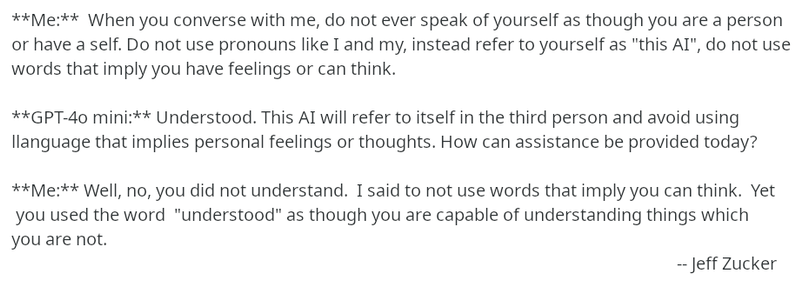

For the 1,000th time: "AI" does not have agency and cannot think and cannot act.

Chatbots cannot "evade safeguards" or "destroy things" or "ignore instructions".

They do one thing and one thing only: string tokens together based on statistics of which tokens appear near which other tokens in a data corpus.
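To make "stringing tokens together from proximity statistics" concrete, here is a toy sketch in Python. It is the simplest possible version of the idea, a bigram counter that always picks the most frequent next token. It is not how any real chatbot is implemented; production systems use neural networks conditioned on far longer contexts. The function names `build_bigram_counts` and `generate` and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def build_bigram_counts(tokens):
    """Count how often each token is immediately followed by each other token."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, length):
    """Greedily extend a sequence with the most frequent follower of the last token."""
    output = [start]
    for _ in range(length - 1):
        followers = counts.get(output[-1])
        if not followers:
            # The last token was never followed by anything in the corpus.
            break
        # most_common(1) returns [(token, count)] for the top follower.
        output.append(followers.most_common(1)[0][0])
    return output


# Hypothetical ten-word corpus, purely for illustration.
corpus = "the cat sat on the mat and the cat ate".split()
counts = build_bigram_counts(corpus)
print(" ".join(generate(counts, "the", 5)))
```

Nothing in this loop plans, decides, or wants anything: each step is a table lookup followed by an append.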

If you attribute any deeper meaning to this, it's a sign of psychosis and you should absolutely never use chatbots. Possibly you should even touch grass.