Hey everyone 👋

I’m diving deeper into running AI models locally—because, let’s be real, the cloud is just someone else’s computer, and I’d rather have full control over my setup. Renting server space is cheap and easy, but it doesn’t give me the hands-on freedom I’m craving.

So, I’m thinking about building my own AI server/workstation! I’ve been eyeing some used ThinkStations (like the P620) or even a rack-mount server, depending on cost and value. But I’d love your advice!

My Goal:

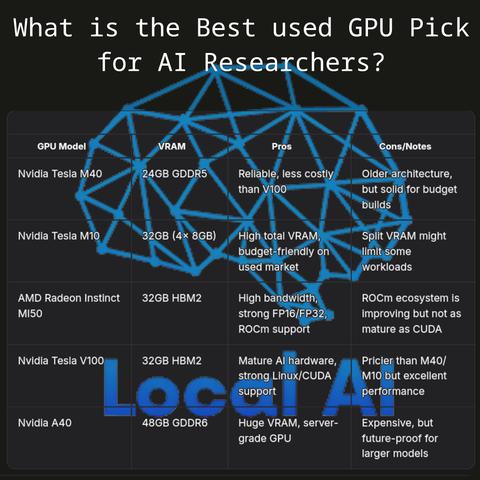

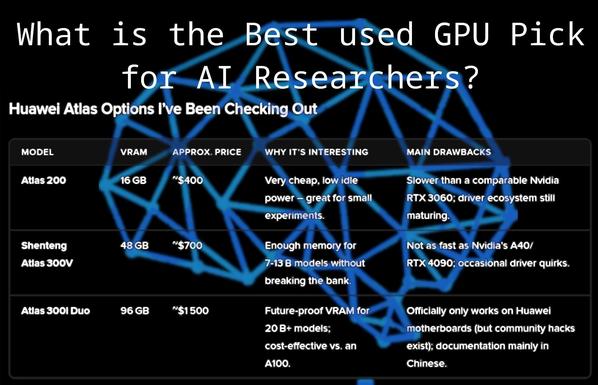

Run larger LLMs locally on a budget-friendly but powerful setup. Since I don’t need gaming features (ray tracing, DLSS, etc.), I’m leaning toward used server GPUs that offer great performance for AI workloads.
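For anyone comparing cards by VRAM, here is a rough sketch of the sizing math. It only counts the model weights (parameters × bits per weight) and then adds a flat margin for KV cache and runtime buffers. The function names and the 20% `overhead_fraction` are illustrative assumptions, not measured values; real overhead depends on context length and the inference software.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_vram(
    params_billion: float,
    bits_per_weight: float,
    vram_gib: float,
    overhead_fraction: float = 0.2,  # assumed placeholder margin, not a measurement
) -> bool:
    """Rough check: do the weights plus an assumed margin fit in the given VRAM?"""
    needed = weight_memory_gib(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gib


if __name__ == "__main__":
    # Example model sizes and quantization levels, chosen only for illustration.
    for params, bits in [(7, 16), (13, 8), (70, 4)]:
        gib = weight_memory_gib(params, bits)
        print(f"{params}B params at {bits}-bit: about {gib:.1f} GiB for weights")
```

By this estimate, weight memory scales linearly with both parameter count and bits per weight, which is why total VRAM tends to matter more than raw compute when the goal is fitting larger models.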

Questions for the Community:

1. Does anyone have experience with these GPUs? Which one would you recommend for running larger LLMs locally?

2. Are there other budget-friendly server GPUs I might have missed that are great for AI workloads?

3. Any tips for building a cost-effective AI workstation? (Cooling, power supply, compatibility, etc.)

4. What’s your go-to setup for local AI inference? I’d love to hear about your experiences!

I’m all about balancing cost and performance, so any insights or recommendations are hugely appreciated.

Thanks in advance! 🙌

@[email protected] #AIServer #LocalAI #BudgetBuild #LLM #GPUAdvice #Homelab #AIHardware #DIYAI #ServerGPU #ThinkStation #UsedTech #AICommunity #OpenSourceAI #SelfHostedAI #TechAdvice #AIWorkstation #MachineLearning #AIResearch #FediverseAI #LinuxAI #AIBuild #DeepLearning #ServerBuild #BudgetAI #AIEdgeComputing #Questions #CommunityQuestions #HomeServer #Ailab #llmlab