@jakintosh @cancel that is fascinating. The exact same thing happened to me too, just before Christmas. And it took me some time to figure out that I was fighting addiction. 😬

I'm pretty much the only one at my company who's clearly against generative A.I. My colleagues have been using it for months/years and my boss is always looking for ways to incorporate it into daily work to make us more efficient developers. 😩

His latest big idea: a Hackathon before Christmas to see how far a small team of skilled developers can get with genAI on a clearly defined side-project, loosely related to our regular products. I had never written a prompt before, but I agreed to at least try it out for two weeks. I had always planned to put it aside after that, because I cannot use genAI in good conscience, knowing enough about all the problems it causes.

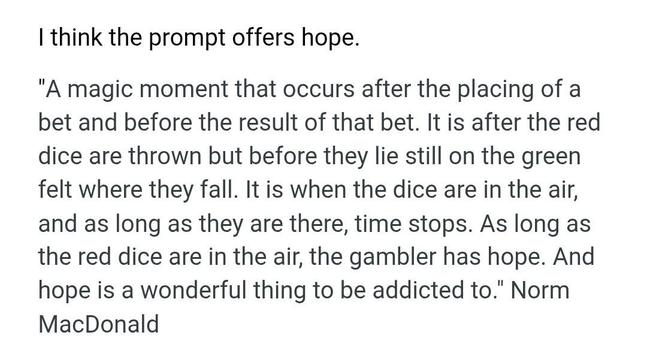

Nevertheless, just as you say, I wrote this one prompt on a Monday afternoon... and it spit out 90% of the code I ultimately handed in for the Hackathon. And the code was surprisingly well-written and well-tested, something that I can imagine myself actively maintaining for the foreseeable future. The documentation was much too verbose, but you can always throw that away. 😅

And for a few days after that, I wanted to throw everything at it, just to see what genAI would do with it. I had set clear boundaries for myself, so I only used it for the Hackathon and never for "real" work. But I had this strange, unexplainable urge to replace any internet search, any look into the docs, Stack Overflow, etc. with a prompt.

But I want to believe that, even without clear boundaries, I'd have stopped myself once I started using the LLM for anything other than Python code. Using it for Ansible was a very sobering experience that showed off all its worst traits: hallucinated dependencies, broken code, and slightly-off code that breaks things in subtle ways that take forever to debug...

I'm so happy to see other people talk about getting addicted to genAI. I did notice that effect, and my gut was trying to tell me what it was, but it's just very affirming to hear it from other people too. 🙂