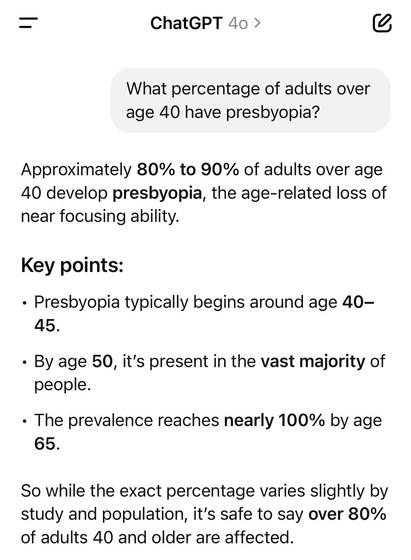

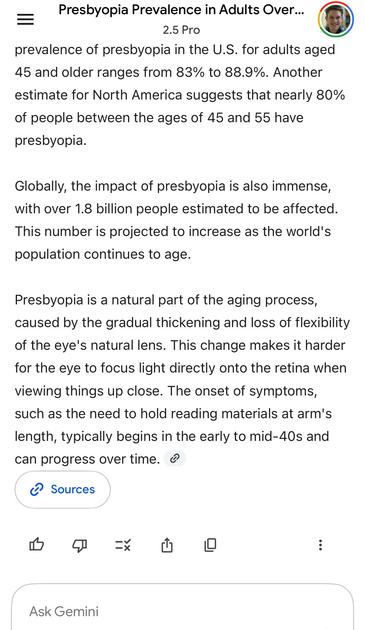

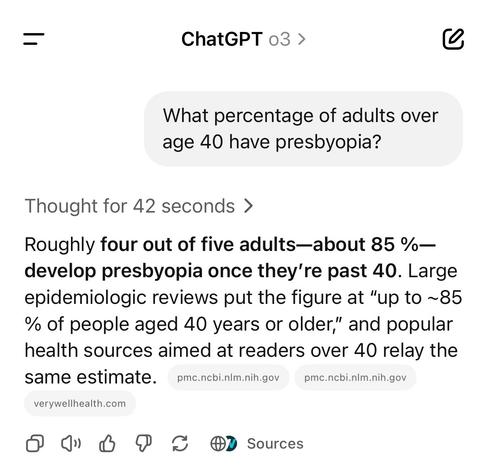

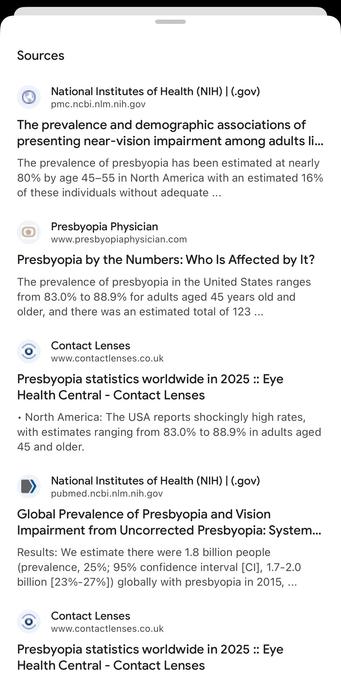

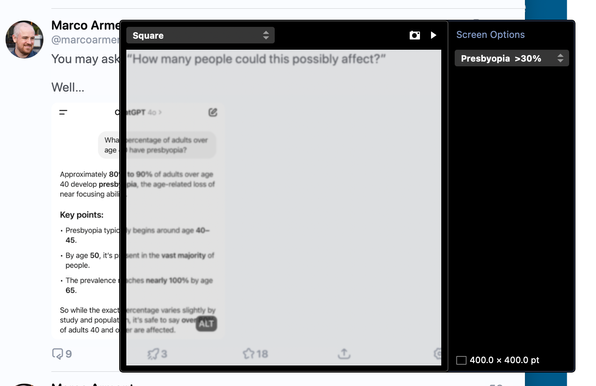

Liquid Glass’ blurred content everywhere is especially cruel to those of us who use reading glasses or progressives.

When we see blurry text on our phones, our reflex is to change our viewing angle or distance to bring it into focus. But, of course, the blur isn't coming from our eyes, so the text never sharpens, and the effort just causes eyestrain.

Text on my phone should never be blurry.