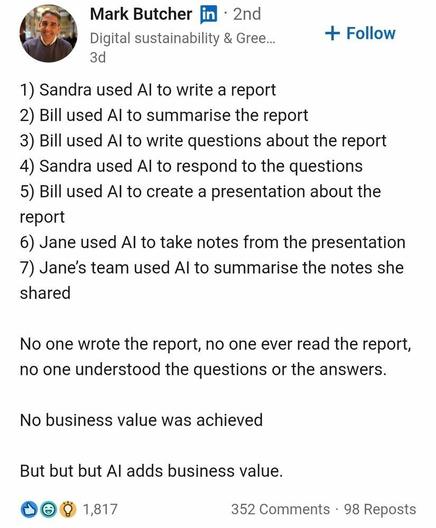

@Natasha_Jay The main AI application is sycophancy.

Since about 80% of jobs in North America involve countless hours of kissing up to the boss, it is clearly possible to achieve cost savings by locking up thin-skinned neurotics in a room with a machine that strokes their egos non-stop.

Why else do you think they find "in person" work so essential?

@Natasha_Jay AI is also good at racism. This is another way that ML will take away burdensome repetitive jobs from humans. Instead of people being annoyed at having to continuously repeat the same racist (sexist, homophobic, ...) actions daily at work, the AI assistant can do it for them. While kissing up to the boss!

https://www.pcmag.com/news/tiktok-flooded-with-racist-ai-videos-likely-made-by-google-veo-3

The issue will come when the bosses require all the LLM agents to work in the office.

Hint: none of those folks had ever generated any business value.

@Natasha_Jay AI IS NOT THE WAY!

Do👏not👏listen👏to👏techbros👏

So hilariously funny and true that it’s sad.

Absolutely

I vaguely recall a joke along those lines.

Something about two guys who are shipwrecked on a remote island, with nothing but a hat. To pass the time, they trade the hat back and forth. When they are finally rescued, both are millionaires...

IIRC H. Beam Piper told a variation of that joke in his novel Space Viking.

Yes and yes. It was basically a racist joke about how some "people" (often Asian) could sell each other rocks in the desert and make a profit.

Yes, that was my point. Using AI to write, read, and interpret business reports is the new becoming billionaires by trading rocks.

Make Fun Of Them

Have you ever heard Sam Altman speak? I’m serious, have you ever heard this man say words from his mouth? Here is but one of the trenchant insights from Sam Altman in his agonizing 37-minute-long podcast conversation with his brother Jack Altman from last week: “I think there will

@Jude_theone479001 @mnf @Natasha_Jay "... it’s time to start mocking these people and tearing down their legends as geniuses of industry. They are not better than us, nor are they responsible for anything that their companies build other than the share price (which is a meaningless figure) and the accumulation of power and resources.

These men are neither smart nor intellectually superior, and it’s time to start treating them as such."

Spot on.

One point is missing. The important one. No one will feel or be made responsible for whatever will happen based on this workflow.

I don't know what it was like in the rest of the world, but when the UK had its Covid lockdown and furlough scheme, so that only essential workers could go out to work, we found out who the really important workers were. None of them used, or will use, AI as an essential part of their jobs.

The real stuff that keeps society running is very different from what the Techbrosphere imagines it is.

The last couple sentences are doing a lot of heavy lifting in that post.

Let me run that past the AI to see if it checks out...

@Natasha_Jay This is why "AI" will replace middle-management…

Sandra Jane and Bill used AI to write their resumes to submit to an AI that wrote the job description, and an AI that filtered through all the AI replies, to find the candidate best suited to hire, according to AI.

It’s AI hiring AI. No one knows what’s going on.

@Natasha_Jay But did the report ever actually need to be written? 'Cause if it didn't, then AI is the symptom, not the root, of the problem.

Because nobody was reading, understanding, evaluating, or acting on the report to begin with. They just had to try harder to pretend to.

No worries: AI generated an earlier report pointing out the (non?) problem...perhaps based upon an even earlier AI generated accounting sheet.

Oh heck; that's not a new problem!

In the late 1980s, our team was pressured to implement some "vitally important functionality" report that some person was spending FULL TIME producing. We were being forced to, so we went to every person who received the report, to determine their real business needs.

NOT A SINGLE PERSON EVER USED THE REPORT!

Not at all. Not once. Not ever.

It was 100% filed, ignored, and then later discarded.

100% USELESS WASTE.

@Natasha_Jay @JeffGrigg Doesn't surprise me. A few years ago, I read a book titled Bullshit Jobs by an anthropologist who found, during a freak survey, that anywhere from thirty to forty percent of all people held jobs they honestly believed contributed nothing to society. A lot of these are professional-managerial positions that produce exactly those kinds of reports, and exist mostly to make upper management feel important.

I've had family members tell me they felt like they spent more time filling out paperwork about their work than actually working, that they felt useless when promoted to management because the team already knew what they were doing, and a friend of mine who went on to read the book claimed it explained so much of what went on at his tech workplace.

The book, "Bullshit Jobs: A Theory"

by David Graeber

https://www.amazon.com/Bullshit-Jobs-Theory-David-Graeber-ebook/dp/B075RWG7YM/

@Natasha_Jay @JeffGrigg That's the one!

Sounds like you might've read it already.

Sorry; I have not read the "Bullshit Jobs" book. Seems too depressing. 😢

@Natasha_Jay @JeffGrigg @billseitz I'm not sure I even see a response in those highlights. He basically handwaves away Graeber's entire argument. In no way does he demonstrate how the sorts of jobs Graeber describes are not bullshit, or how public/private partnerships end up creating more positions and bureaucracy instead of less (a major point of evidence: if it's more efficient, why all the red tape?), nor does he provide a convincing alternative explanation for why so many people (a metric ton of people responded to his survey) think their jobs are useless, or explain how rising productivity has actually compelled us to work just as much, if not more, than we used to but for less.

Meanwhile, here's a practical example in Graeber's support: when I was doing political activism for single-payer healthcare a few years back, an opponent of the bill my org supported wrote an article complaining that a Medicare-for-All style program would be *too* efficient. That would eliminate a ton of bureaucratic positions, obviously, but also reduce demand for imaging equipment and such that would be rendered unnecessary by reduced specialist demand. We had to maintain the current system, according to the writer, so that those people could keep their jobs.

If people would be just as healthy, if not healthier, under a single-payer system, but the economy would shed jobs due to its efficiency, those jobs are bullshit jobs. The extra time and energy spent on selling medical imaging equipment would be in bullshit jobs because that level of demand can only be maintained by keeping people sicker than they ought to be. The workers making those products, as well as delivering that extra specialist care, would serve as "duct-tapers," according to Graeber's typology. Meanwhile, if increasing access reduces the number of bureaucrats, then those positions were, in fact, pure bullshit jobs, because obviously their task wasn't to provide healthcare, but to *prevent* people from obtaining it. How can a job be more ridiculous than that?

If the author wanted to mount a serious critique, he would've done well to address the question of whether or not Graeber's estimates, in the realm of 30-40%, were too aggressive. But doing so would require engaging with his argument seriously, and bringing the empirical rigor they claim Graeber lacked. Sadly, the author was neither committed enough to science to compel nor enough to comedy to amuse.

It would be interesting to see if it did exactly the same as the old chestnut “send three and fourpence we’re going to a dance” which had started out as

“send reinforcements we are going to advance”

@Natasha_Jay I strongly suspect that the AI bubble won't burst until a large company (Microsoft/Apple/Amazon level) leans a bit too heavily into it and we see a late-80s IBM-style collapse because of it.

I also think that will happen sooner rather than later

We can only hope. 🙄

It added business value all right, but for those who sold the AI.
