@eosfpodcast I see we're now in the "of *course* you can't expect this skill from an LLM, that's not what they're good at" stage of the discourse. This sort of remark is also popping up a lot in comments to people posting links to that article about how an old Atari console chess game from almost 50 years ago stomped ChatGPT recently.

This observation is of course totally correct. LLMs are genuinely not good for this sort of thing. They can't play chess very well (certainly not as well as just about any off-the-shelf dedicated chess program from any time in the last several decades), and they can't actually reason about anything, instead just printing text that *looks* like what a reasoning person might do or think. And, of course, people critical of "generative AI" have been pointing this out for years.

Pointing this out is neither trite nor useless.

When OpenAI literally advertises "Learn something new. Dive into a hobby. Answer complex questions." as a sensible use case for ChatGPT (https://openai.com/chatgpt/overview/), people will expect behavior like this from an LLM.

When Google uses its LLM, Gemini, not just as a search adjunct (where it might be at least *somewhat* useful, since it could plausibly associate your request with actual web pages' text) but as a tool for creating factual summaries of information on the web, people will expect behavior like this from an LLM.

When the entire first *year* of ChatGPT hype pushed claims that the model was showing "sparks of artificial general intelligence" (https://www.microsoft.com/en-us/research/publication/sparks-of-artificial-general-intelligence-early-experiments-with-gpt-4/), people will expect behavior like this from an LLM.

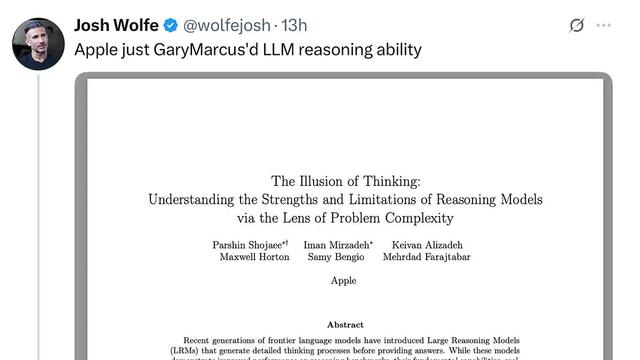

When every vendor out there (Anthropic, OpenAI, Microsoft, you name 'em) aggressively markets their models as "reasoning" models, people will expect behavior like this from an LLM.

There are lots of people who have no clue about the limitations of this flavor of "AI", precisely because it has been -- and still is -- hyped so aggressively in counterproductive and misleading ways.