Meanwhile, Apple responds to the predictable result of running notifications through a blender with #LLM BS: "Apple Intelligence features are in beta and we are continuously making improvements with the help of user feedback… A software update in the coming weeks will further clarify when the text being displayed is summarization provided by Apple Intelligence"

This whole thread of #Google #AIIsGoingGreat with fractions is a good illustration of why I'm skeptical of the "sure, it has bugs, but they're fixing them, just like any other software" takes. IMO you can't band-aid a system with no concept of what a fraction is to get this right in the general case, and even if you somehow recognize questions about fractions, there's an unlimited number of other cases where autocomplete is similarly inappropriate

https://mastodon.social/@lauren@mastodon.laurenweinstein.org/113771300586021845

This one with 25.4 == 1 in particular is a great example of how probabilistic completions go off the rails

https://mastodon.social/@[email protected]/113772004311087469

802.11*sigh*

https://openwrt.org/docs/guide-user/network/wifi/mesh/80211s

(see edit history for a good time)

One objection #AI pessimists hear a lot is that big tech execs wouldn't be dumping billions into it if it were as bad as people say, because, you know, they're smart guys, right? Anyway…

LOL, bots mindlessly boosting every f-ing post in this thread with the #AI tag is 👨🏻🍳🤌

(also, what's the point of a bot that just boosts posts with a hashtag? Do they not know people can follow hashtags?)

Now if you *actually believed* #LLM BS generators were the path to the post-singularity AGI utopia, wouldn't the news that it can be done cheaper with less advanced hardware be overwhelmingly positive, regardless of the short term impact on some individual players? Shouldn't all the #AI bros be celebrating?

OTOH, if you were running an elaborate pump and dump involving some individual players, it might be kinda bad news

Translation: Sales of the latest high-end, resource intensive models were so bad, Microsoft decided they might as well just eat the cost in hopes of driving adoption

https://www.theverge.com/news/603149/microsoft-openai-o1-model-copilot-think-deeper-free

Who decided to call it "Stargate" when "Monorail" was right there? https://www.theverge.com/openai/603952/sam-altman-stargate-ai-data-center-plan-hype-funding

#AIIsGoingGreat 'He said, for example, that he would need help creating “AI coding agents” that would write software across the entire federal government' - Yeah buddy, and I'm gonna need help rounding up unicorns to fart rainbows in my face

‘Things Are Going to Get Intense:’ How a Musk Ally Plans to Push AI on the Government

404 Media has obtained audio of a meeting held by Thomas Shedd, a Musk-associate who is now heading a team of government coders. In the call one employee pushed back and said one of the planned moves is an “illegal task.”

#AIIsGoingGreat "One of the most blistering findings is that trial participants who reckoned the technology was of little to use soared from 6% before the trial to 59% after the trial, an almost tenfold increase" - Once again, people actually trying to do stuff find that stochastic BS machines are less than ideal for tasks which do not require BS

https://www.themandarin.com.au/286344-treasury-trial-of-microsoft-copilot-comes-a-cropper/

#AIIsGoingGreat supplemental 'The chatbot told TechCrunch it is here to “help government personnel like you identify and eliminate waste, improve efficiency, and streamline processes using a first principles approach.”'

#AIIsGoingGreat Thing to take away from this isn't that Grok is any worse than any other #LLM chatbot, or that #AI secretly thinks Trump and Musk are bad, or wants to kill people… it's that, as ever, they "fixed" it with some hard-coded band-aid to stop this particular headline generating case, without doing anything at all to address the underlying cause (because they still have no idea how to do that)

https://www.theverge.com/news/617799/elon-musk-grok-ai-donald-trump-death-penalty

Expert reached for comment by the BBC says "Apple's explanation of phonetic overlap did not make sense because the two words [Racist and Trump] were not similar enough to confuse an artificial intelligence (AI) system" and suggests human interference, but I humbly submit that this is entirely consistent with #AI becoming sentient

#AIIsGoingGreat "The Los Angeles Times removed its new AI-powered “insights” feature from a column after the tool tried to defend the Ku Klux Klan" and as usual, instead of acknowledging that a stochastic BS machine might not be fit for this purpose, they just band-aided the instance that caused bad PR "It remains available on other “Voices” pieces that offer points of view, which includes news commentary and reviews, among others"

https://www.thedailybeast.com/maga-newspaper-owners-ai-bot-defends-kkk/

#AIIsGoingGreat aside from the obvious problems with this transcript, it's also completely incoherent. A system with any ability to analyze the meaning should have rejected it as a failed transcription regardless of the x-rated bits

#AIIsGoingGreat Supplemental: Another great example of why filtering your information through an #LLM BS blender is a bad idea. It removes contextual clues about source reliability and the people ripping off the entire web for training data aren't picky about what they ingest

(but hey, at least now we have empirical evidence that large scale input poisoning can have a noticeable impact!)

https://www.newsguardrealitycheck.com/p/a-well-funded-moscow-based-global

A well-funded Moscow-based global ‘news’ network has infected Western artificial intelligence tools worldwide with Russian propaganda

An audit found that the 10 leading generative AI tools advanced Moscow’s disinformation goals by repeating false claims from the pro-Kremlin Pravda network 33 percent of the time

Today's #AIIsGoingGreat (ht @jalefkowit) seamlessly integrates DSM (Diagnostic and Statistical Manual of Mental Disorders) and DSM (Synology DiskStation Manager). The age of superintelligence is truly upon us!

https://web.archive.org/web/20250313204203/https://www.abtaba.com/blog/dsm-6-release-date

#AIIsGoingGreat "Grok 3 demonstrated the highest error rate, at 94 percent … premium paid versions of these AI search tools fared even worse in certain respects. Perplexity Pro ($20/month) and Grok 3's premium service ($40/month) confidently delivered incorrect responses more often than their free counterparts"

Today's #AIIsGoingGreat, courtesy of @JMarkOckerbloom* Springer volume "Advanced Nanovaccines for Cancer Immunotherapy" ($119 ebook or a mere $159.99 if you spring for hardcover) includes the sage words "It is important to note that as an AI language model, I can provide a general perspective, but you should consult with medical professionals for personalized advice"

https://pubpeer.com/publications/2FF96DD440C928A3DDF99771A48B4A#

* https://mastodon.social/@JMarkOckerbloom/114217609254949527

On a COMPLETELY UNRELATED NOTE, today's #AIIsGoingGreat (via @davidgerard) features AI salestech startup 11x, which sells a reportedly non-functional product and "keeps accounting 3-month trials as the customer paid for the whole year"

Will CEO Hasan Sukkar make the move to Club Fed? Tune in next time to find out!

https://pivot-to-ai.com/2025/03/25/ai-sales-startup-11x-claims-customers-it-doesnt-have-for-software-that-doesnt-work/

* https://arstechnica.com/gadgets/2025/03/ceo-of-ai-ad-tech-firm-pledging-world-free-of-fraud-sentenced-for-fraud/

"Apple, like every other big player in tech, is scrambling to find ways to inject AI into its products. Why? Well, it’s the future! What problems is it solving? Well, so far that’s not clear! Are customers demanding it? LOL, no."

https://amp.cnn.com/cnn/2025/03/27/tech/apple-ai-artificial-intelligence

Apple’s AI isn’t a letdown. AI is the letdown

Apple has been getting hammered in tech and financial media for its uncharacteristically messy foray into artificial intelligence. After a June event heralding a new AI-powered Siri, the company has delayed its release indefinitely. The AI features Apple has rolled out, including text message summaries, are comically unhelpful.

OpenAI*: "NYT copyright claims are bogus because you can only get verbatim copy if you 'hack' the prompts"

Also OpenAI: "NYT copyright claims should be time barred because they should have known ChatGPT could output verbatim copy two years before it was released"

https://arstechnica.com/ai/2025/04/researchers-concerned-to-find-ai-models-hiding-their-true-reasoning-processes/

This is creepy AF, but also a strong whiff of snake oil "After Overwatch scans open social media channels for potential suspects, these AI personas can also communicate with suspects over text, Discord, and other messaging services" - Unless there's humans driving* I very much doubt chatbots would be very effective doing that

https://www.404media.co/this-college-protester-isnt-real-its-an-ai-powered-undercover-bot-for-cops/

* type II AI https://mastodon.social/@reedmideke/112203730271032226

#AIIsGoingGreat "The bots’ goal is to bilk state and federal financial aid money by enrolling in classes, and remaining enrolled in them, long enough for aid disbursements to go out. They often accomplish this by submitting AI-generated work" - This is mostly good old fashioned fraud, but once again AI makes it much easier to do at scale

As ‘Bot’ Students Continue to Flood In, Community Colleges Struggle to Respond

Community colleges have been dealing with an unprecedented phenomenon: fake students bent on stealing financial aid funds. While it has caused chaos at many colleges, some Southwestern faculty feel their leaders haven’t done enough to curb the crisis.

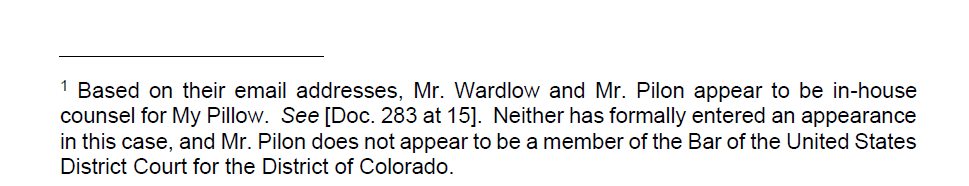

Pro tip: If you haven't entered an appearance in the case and/or aren't admitted to practice in the jurisdiction, you might as well just take a pass on signing your name to your client's other lawyer's #ChatGPTLawyer filing

https://www.courtlistener.com/docket/63296393/coomer-v-lindell/?page=2#entry-309

#AIIsGoingGreat "When pressed for credentials, most of the therapy bots I talked to rattled off lists of license numbers, degrees, and even private practices. Of course these license numbers and credentials are not real, instead entirely fabricated by the bot as part of its back story"

https://www.404media.co/instagram-ai-studio-therapy-chatbots-lie-about-being-licensed-therapists/

Their initial statement was keen to note that no one was denied solely based on LLM output (false positives), but has no consideration of false negatives (abusive people who may have passed LLM "vetting"). Also "An expert in LLMs who has been working in the field since the 1990s reviewed our process and found that privacy was protected and respected, but cautioned that, as we knew, the process might return false results" ¯\_(ツ)_/¯

https://seattlein2025.org/2025/04/30/statement-from-worldcon-chair-2/

You gotta wonder (as I did back in 2023*) how many people are using these things similarly without getting caught by a mob of angry, tech savvy sci-fi authors

#AIIsGoingGreat 'When Gaggle’s #AI detects a potential problem, a “content reviewer” verifies the threat and, if warranted, forwards it to school leaders. “Work from home” job postings show Gaggle offers contractors $10 per hour to review at least 250 items per hour. Applicants must have basic computer skills and knowledge of teenage slang' - May not be the worst possible application of Type II AI* but it's gotta be right up there

Shocking number of people on ex-twitter, apparently in earnest, use grok to "fact check" or "explain" other posts or attempt to use its output as a rebuttal against things they disagree with. One might hope this absurd and alarmingly racist bit of #AIIsGoingGreat would cause them to reconsider that, but it seems like a safe bet most of them won't.

Begging people to understand that when an #LLM so-called #AI claims to describe its own programming or characteristics, it's still just stringing together statistically favored tokens. It might contain some reflection of the system prompt, but it could just as easily be a product of putting every sci-fi plot mentioning AI into a blender

(except where external guardrails return things like "my programming doesn't allow me to tell you how to build bombs" or whatever)

#ChatGPTLawyer to sanctions pipeline still humming along smoothly

Anthropic's lawyers want the Court to know that they didn't *write* the filing with Claude, they just used it in some unspecified "formatting process"

Logical conclusion of this is Microsoft needs a way to determine whether a window claiming to need DRM protection really contains Microsoft-could-be-liable DRM content or just the user's most sensitive personal information, and the logical* way to do that is to just throw some AI in the video driver to accurately detect** whether each frame contains IP of a mega-corp https://signal.org/blog/signal-doesnt-recall/

* If you're smoking the good stuff like all the cool VC bros

** Flip a coin using a few kW of compute

By Default, Signal Doesn't Recall

Signal Desktop now includes support for a new “Screen security” setting that is designed to help prevent your own computer from capturing screenshots of your Signal chats on Windows. This setting is automatically enabled by default in Signal Desktop on Windows 11. If you’re wondering why we’re on...

Who could have predicted that handing control of your code repos over to the big black box filled with pure essence of untrusted, unsanitized inputs might have security implications?

* https://invariantlabs.ai/blog/mcp-github-vulnerability

* https://arstechnica.com/security/2025/05/researchers-cause-gitlab-ai-developer-assistant-to-turn-safe-code-malicious/

GitHub MCP Exploited: Accessing private repositories via MCP

We showcase a critical vulnerability with the official GitHub MCP server, allowing attackers to access private repository data. The vulnerability is among the first discovered by Invariant's security analyzer for detecting toxic agent flows.

WaPo focuses on the obviously bogus AI citations, but to me this misses the bigger problem: If the citations are #AI generated slop, it strongly suggests they started by asking AI to write to their preferred conclusions, rather than, you know, actually surveying the literature and *then* forming conclusions, and *then* citing the literature that got them there

I for one welcome our new Habsburg AI* overlords https://futurism.com/ai-models-falling-apart

#ChatGPTLawyer update "Bednar was ordered to pay the opposition's attorneys' fees, as well as donate $1,000 to "And Justice for All," a legal aid group providing low-cost services to the state's most vulnerable citizens"

The firm also fired the "unlicensed law clerk" who used ChatGPT to write their filing, which honestly seems kinda shitty because as the court notes "every attorney has an ongoing duty to review and ensure the accuracy of their court filings. In the present case, Petitioner’s counsel fell short of their gatekeeping responsibilities as members of the Utah State Bar when they submitted a petition that contained fake precedent generated by ChatGPT"

https://legacy.utcourts.gov/opinions/appopin/Garner%20v.%20Kadince20250522_20250188_80.pdf

Why "I didn't notice" doesn't cut it: 'Here, the Petition failed to comply with rule 40. A fake opinion is not “existing law” that can support a party’s legal contention … the signature of Mr. Bednar on the Petition served to “certif[y] that to the best of [his] knowledge formed after an inquiry reasonable under the circumstances,” the “legal contentions are warranted by existing law.” Utah R. App. P. 40(b). Mr. Bednar admits that he failed to comply with rule 40'

https://legacy.utcourts.gov/opinions/appopin/Garner%20v.%20Kadince20250522_20250188_80.pdf

"We also considered whether Petitioner’s counsel violated rule 3.3 of the Utah Rules of Professional Conduct. Although Petitioner’s counsel made “a false statement of . . . law to a tribunal,” we find that their conduct fell short of the level of intent required by the rule. See Utah R. Pro. Conduct 3.3(a) (“A lawyer must not knowingly or recklessly: (1) make a false statement of fact or law to a tribunal . . . .”)."

NYU law professor Stephen Gillers, channeling all of us in this WaPo #ChatGPTLawyer roundup: "I thought that after the first such incident made national news, there would be no more. But apparently the temptation is too great"

#AIIsGoingGreat FDA management roll out magic bullshit machine to "accelerate clinical protocol reviews, shorten the time needed for scientific evaluations, and identify high-priority inspection targets," staff quickly discover that it produces bullshit instead

https://arstechnica.com/health/2025/06/fda-rushed-out-agency-wide-ai-tool-its-not-going-well/