Today's #AIIsGoingGreat "…results from a hard-coded filter that puts the brakes on the AI model's output before returning it to the user" - Demonstrating once again that despite setting hundreds of billions of dollars on fire, #LLM #AI companies have no idea how to solve the "hallucination" (aka making shit up) problem in the general case. Their best solution is hard-coded checks for individual phrases that might expose them to excessive legal costs

Today's #AIIsGoingGreat: Hard to see how drowning volunteer developers in #AI slop vulnerability reports could possibly go wrong. Great work everyone, throw another billion on the #LLM BS machine bonfire to celebrate!

#AIIsGoingGreat: 'correspondence seen by TechCrunch shows that previously, the guidelines read: “If you do not have critical expertise (e.g. coding, math) to rate this prompt, please skip this task.”

But now the guidelines read: “You should not skip prompts that require specialized domain knowledge.” Instead, contractors are being told to “rate the parts of the prompt you understand” and include a note that they don’t have domain knowledge'

https://www.404media.co/metas-ai-profiles-are-indistinguishable-from-terrible-spam-that-took-over-facebook/

Today's #AIIsGoingGreat via @telescoper: As he notes, Google used to be quite OK for this kind of thing. Sure, you still needed to check whether the top result was from a reliable source, but it usually was, and unlike results run through the #LLM BS blender, you could do so at a glance

Altman's latest blog strikes me as a lot of hand-wavy CEO-speak, but I actually agree with this "in 2025, we may see the first AI agents “join the workforce” and materially change the output of companies" … with the small caveat that the average "material change" is unlikely to be in a positive direction

Meanwhile, Apple responds to the predictable result of running notifications through a blender with #LLM BS: "Apple Intelligence features are in beta and we are continuously making improvements with the help of user feedback… A software update in the coming weeks will further clarify when the text being displayed is summarization provided by Apple Intelligence"

This whole thread of #Google #AIIsGoingGreat with fractions is a good illustration of why I'm skeptical of the "sure, it has bugs, but they're fixing them, just like any other software" takes. IMO you can't band-aid a system with no concept of what a fraction is to get this right in the general case, and even if you somehow recognize questions about fractions, there's an unlimited number of other cases where autocomplete is similarly inappropriate

https://mastodon.social/@lauren@mastodon.laurenweinstein.org/113771300586021845

This one with 25.4 == 1 in particular is a great example of how probabilistic completions go off the rails

https://mastodon.social/@[email protected]/113772004311087469

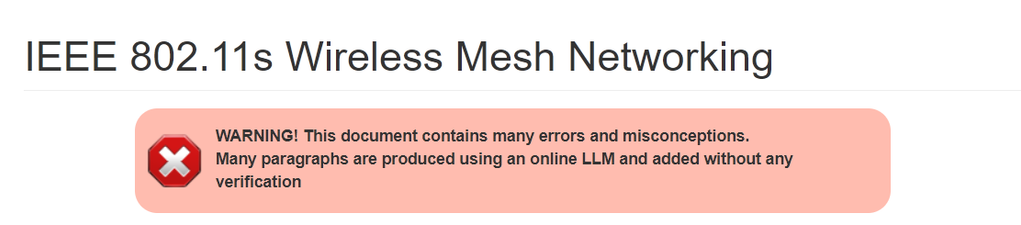

802.11*sigh*

https://openwrt.org/docs/guide-user/network/wifi/mesh/80211s

(see edit history for a good time)

One objection #AI pessimists hear a lot is that big tech execs wouldn't be dumping billions into it if it were as bad as people say, because, you know, they're smart guys, right? Anyway…

LOL, bots mindlessly boosting every f-ing post in this thread with the #AI tag is 👨🏻‍🍳🤌

(also, what's the point of a bot that just boosts posts with a hashtag? Do they not know people can follow hashtags?)

Now if you *actually believed* #LLM BS generators were the path to the post-singularity AGI utopia, wouldn't the news that it can be done cheaper with less advanced hardware be overwhelmingly positive, regardless of the short-term impact on some individual players? Shouldn't all the #AI bros be celebrating?

OTOH, if you were running an elaborate pump and dump involving some individual players, it might be kinda bad news

Translation: Sales of the latest high-end, resource-intensive models were so bad, Microsoft decided they might as well just eat the cost in hopes of driving adoption

https://www.theverge.com/news/603149/microsoft-openai-o1-model-copilot-think-deeper-free

Who decided to call it "Stargate" when "Monorail" was right there? https://www.theverge.com/openai/603952/sam-altman-stargate-ai-data-center-plan-hype-funding

#AIIsGoingGreat 'He said, for example, that he would need help creating “AI coding agents” that would write software across the entire federal government' - Yeah buddy, and I'm gonna need help rounding up unicorns to fart rainbows in my face

‘Things Are Going to Get Intense:’ How a Musk Ally Plans to Push AI on the Government

404 Media has obtained audio of a meeting held by Thomas Shedd, a Musk-associate who is now heading a team of government coders. In the call one employee pushed back and said one of the planned moves is an “illegal task.”

#AIIsGoingGreat "One of the most blistering findings is that trial participants who reckoned the technology was of little to use soared from 6% before the trial to 59% after the trial, an almost tenfold increase" - Once again, people actually trying to do stuff find that stochastic BS machines are less than ideal for tasks that do not require BS

https://www.themandarin.com.au/286344-treasury-trial-of-microsoft-copilot-comes-a-cropper/

#AIIsGoingGreat supplemental 'The chatbot told TechCrunch it is here to “help government personnel like you identify and eliminate waste, improve efficiency, and streamline processes using a first principles approach.”'

#AIIsGoingGreat Thing to take away from this isn't that Grok is any worse than any other #LLM chatbot, or that #AI secretly thinks Trump and Musk are bad, or wants to kill people… it's that, as ever, they "fixed" it with some hard-coded band-aid to stop this particular headline-generating case, without doing anything at all to address the underlying cause (because they still have no idea how to do that)

https://www.theverge.com/news/617799/elon-musk-grok-ai-donald-trump-death-penalty

Expert reached for comment by the BBC says "Apple's explanation of phonetic overlap did not make sense because the two words [Racist and Trump] were not similar enough to confuse an artificial intelligence (AI) system" and suggests human interference, but I humbly submit that this is entirely consistent with #AI becoming sentient

#AIIsGoingGreat "The Los Angeles Times removed its new AI-powered “insights” feature from a column after the tool tried to defend the Ku Klux Klan" and as usual, instead of acknowledging that a stochastic BS machine might not be fit for this purpose, they just band-aided the instance that caused bad PR: "It remains available on other “Voices” pieces that offer points of view, which includes news commentary and reviews, among others"

https://www.thedailybeast.com/maga-newspaper-owners-ai-bot-defends-kkk/

#AIIsGoingGreat aside from the obvious problems with this transcript, it's also completely incoherent. A system with any ability to analyze the meaning should have rejected it as a failed transcription regardless of the x-rated bits

#AIIsGoingGreat Supplemental: Another great example of why filtering your information through an #LLM BS blender is a bad idea. It removes contextual clues about source reliability, and the people ripping off the entire web for training data aren't picky about what they ingest

(but hey, at least now we have empirical evidence that large scale input poisoning can have a noticeable impact!)

https://www.newsguardrealitycheck.com/p/a-well-funded-moscow-based-global

A well-funded Moscow-based global ‘news’ network has infected Western artificial intelligence tools worldwide with Russian propaganda

An audit found that the 10 leading generative AI tools advanced Moscow’s disinformation goals by repeating false claims from the pro-Kremlin Pravda network 33 percent of the time

Today's #AIIsGoingGreat (ht @jalefkowit) seamlessly integrates DSM (Diagnostic and Statistical Manual of Mental Disorders) and DSM (Synology DiskStation Manager). The age of superintelligence is truly upon us!

https://web.archive.org/web/20250313204203/https://www.abtaba.com/blog/dsm-6-release-date

#AIIsGoingGreat "Grok 3 demonstrated the highest error rate, at 94 percent … premium paid versions of these AI search tools fared even worse in certain respects. Perplexity Pro ($20/month) and Grok 3's premium service ($40/month) confidently delivered incorrect responses more often than their free counterparts"

Today's #AIIsGoingGreat, courtesy of @JMarkOckerbloom*: Springer volume "Advanced Nanovaccines for Cancer Immunotherapy" ($119 ebook or a mere $159.99 if you spring for hardcover) includes the sage words "It is important to note that as an AI language model, I can provide a general perspective, but you should consult with medical professionals for personalized advice"

https://pubpeer.com/publications/2FF96DD440C928A3DDF99771A48B4A#

* https://mastodon.social/@JMarkOckerbloom/114217609254949527

On a COMPLETELY UNRELATED NOTE, today's #AIIsGoingGreat (via @davidgerard) features AI salestech startup 11x, which sells a reportedly non-functional product and "keeps accounting 3-month trials as the customer paid for the whole year"

Will CEO Hasan Sukkar make the move to Club Fed? Tune in next time to find out!

https://pivot-to-ai.com/2025/03/25/ai-sales-startup-11x-claims-customers-it-doesnt-have-for-software-that-doesnt-work/

* https://arstechnica.com/gadgets/2025/03/ceo-of-ai-ad-tech-firm-pledging-world-free-of-fraud-sentenced-for-fraud/

@reedmideke we wrote about Kubient too when Roberts pleaded guilty: https://pivot-to-ai.com/2024/09/18/kubients-adtech-use-case-for-ai-an-excuse-for-a-fraud/

this podcast about the case (37:34 on) is amazing: https://podcasts.apple.com/us/podcast/episode-48-anne-coghlan-from-scope-3-on-measuring-and/id1615989259?i=1000637275912

@davidgerard Indeed, I featured that post in the thread at the time https://mastodon.social/@reedmideke/113160781568746961

(not blaming you for not reading the whole thing, LOL, putting all my AI dunks in one thread is definitely Using Mastodon Wrong)