If anybody is wondering about the impact of the Crowdstrike thing: it’s really bad. Machines don’t boot.

The recovery is to boot in safe mode, log in as local admin and delete things, which isn’t automatable. Basically, Crowdstrike will be in very hot water.

You know it was coming...

Crowdstrike's BSOP theme tune

I've obtained copies of the .sys driver files Crowdstrike customers have. They're garbage. Each customer appears to have a different one.

They trigger an issue that causes Windows to blue screen.

I am unsure how these got pushed to customers. I think Crowdstrike might have a problem.

For any orgs in recovery mode, I'd suspend auto updates of CS for now.

The .sys files causing the issue are channel update files; they cause the top-level CS driver to crash because they're invalidly formatted. It's unclear how or why Crowdstrike delivered the files, and I'd pause all Crowdstrike updates temporarily until they can explain.
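To make "invalidly formatted files crash the driver" concrete, here is a minimal Python sketch of a loader that checks a data file's structure before trusting it, so a malformed file is rejected instead of being handed to code that assumes it is well formed. The file layout (a `CHNL` magic value, a record count, fixed-size records) and every name below are hypothetical; the real channel-file format isn't described in this thread, and an actual kernel driver would be written in C, not Python.

```python
"""Hypothetical sketch: validate a content ("channel") file before using it.

The layout is invented for illustration: 4-byte magic, 4-byte little-endian
record count, then fixed-size records of (u32 id, u16 flags).
"""
import struct

MAGIC = b"CHNL"                 # hypothetical magic bytes
HEADER = struct.Struct("<4sI")  # magic, record count
RECORD = struct.Struct("<IH")   # hypothetical record: id (u32), flags (u16)


class MalformedContent(ValueError):
    """Raised instead of letting a bad file reach code that trusts it."""


def parse_channel_file(blob: bytes) -> list[tuple[int, int]]:
    if len(blob) < HEADER.size:
        raise MalformedContent("file shorter than header")
    magic, count = HEADER.unpack_from(blob, 0)
    if magic != MAGIC:
        raise MalformedContent("bad magic")
    expected = HEADER.size + count * RECORD.size
    if len(blob) != expected:
        raise MalformedContent(f"expected {expected} bytes, got {len(blob)}")
    # Only now is it safe to walk the records: every offset is in bounds.
    return [
        RECORD.unpack_from(blob, HEADER.size + i * RECORD.size)
        for i in range(count)
    ]
```

The design point is simply that every length and offset is checked against the actual buffer before any record is read, so a file full of garbage fails with an error rather than sending the parser off the end of the data.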

Spoiler: this is going to turn out to be the biggest 'cyber' incident ever in terms of impact, as recovery is so difficult.

I'm seeing people posting scripts for automated recovery. Scripts don't work if the machine won't boot (it causes an instant BSOD); you still need to manually boot the system in safe mode, get through BitLocker recovery (which needs a per-system key), then execute anything.
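At scale, the per-system BitLocker key becomes a lookup problem. A minimal Python sketch, assuming the recovery keys have already been exported to a CSV with the hypothetical columns `hostname`, `key_id` and `recovery_password`: match the key ID the recovery screen displays (or its leading characters) against that export. The column names and the export itself are assumptions, not a real tool's format.

```python
"""Hypothetical sketch: find a BitLocker recovery password by key ID.

Assumes an exported inventory CSV with columns hostname, key_id and
recovery_password (column names are illustrative, not a real export format).
"""
import csv
from typing import TextIO


def _normalise(key_id: str) -> str:
    # Key IDs may be shown with or without braces and in either case.
    return key_id.strip().strip("{}").upper()


def find_recovery_passwords(inventory: TextIO, key_id_prefix: str) -> list[tuple[str, str]]:
    """Return (hostname, recovery_password) pairs whose key_id starts with the prefix."""
    prefix = _normalise(key_id_prefix)
    if not prefix:
        raise ValueError("empty key ID would match every machine")
    return [
        (row["hostname"], row["recovery_password"])
        for row in csv.DictReader(inventory)
        if _normalise(row["key_id"]).startswith(prefix)
    ]
```

Returning a list rather than a single value is deliberate: a short prefix could match more than one machine, and the person at the keyboard should see that rather than get the wrong key.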

Crowdstrike are huge; at a global scale that's going to take... some time.

Crowdstrike statement: https://www.bbc.co.uk/news/live/cnk4jdwp49et?post=asset%3A0c379e1f-48df-493c-a11a-f6b1e3d1eb63#post

Basically 'it's not a security incident... we just bricked a million systems'

For anybody wondering why Microsoft keep ending up in the frame: they had an Azure outage, and (this may be news to some people) a lot of Microsoft support staff are actually external vendors, e.g. TCS, Mindtree, Accenture etc.

Some of those vendors use Crowdstrike, and so those support staff have no systems.

But MS isn’t the outage cause today.

@steve @GossiTheDog The Register says no: https://www.theregister.com/2024/07/19/crowdstrike_shares_sink_as_global/

Edit: Wikipedia says there were two Azure incidents, one unrelated on the 18th, one right after the Crowdstrike update. https://en.m.wikipedia.org/wiki/2024_CrowdStrike_incident#Outage

Had an outage on our Azure MFA, but it's hard to say whether it's related or not; who knows what's running inside the service.

@hittitezombie @steve @GossiTheDog Microsoft told BBC they fixed the “underlying issue” but I don’t know if MS meant for the Azure problem or for Crowdstrike triggering the BSOD.

https://www.bbc.com/news/live/cnk4jdwp49et?post=asset%3Ad69abf29-6c37-4b11-8335-f73fe5b03f3d#post

@GossiTheDog I don't know how to use this platform, but you seem to. Here is a semi-automatic way that I solved this on 1000 machines in 30 minutes.

Copy your WinPE image with your custom drivers (or a bare one from the ADK) to your system.

Mount it with wimlib.

Edit startnet.cmd and add:

```bat
del C:\Windows\System32\drivers\CrowdStrike\C-00000291*.sys
exit
```

Unmount the image.

Put the image in your PXE loader OR make it a bootable USB in Rufus.
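The same deletion can be sketched in Python for the case where the affected system volume is reachable from another machine. This assumes the disk is already mounted there and, if BitLocker-protected, already unlocked; the function name and the `dry_run` default are illustrative choices, while the path and wildcard mirror the `del` command in the WinPE steps.

```python
"""Hypothetical sketch: remove the bad channel files from an offline Windows volume.

Assumes the affected system drive is mounted at volume_root and already
unlocked if BitLocker-protected. Path and pattern mirror the del command.
"""
from pathlib import Path

PATTERN = "C-00000291*.sys"  # same wildcard as the del command
RELATIVE_DIR = Path("Windows", "System32", "drivers", "CrowdStrike")


def remove_bad_channel_files(volume_root: Path, dry_run: bool = True) -> list[Path]:
    """Return the matching files; delete them only when dry_run is False."""
    target_dir = volume_root / RELATIVE_DIR
    if not target_dir.is_dir():
        return []  # not a Windows volume with Crowdstrike installed
    matches = sorted(target_dir.glob(PATTERN))
    if not dry_run:
        for path in matches:
            path.unlink()
    return matches
```

Defaulting to a dry run means you can list what would be deleted before committing. Note that `Path.glob` may be case-sensitive when run on a non-Windows host.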

Save an assload of time.

@JaxxAI @GossiTheDog Yes, it should, unless they changed it to a model where each driver has a dedicated address space and can't alter memory belonging to other parts of the kernel. You should not catch "corrupted memory" exceptions and ignore them, as you have no idea whether that corrupted memory is important to the rest of the system.

The same applies to most widely used kernels such as Linux.

Running drivers outside the kernel is a great idea, but it's hard to do without a performance cost. #Redox makes a pretty decent attempt.

@JaxxAI @GossiTheDog so can Windows, and it will use the last known good configuration. But unfortunately there are exceptions.

And in many cases, you can't know who caused an issue. The altered memory may not be attributed to the faulting driver at all.

@JaxxAI @GossiTheDog I don't disagree with your wish.

I'm just trying to point out that this is no better elsewhere. On Windows, a driver must be signed to be loaded, either via a catalogue file or with a signature embedded in the file itself.

I'm out traveling, so I can't verify it myself, but in this case I suspect it's a data file named like a driver, and that another, signed driver loads it. Nothing would stop this from happening on other operating systems.

@gigantos @JaxxAI @GossiTheDog I mean, when you're building a security product, you definitely want the system to just *load without it* when it's tampered with, right?

There's an argument to be made that your security product crashing the system when it isn't able to function is a feature, not a bug.
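The trade-off in these replies (falling back to a last known good configuration versus a security product refusing to run without valid content) can be sketched as an explicit policy choice. This is a hypothetical Python illustration of the two options, not a description of what Windows or Crowdstrike actually do; all names are made up.

```python
"""Hypothetical sketch: what a content loader could do when an update fails validation.

FAIL_OPEN keeps running on the last known good content; FAIL_CLOSED refuses
to proceed. Neither is claimed to be what Crowdstrike or Windows actually does.
"""
from enum import Enum
from typing import Callable


class Policy(Enum):
    FAIL_OPEN = "keep last known good"
    FAIL_CLOSED = "refuse to run"


def choose_content(new: bytes, last_good: bytes,
                   validate: Callable[[bytes], bool], policy: Policy) -> bytes:
    if validate(new):
        return new  # update looks sane: use it
    if policy is Policy.FAIL_OPEN:
        return last_good  # keep protecting with the previous content
    # Fail closed: refuse to proceed with content that failed validation.
    raise RuntimeError("content update failed validation; refusing to run")
```

Either way, the decision is made after validation and in a controlled place, which is the part that matters regardless of which side of the argument you take.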

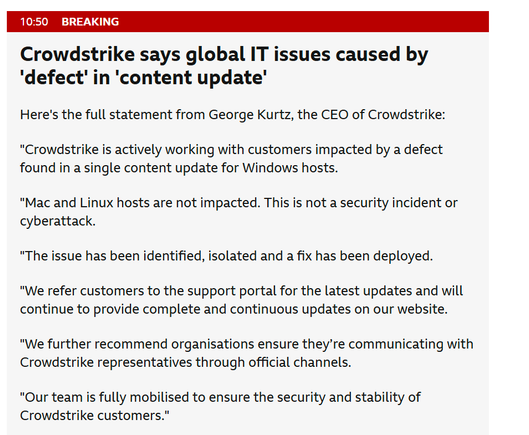

Crowdstrike says global IT issues caused by 'defect in content update'

Here's the full statement from George Kurtz, the CEO of Crowdstrike:

"Crowdstrike is actively working with customers impacted by a defect found in a single content update for Windows hosts.

"Mac and Linux hosts are not impacted. This is not a security incident or cyberattack.

"The issue has been identified, isolated and a fix has been deployed.

"We refer customers to the support portal for the latest updates and will continue to provide complete and continuous updates on our website.

"We further recommend organisations ensure they're communicating with Crowdstrike representatives through official channels.

"Our team is fully mobilised to ensure the security and stability of Crowdstrike customers."

I love how 'bricked' has become the new 'fucked'.

@GossiTheDog

A million systems?

29k customers, and assuming 5k boxes per customer == 145 million boxes.

@alslater

Plenty of door stops.

@a_lex_ander @GossiTheDog A LAPS password is still a local password with no network requirement etc.; it's just a managed local password that's unique per system and automatically rotated on a schedule.

So if, IF, your AD is still up and you're a user with the LAPS authorisations you can retrieve what those managed passwords are.

LDAP needs to be recovered first, otherwise BitLocker keys are unavailable as well.

Clients are useless until AD/LDAP is available.
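The dependency argument here (clients need LAPS passwords and BitLocker keys, which need AD reachable over LDAP) is effectively a recovery ordering. A minimal Python sketch using the standard library's `graphlib`; the service names and the graph itself are illustrative, built only from the points made in these replies.

```python
"""Hypothetical sketch: derive a recovery order from service dependencies.

The graph is illustrative: clients need LAPS passwords and BitLocker
recovery keys, which are retrieved from AD over LDAP.
"""
from graphlib import TopologicalSorter

# Each key depends on the services in its set, which must be recovered first.
DEPENDENCIES = {
    "domain controllers (AD/LDAP)": set(),
    "LAPS passwords": {"domain controllers (AD/LDAP)"},
    "BitLocker recovery keys": {"domain controllers (AD/LDAP)"},
    "client machines": {"LAPS passwords", "BitLocker recovery keys"},
}


def recovery_order(deps: dict[str, set[str]]) -> list[str]:
    # static_order() yields each node only after all of its dependencies.
    return list(TopologicalSorter(deps).static_order())


for step, service in enumerate(recovery_order(DEPENDENCIES), start=1):
    print(step, service)
```

`TopologicalSorter` also raises `graphlib.CycleError` if the graph contains a cycle, which in practice would flag a circular recovery dependency.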

Surely purely academic at this point, but shouldn't a partially scripted solution be technically possible using PXE boot? Just curious.

LetheTheForgotten (@LetheForgot) on X

@SwiftOnSecurity What we did was use the advanced restart options to launch the command prompt, skip the BitLocker key prompt (which then brought us to drive X:) and run "bcdedit /set {default} safeboot minimal", which let us boot into safe mode and delete the .sys file causing the BSOD.

@GossiTheDog They claim 24,000 customers. If each customer averages 1,000 machines, that's 24 million machines needing manual intervention.
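The two back-of-envelope fleet estimates in this thread (29k customers at 5k boxes each, and 24,000 customers at 1,000 machines each) differ mainly in their assumptions. A trivial Python check of the arithmetic; the inputs are the posters' assumptions, not measured figures.

```python
"""Back-of-envelope check of the fleet-size estimates in this thread.

Customer counts and machines-per-customer are the posters' assumptions.
"""


def estimated_machines(customers: int, machines_per_customer: int) -> int:
    return customers * machines_per_customer


for customers, per_customer in [(29_000, 5_000), (24_000, 1_000)]:
    total = estimated_machines(customers, per_customer)
    print(f"{customers:,} customers x {per_customer:,} machines = {total:,}")
# 29,000 customers x 5,000 machines = 145,000,000
# 24,000 customers x 1,000 machines = 24,000,000
```

A six-fold difference between the two answers comes almost entirely from the machines-per-customer guess, which is the number nobody in the thread actually knows.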

Even more fun for those attached to the ceiling of an airport...

This is gonna take days if not weeks to fully fix...