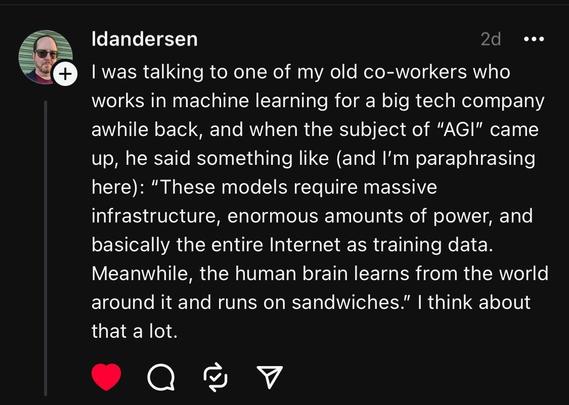

@Jyoti Yeah, whenever I see some evangelist prattling on about AGI, I think about how big-time LLMs are currently using about the maximum amount of resources any computing project plausibly *could*.

And none of those resources are getting cheaper; in fact some (like water) are only going to get *more* expensive.

If AI companies want to build something that's several generations beyond big-time LLMs in scale and reasoning ability, like AGI, they may as well be aspiring to build a 20 km skyscraper.